For most of us, the problem isn't a lack of information; it's too much of it. We follow a dozen RSS feeds, subscribe to twenty Telegram channels, and check Hacker News every morning. The result is "information fatigue." The solution isn't to stop following these sources, but to change how we consume them. By using a pipeline that pulls data, processes it with AI, and pushes it to a channel, you can effectively outsource your news curation to a machine.

The Pull-Process-Push Architecture

To get this working, you don't need a massive server farm. Most implementations follow a simple three-stage pipeline. First comes the "Pull" stage, where the automation connects to RSS feeds: standardized web feeds that let users and applications read a website's updates in a machine-readable format. Whether the source is Google News, a tech blog, or a government portal, the system fetches each feed and extracts the latest headlines and links.
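As a rough illustration of the Pull stage, the Python sketch below fetches a feed with the standard library and extracts title/link pairs. It assumes RSS 2.0 (`<item>` elements); Atom feeds use a different structure, which is one reason libraries such as `feedparser` are usually preferred in practice. The function names `parse_rss` and `fetch_feed` are illustrative, not taken from any particular project.

```python
from __future__ import annotations

import urllib.request
import xml.etree.ElementTree as ET


def parse_rss(xml_data: str | bytes) -> list[dict]:
    """Return title/link pairs for every <item> in an RSS 2.0 document."""
    root = ET.fromstring(xml_data)
    return [
        {
            "title": (item.findtext("title") or "").strip(),
            "link": (item.findtext("link") or "").strip(),
        }
        for item in root.iter("item")
    ]


def fetch_feed(url: str, timeout: float = 10.0) -> list[dict]:
    """Download a feed over HTTP (network access required) and parse it."""
    with urllib.request.urlopen(url, timeout=timeout) as resp:
        # Pass raw bytes so the parser honours the feed's declared encoding.
        return parse_rss(resp.read())
```

Calling `fetch_feed("https://example.com/feed.xml")` (a placeholder URL) would return a list of dictionaries that the later stages can consume.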

Next comes the "Process" stage, where the real work happens. Here, AI filters out the junk and groups related stories. If three different outlets report on a new OpenAI model release, the AI recognizes that they describe the same event. It clusters these articles together, removes the duplicated information, and writes a single, unified summary, so you don't read the same story repeated across every outlet you follow.
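Before any AI is involved, a cheap deterministic pass can drop exact duplicates, such as the same article syndicated with different tracking parameters. This hedged sketch assumes items shaped like `{"title": ..., "link": ...}` and treats links that differ only in the case of the scheme or host, a trailing slash, a fragment, or `utm_*` query parameters as the same article. Recognizing that different outlets are covering the same event still requires the semantic clustering described above.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def normalize_url(url: str) -> str:
    """Canonicalize a link: lower-case scheme/host, drop utm_* params, fragment, trailing slash."""
    parts = urlsplit(url.strip())
    query = urlencode(
        [(k, v) for k, v in parse_qsl(parts.query) if not k.startswith("utm_")]
    )
    return urlunsplit(
        (parts.scheme.lower(), parts.netloc.lower(), parts.path.rstrip("/"), query, "")
    )


def dedupe(items: list[dict]) -> list[dict]:
    """Keep the first item for each normalized link, preserving feed order."""
    seen = set()
    unique = []
    for item in items:
        key = normalize_url(item["link"])
        if key not in seen:
            seen.add(key)
            unique.append(item)
    return unique
```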

Finally, the "Push" stage sends that polished summary through a Telegram bot. The bot acts as the delivery vehicle, posting the formatted briefing to a specific chat ID at a time you've set in advance, such as 8:00 AM every weekday.
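The Push step maps onto the Telegram Bot API's `sendMessage` method, which takes a `chat_id` and a `text`. A minimal standard-library sketch follows. The token and chat ID are placeholders you would obtain yourself, and error handling and retries are omitted.

```python
from __future__ import annotations

import json
import urllib.request


def build_send_request(token: str, chat_id: str | int, text: str) -> urllib.request.Request:
    """Build a POST request for the Bot API sendMessage method."""
    url = f"https://api.telegram.org/bot{token}/sendMessage"
    payload = json.dumps({"chat_id": chat_id, "text": text}).encode("utf-8")
    return urllib.request.Request(
        url, data=payload, headers={"Content-Type": "application/json"}, method="POST"
    )


def send_message(token: str, chat_id: str | int, text: str) -> dict:
    """Send the message (network access required) and return Telegram's JSON reply."""
    with urllib.request.urlopen(build_send_request(token, chat_id, text), timeout=10) as resp:
        return json.load(resp)
```

Keeping request construction separate from sending makes it easy to inspect what would be posted before pointing the script at a real chat.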

Different Ways to Build Your News Engine

Depending on your budget and technical skill, there are a few ways to set this up. Some people prefer a "zero-cost" approach: a Python script run on a schedule by cron, the standard time-based job scheduler on Unix-like systems. Such scripts can pull from dozens of feeds and post the headlines in a clean format without any paid API calls. They are fast and reliable, though they lack the nuanced summarization that a Large Language Model (LLM) provides.
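For the zero-cost route, the script can format the headlines itself instead of calling an LLM. The sketch below builds a numbered plain-text digest and splits it into chunks, because Telegram caps a single text message at 4,096 characters. The default title and the crontab line in the comment (including the script path) are illustrative assumptions.

```python
# Example crontab entry (hypothetical path): run at 07:00, Monday to Friday.
#   0 7 * * 1-5 /usr/bin/python3 /home/you/news/digest.py

TELEGRAM_LIMIT = 4096  # maximum characters in one Telegram text message


def format_digest(items: list[dict], title: str = "Morning briefing") -> list[str]:
    """Render items as a numbered list, split into Telegram-sized chunks.

    A single entry longer than the limit is not split further.
    """
    chunks = []
    current = title
    for i, item in enumerate(items, start=1):
        entry = f"{i}. {item['title']}\n{item['link']}"
        candidate = f"{current}\n\n{entry}"
        if len(candidate) > TELEGRAM_LIMIT:
            chunks.append(current)  # current chunk is full; start a new one
            current = entry
        else:
            current = candidate
    chunks.append(current)
    return chunks
```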

If you want higher quality, you can integrate OpenAI GPT models. By sending the clustered text to the OpenAI API, the system can synthesize multiple perspectives. For example, if one source claims a new product is "revolutionary" and another calls it "overpriced," the AI can highlight this contradiction in your briefing. This gives you a more balanced view of the news than a simple aggregation tool ever could.
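Here is a hedged sketch of the GPT step using the official `openai` Python package (v1-style client). It assumes the package is installed and `OPENAI_API_KEY` is set; the model name is a placeholder, and the `text` field on each article is an assumption about what your Pull stage stores. Message construction lives in its own function so it can be inspected without calling the API.

```python
def build_messages(articles: list[dict]) -> list[dict]:
    """Number each source so the model can attribute agreements and contradictions."""
    sources = "\n\n".join(
        f"[{i}] {a['title']}\n{a.get('text', '')}" for i, a in enumerate(articles, start=1)
    )
    return [
        {"role": "system", "content": "You write concise, neutral news briefings."},
        {
            "role": "user",
            "content": "These reports describe one event. Summarize it in one paragraph "
            "and point out where the sources contradict each other.\n\n" + sources,
        },
    ]


def summarize_cluster(articles: list[dict], model: str = "gpt-4o-mini") -> str:
    """Send one cluster to the Chat Completions API and return the summary text."""
    from openai import OpenAI  # lazy import: only needed when actually calling the API

    client = OpenAI()  # reads OPENAI_API_KEY from the environment
    response = client.chat.completions.create(model=model, messages=build_messages(articles))
    return response.choices[0].message.content
```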

For those who hate coding, no-code platforms like n8n offer a visual way to build these workflows. You essentially drag and drop "nodes" to connect your RSS feed to your AI model and then to your Telegram bot. It's a great middle ground for marketers or product managers who want the power of AI without writing 500 lines of code.

| Method | Cost | Complexity | Summary Quality | Best For |
|---|---|---|---|---|
| Python + Cron | Free | Medium | Low (Basic) | Budget-conscious devs |
| GPT-Powered API | Usage-based | Medium | High (Nuanced) | Professional analysts |
| n8n / No-Code | Subscription | Low | Variable | Non-technical users |

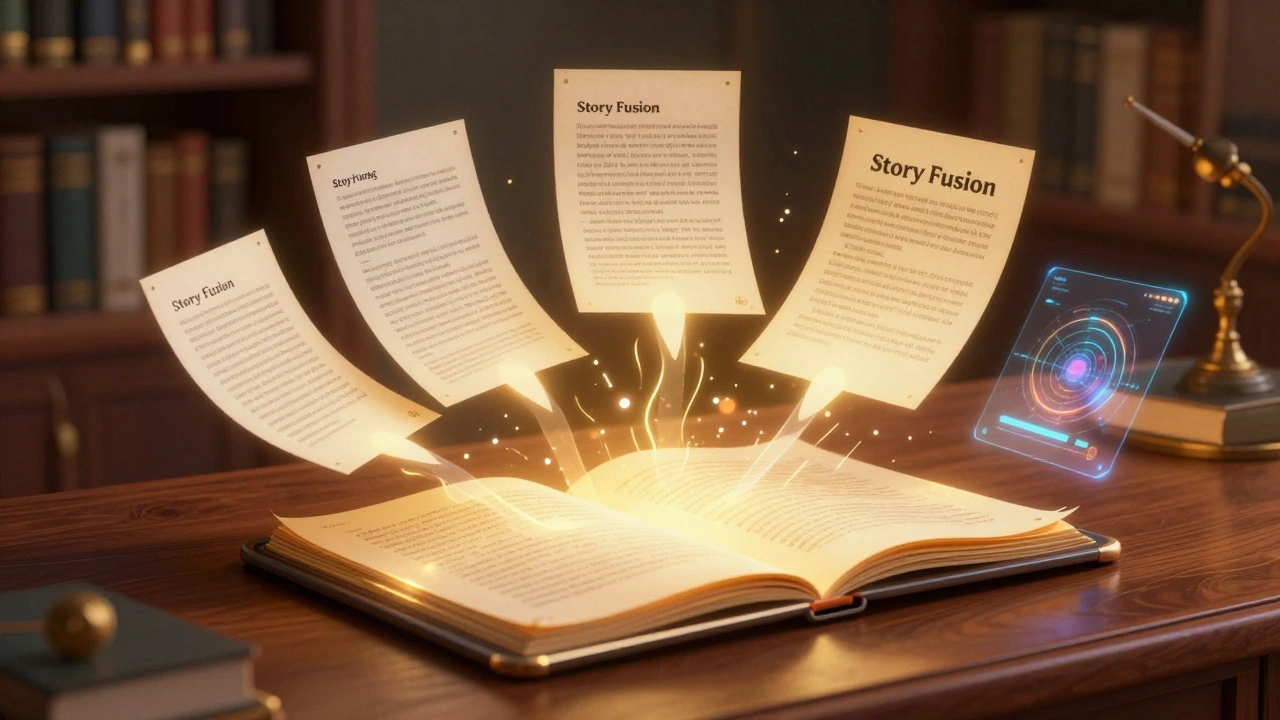

Mastering the Art of Story Fusion

The most advanced part of this process is "multi-source story fusion." Simple aggregators just list links. Story fusion, however, treats multiple articles as a single data point. The AI looks at a cluster of five articles and asks: "What are the common facts? Where do they disagree? What is the most important takeaway?"

This approach is vital for breaking news. When a major event happens, the first report is often incomplete. The second report adds a detail; the third corrects a mistake. A fused summary incorporates all these updates into one coherent paragraph. Instead of reading five versions of the same story, you get one consolidated version. Making this work depends on prompt engineering: you have to instruct the AI explicitly to "identify contradictions" or "synthesize multiple viewpoints" so the output isn't just a generic summary.
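One way to apply that advice is sketched below under stated assumptions: the prompt asks for fixed headings that mirror the three questions above, and a small check flags responses that skip them (for example, to trigger a retry). The heading names and the retry idea are illustrative choices, not a standard.

```python
SECTIONS = ("Common facts", "Disagreements", "Key takeaway")

FUSION_PROMPT = (
    "You will receive several reports about the same event, numbered [1], [2], ...\n"
    "Identify contradictions and synthesize multiple viewpoints. "
    "Answer under exactly these headings:\n"
    + "\n".join(f"{name}:" for name in SECTIONS)
    + "\nCite source numbers for every claim. If the sources agree, write 'None' "
    "under Disagreements."
)


def missing_sections(model_output: str) -> list[str]:
    """Return required headings absent from a model response (empty list means OK)."""
    return [name for name in SECTIONS if f"{name}:" not in model_output]
```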

Scheduling for Maximum Impact

A briefing is only useful if it arrives when you're actually ready to read it. Most users set up a tiered delivery system. A standard daily digest might fire at 7:00 AM, Monday through Friday, for leisurely morning reading. Then, you might have a weekly roundup every Sunday evening to catch the bigger trends you missed during the week.
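If the pipeline runs as one long-lived Python process rather than separate cron entries, the tiered schedule can be expressed as a check against the clock. This sketch assumes the loop calls it once per minute and takes "Sunday evening" to mean 19:00; with cron, the equivalent entries would be `0 7 * * 1-5` and `0 19 * * 0`.

```python
from datetime import datetime


def due_digests(now: datetime) -> list[str]:
    """Return which digests should be sent at this minute (Monday=0 ... Sunday=6)."""
    due = []
    if now.weekday() < 5 and (now.hour, now.minute) == (7, 0):
        due.append("daily")  # weekday morning digest
    if now.weekday() == 6 and (now.hour, now.minute) == (19, 0):
        due.append("weekly")  # Sunday-evening roundup (19:00 is an assumption)
    return due
```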

For high-stakes information, some setups add "urgency-based routing." While the daily digest goes to Telegram, a critical breaking-news alert, identified by the AI as having a high impact score, might be routed to a different channel or even a different platform like WhatsApp for an immediate notification. This ensures you don't miss a market-shifting piece of news just because it's tucked away in a 7:00 AM digest.
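Urgency-based routing reduces to a threshold on whatever impact score the model returns. In this sketch, the 0-to-1 score, the 0.8 cut-off, and the channel labels are all hypothetical. Actually delivering to WhatsApp or a second Telegram chat would require a separate sender for each label.

```python
from dataclasses import dataclass

ALERT_THRESHOLD = 0.8  # hypothetical cut-off on a 0-1 impact score from the model


@dataclass
class Story:
    title: str
    impact_score: float  # assumed to be produced by the Process stage


def route(story: Story) -> str:
    """Send high-impact stories to an instant channel, everything else to the digest."""
    return "instant_alert" if story.impact_score >= ALERT_THRESHOLD else "daily_digest"


def split_by_urgency(stories: list[Story]) -> dict[str, list[Story]]:
    routed = {"instant_alert": [], "daily_digest": []}
    for story in stories:
        routed[route(story)].append(story)
    return routed
```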

Common Pitfalls and How to Avoid Them

It sounds perfect, but these systems have a few weak points. The biggest is "RSS fragility." Some websites change their feed structure or shut down their RSS entirely, which can break your entire pipeline. It's smart to have a monitoring system that alerts you if a feed stops returning data for more than 24 hours.
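A feed monitor can be as simple as recording when each feed last returned at least one item and flagging any feed that has been silent for more than 24 hours. The `last_success` mapping, and wherever you choose to store it, are assumptions. You could send the flagged URLs to yourself with the same Telegram bot.

```python
from datetime import datetime, timedelta

MAX_SILENCE = timedelta(hours=24)


def stale_feeds(last_success: dict[str, datetime], now: datetime) -> list[str]:
    """Return feed URLs whose last non-empty fetch is older than MAX_SILENCE."""
    return sorted(url for url, seen in last_success.items() if now - seen > MAX_SILENCE)
```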

Another issue is "misclustering." Sometimes an AI sees two articles mentioning "Apple" and assumes they are about the same thing, even if one is about the iPhone and the other is about agricultural exports from Washington state. To fix this, you can implement a hybrid model, similar to the OpenClaw Newsroom approach, in which a human editor spends five minutes reviewing the clusters before they are published. This 7-step hybrid process combines the speed of AI with the accuracy of human judgment.
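A cheap guard can decide which clusters deserve that human review. The heuristic below is an illustrative assumption, not part of the OpenClaw Newsroom process. It flags a cluster when its headlines share fewer than two content words, which catches the Apple example; the stopword list is deliberately tiny.

```python
import re

STOPWORDS = {"the", "a", "an", "of", "in", "on", "for", "to", "and", "from", "with", "by"}


def content_words(title: str) -> set[str]:
    """Lower-cased alphanumeric words, minus a few stopwords."""
    return {w for w in re.findall(r"[a-z0-9]+", title.lower()) if w not in STOPWORDS}


def needs_review(titles: list[str], min_shared: int = 2) -> bool:
    """Flag a cluster whose headlines share fewer than `min_shared` content words."""
    if len(titles) < 2:
        return False  # a single-article cluster cannot be misclustered
    shared = set.intersection(*(content_words(t) for t in titles))
    return len(shared) < min_shared
```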

Finally, be mindful of your API costs. If you are processing 20 feeds every 30 minutes using a heavy GPT-4 model, those pennies add up. Using a smaller, faster model for the initial filtering and only using the "smart" model for the final synthesis can save you a significant amount of money without sacrificing quality.
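Here is the arithmetic behind that advice, as a sketch. Polling 20 feeds every 30 minutes means 48 runs and 960 feed-level calls per day. The per-million-token prices below are placeholders, not real OpenAI pricing. The sketch also assumes one synthesis call per run, using the same token count as a filter call.

```python
MINUTES_PER_DAY = 24 * 60


def runs_per_day(interval_minutes: int) -> int:
    return MINUTES_PER_DAY // interval_minutes


def daily_cost(calls: int, tokens_per_call: int, usd_per_million: float) -> float:
    return calls * tokens_per_call * usd_per_million / 1_000_000


def tiered_daily_cost(feeds, interval_minutes, tokens_per_call, cheap_price, heavy_price):
    """Cheap model filters every feed on every run; heavy model writes one synthesis per run."""
    runs = runs_per_day(interval_minutes)
    filtering = daily_cost(feeds * runs, tokens_per_call, cheap_price)
    synthesis = daily_cost(runs, tokens_per_call, heavy_price)
    return filtering + synthesis


runs = runs_per_day(30)                               # 48 runs per day
all_heavy = daily_cost(20 * runs, 1_000, 10.0)        # placeholder heavy-model price
tiered = tiered_daily_cost(20, 30, 1_000, 0.5, 10.0)  # placeholder cheap/heavy prices
print(f"all heavy: {all_heavy:.2f} USD/day, tiered: {tiered:.2f} USD/day")
# prints "all heavy: 9.60 USD/day, tiered: 0.96 USD/day"
```

Plug in your provider's current rates and realistic token counts; the point is only that the heavy model's share shrinks from every call to one call per run.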

Do I need to be a coder to set this up?

Not necessarily. While a Python script is the most flexible way, tools like n8n allow you to build these workflows visually. There are also specialized bots like Junction Bot that handle the monitoring and summarization of Telegram channels directly, which requires almost zero technical setup.

How does the AI know which stories are related?

The AI uses a process called clustering. It analyzes the keywords, entities (like company names or people), and the overall semantic meaning of the headlines. If the core concepts overlap significantly, the AI groups them into a single cluster for summarization.
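Production systems typically compare embedding vectors and extracted entities. As a dependency-free stand-in, the sketch below clusters headlines by the cosine similarity of their word counts, greedily assigning each headline to the first cluster whose seed headline is similar enough. The 0.5 threshold is an assumption you would tune on your own feeds, and this bag-of-words approach will miss paraphrases that an embedding model would catch.

```python
import math
import re
from collections import Counter


def vectorize(text: str) -> Counter:
    """Bag-of-words vector: counts of lower-cased alphanumeric tokens."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[w] * b[w] for w in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def cluster(headlines: list[str], threshold: float = 0.5) -> list[list[str]]:
    """Greedy single pass: join the first cluster whose seed is similar enough."""
    clusters = []  # each cluster is a list of (headline, vector); index 0 is the seed
    for headline in headlines:
        vec = vectorize(headline)
        for group in clusters:
            if cosine(vec, group[0][1]) >= threshold:
                group.append((headline, vec))
                break
        else:
            clusters.append([(headline, vec)])
    return [[h for h, _ in group] for group in clusters]
```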

Will this work for private Telegram channels?

Standard RSS-based systems only work with public feeds. However, specialized tools like Junction Bot can monitor both public and private channels, provided the bot has been granted the necessary access permissions by the channel administrator.

Is there a way to keep the cost at $0?

Yes. By using Python, free RSS feeds, and a local cron job for scheduling, you can run a news aggregator for free. The only "cost" is the time it takes to write the script. You only start paying when you introduce external AI APIs like OpenAI for advanced summarization.

How often should the system run to avoid missing news?

For most people, a 30-minute interval is plenty. This ensures the data is fresh without overloading your API limits or spamming your Telegram channel. If you are tracking high-volatility markets, you might move to a 15-minute window.
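Polling every 30 minutes only avoids spamming the channel if each run posts just what it hasn't seen before. Because each cron run starts a fresh process, the set of seen IDs has to persist between runs. The sketch below stores it in a JSON file at a hypothetical path and prefers an item's `guid` when the Pull stage recorded one.

```python
import json
from pathlib import Path

SEEN_PATH = Path("seen_ids.json")  # hypothetical location for the state file


def load_seen(path: Path = SEEN_PATH) -> set[str]:
    return set(json.loads(path.read_text())) if path.exists() else set()


def save_seen(seen: set[str], path: Path = SEEN_PATH) -> None:
    path.write_text(json.dumps(sorted(seen)))


def new_items(items: list[dict], seen: set[str]) -> list[dict]:
    """Return items not posted before and record them in `seen`."""
    fresh = []
    for item in items:
        key = item.get("guid") or item["link"]
        if key not in seen:
            seen.add(key)
            fresh.append(item)
    return fresh
```

A run would then look like `seen = load_seen()`, `fresh = new_items(items, seen)`, post `fresh`, and finally `save_seen(seen)`.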

Next Steps for Implementation

If you're ready to start, begin by listing your 5-10 most trusted RSS sources. Create a bot through @BotFather on Telegram to get your API token, then look up the chat ID you want to post to (for example, by messaging the bot and calling the Bot API's `getUpdates` method). If you're a developer, start with a basic Python script using the `feedparser` library. If you're not a coder, try setting up a free account on n8n and look for "AI news monitoring" templates. Start with a simple daily digest, and once you're comfortable, add advanced clustering and urgency-based routing to fine-tune your information flow.
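@BotFather gives you the token, but the chat ID comes from Telegram itself. One common approach, sketched here with the standard library, is to send your bot a message (or post in a channel where it is an administrator), then call the Bot API's `getUpdates` method and read the `chat.id` values. This assumes no webhook is configured, since `getUpdates` doesn't work while one is set.

```python
import json
import urllib.request


def get_updates(token: str) -> dict:
    """Fetch pending updates for the bot (network access required)."""
    url = f"https://api.telegram.org/bot{token}/getUpdates"
    with urllib.request.urlopen(url, timeout=10) as resp:
        return json.load(resp)


def chat_ids(updates: dict) -> set[int]:
    """Collect chat IDs from direct/group messages and channel posts."""
    ids = set()
    for update in updates.get("result", []):
        for kind in ("message", "channel_post"):
            if kind in update:
                ids.add(update[kind]["chat"]["id"])
    return ids
```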