Telegram boasts over 800 million users worldwide, and for many, it’s the go-to app for private conversations, activist networks, and niche communities. But behind its sleek interface and strong encryption promises lies a growing crisis: how do you keep a platform open to everyone while stopping criminals, extremists, and scammers from turning it into a lawless zone?

Telegram’s Design Makes Moderation Nearly Impossible

Unlike Facebook or X, Telegram doesn’t scan your private messages. That’s by design. The app uses end-to-end encryption only in Secret Chats, which most users never enable. Regular chats are encrypted between your device and Telegram’s servers, but Telegram holds the keys. That means, technically, they could read your messages. But they say they don’t. And even if a government tried to force them to, their server network is spread across multiple countries, making legal requests hard to enforce.

This architecture was built for freedom. It’s why dissidents in Iran and Russia still use Telegram to organize. But it’s also why drug dealers, terrorist recruiters, and scam artists thrive. A single public channel with 200,000 members can spread child abuse material or instructions for building bombs in minutes. And once that content has gone viral, it’s nearly impossible to fully delete. Even when Telegram removes a channel, it often reappears under a new name within hours.

The Numbers Don’t Lie

In 2023, Telegram removed 9.2 million channels for illegal content, a 47% jump from the year before. That sounds like action. But consider this: they have only 120 full-time staff handling safety for 800 million users. That’s one moderator for every 6.67 million people. Meta, by comparison, spends billions and employs one moderator per 25,000 users.

Europol found that 73% of terrorist communications they tracked in 2022 happened on Telegram. The platform blocked over 2.1 million terror-related channels in 2023 alone. Yet researchers say 68% of those banned channels come back within three days. It’s a game of whack-a-mole, and Telegram is playing with one hand tied behind its back.

What’s Being Done, and What’s Not

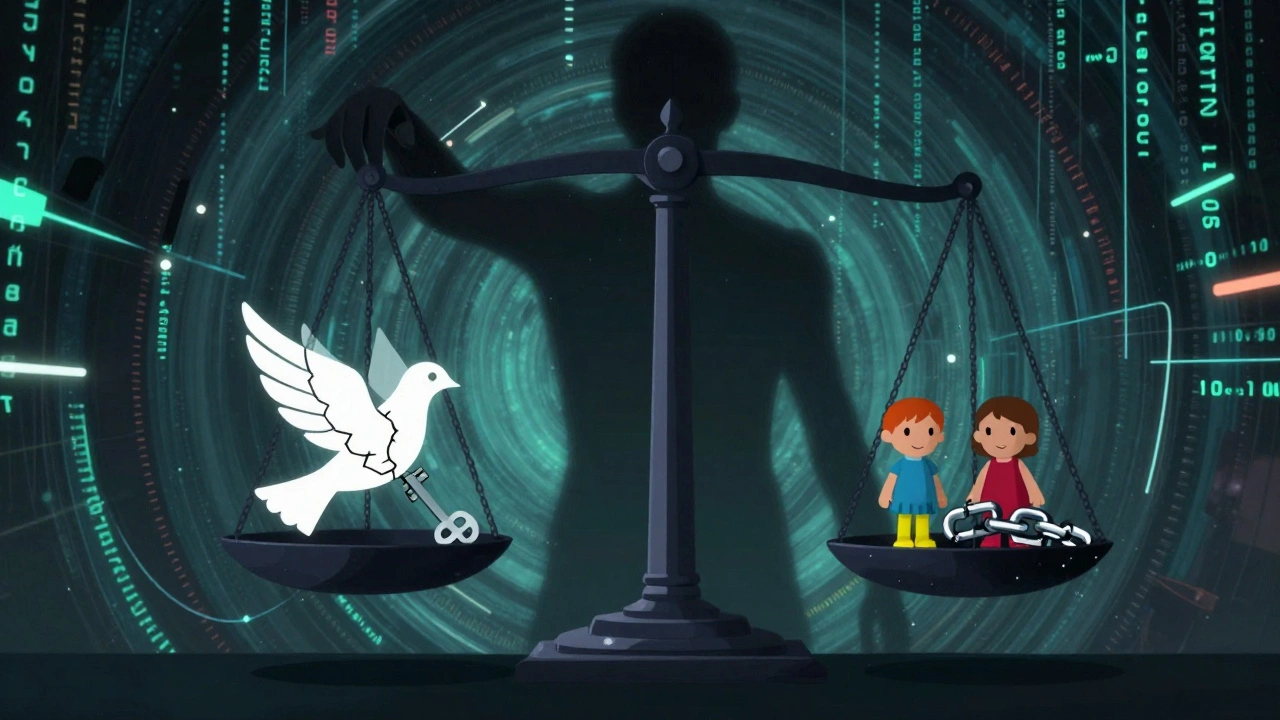

Telegram has made some changes. Since 2021, it has scanned public channels for known child abuse images using a database from the Internet Watch Foundation. In September 2024, it automatically removed 1.2 million extremist channels using AI. It also launched a “Safety Check” feature that lets users see if a channel has been verified by community reports.

But here’s the catch: none of this applies to private chats. Not group DMs. Not encrypted conversations. Not even Secret Chats. If you’re in a private group sharing illegal content, Telegram won’t see it. And they won’t try. Their stance is clear: if they build a backdoor for one government, authoritarian regimes will use it to silence journalists.

That hands-off stance is the backdrop to Pavel Durov’s arrest in France in August 2024. Authorities didn’t accuse him of doing anything illegal himself. They accused Telegram of enabling it. And they weren’t alone. The EU’s Digital Services Act now requires platforms with over 45 million users in Europe to act against illegal content or pay up to 6% of their global revenue in fines. Telegram crossed that threshold in early 2024.
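For readers curious how scanning for “known” images works in principle, here is a minimal sketch. It assumes the simplest possible form of hash matching: each upload is hashed and looked up in a list of hashes of already-identified files. The names `KNOWN_BAD_HASHES`, `is_known_match`, and `scan_channel` are made up for illustration, and exact SHA-256 matching is a simplification. Production systems generally rely on perceptual hashes that survive resizing and re-encoding, and nothing here describes Telegram’s actual pipeline.

```python
import hashlib


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of a file's bytes as a hex string."""
    return hashlib.sha256(data).hexdigest()


# Hypothetical stand-in for a vetted hash list supplied by an outside body.
# Only hashes are shared, never the files themselves.
KNOWN_BAD_HASHES = {sha256_hex(b"placeholder-flagged-file")}


def is_known_match(file_bytes: bytes, blocklist: set = KNOWN_BAD_HASHES) -> bool:
    """True if this exact file already appears on the blocklist."""
    return sha256_hex(file_bytes) in blocklist


def scan_channel(uploads: dict) -> list:
    """Return the names of uploads (name -> bytes) that match the blocklist."""
    return [name for name, data in uploads.items() if is_known_match(data)]


if __name__ == "__main__":
    uploads = {
        "a.jpg": b"placeholder-flagged-file",
        "b.jpg": b"holiday photo",
    }
    print(scan_channel(uploads))
```

The sketch also shows the limits of the approach: it only catches files someone has already identified, and with an exact hash, changing a single byte is enough to slip past. It also requires the platform to see the file in the first place, which is why it cannot reach end-to-end encrypted chats.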

Users Are Split, and So Are Experts

On Reddit, users praise Telegram for protecting their privacy. One Iranian activist wrote: “I’ve used Telegram for five years to organize protests without revealing my number. This saved my life.” That’s real. That’s vital. But on r/Scams, users report a 300% spike in crypto fraud since 2022. Fake investment channels promise 500% returns. They vanish with your money. And because Telegram doesn’t verify accounts, you can’t tell if the person running the channel is real.

Cybersecurity experts are torn. Some call Telegram’s model heroic. Others call it reckless. Alex Chen, a security engineer, put it bluntly: “I respect their tech. But letting 200k-member groups spread CSAM in minutes? That’s not freedom. That’s negligence.”

Regulators Are Closing In

The U.S. is pushing the EARN IT Act, which could strip legal protections from platforms that don’t scan private messages. If passed, Telegram would have to choose: weaken encryption or lose immunity from lawsuits. Telegram’s response? A firm no. Durov posted in August 2024: “Compromising encryption for any reason creates vulnerabilities that authoritarian regimes will exploit to target journalists and activists.” That’s a powerful argument. But it ignores a grim reality: the same encryption protecting dissidents is also protecting child predators. And the people most hurt by Telegram’s hands-off policy aren’t the activists. They’re the victims.

What Can You Do?

If you use Telegram, here’s what matters:

- Use Secret Chats for anything sensitive. They’re end-to-end encrypted, and you can set messages to self-destruct. But remember: they’re not the default.

- Report everything. If you see a channel promoting violence, scams, or abuse, report it. Telegram’s system works, but only if users use it.

- Don’t trust anonymous channels. If someone offers “guaranteed” crypto returns or asks for your private key, it’s a scam. No legitimate service will ever ask for that.

- Check Safety Ratings. New channels have a “Safety Check” badge. If it’s missing or low, tread carefully.

The Future Is Unclear

Telegram’s user growth is still strong, up 22% year over year in 2024. People want privacy. They want control. They don’t want ads. They don’t want Meta watching them. But governments aren’t backing down. The EU is preparing stricter rules. The U.S. is considering laws that could force Telegram to change its core architecture. And if Durov’s arrest is any sign, the next step could be a global crackdown. Telegram’s model might not survive the next five years. Or it might. But one thing is certain: the tension between freedom and safety isn’t going away. And no app, no matter how well-designed, can solve it alone.

Is Telegram really encrypted?

Telegram uses end-to-end encryption only in “Secret Chats,” which must be manually enabled. Regular chats are encrypted between your device and Telegram’s servers, but Telegram holds the encryption keys, meaning they could technically access your messages. This is different from apps like Signal, which encrypt all messages end-to-end by default.
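The difference comes down to who holds the key. The toy model below illustrates that point and nothing more. It uses a throwaway XOR cipher rather than Telegram’s real protocol, and `Server`, `send_cloud`, and `send_secret` are hypothetical names invented for this sketch.

```python
import secrets
from typing import Optional


def xor_bytes(data: bytes, key: bytes) -> bytes:
    """Toy cipher: XOR each byte with a random key of the same length."""
    if len(key) != len(data):
        raise ValueError("key must be the same length as the data")
    return bytes(d ^ k for d, k in zip(data, key))


class Server:
    """Hypothetical server: sees ciphertext, plus any keys it is given."""

    def __init__(self) -> None:
        self.ciphertexts = {}  # chat_id -> encrypted bytes the server handled
        self.keys = {}         # chat_id -> key, only if the server holds one

    def try_read(self, chat_id: str) -> Optional[bytes]:
        """Decrypt a chat if, and only if, the server holds its key."""
        key = self.keys.get(chat_id)
        if key is None:
            return None
        return xor_bytes(self.ciphertexts[chat_id], key)


def send_cloud(server: Server, chat_id: str, message: bytes) -> None:
    """Regular-chat style: the server keeps both ciphertext and key."""
    key = secrets.token_bytes(len(message))
    server.keys[chat_id] = key
    server.ciphertexts[chat_id] = xor_bytes(message, key)


def send_secret(server: Server, chat_id: str, message: bytes) -> bytes:
    """Secret-chat style: the key stays with the two devices."""
    device_key = secrets.token_bytes(len(message))
    server.ciphertexts[chat_id] = xor_bytes(message, device_key)
    return device_key  # known to sender and recipient, never to the server


if __name__ == "__main__":
    server = Server()
    send_cloud(server, "regular", b"meet at noon")
    device_key = send_secret(server, "secret", b"meet at noon")
    print(server.try_read("regular"))  # the server can recover the plaintext
    print(server.try_read("secret"))   # the server has no key for this chat
    print(xor_bytes(server.ciphertexts["secret"], device_key))  # recipient can
```

In the regular-chat case the server can always reconstruct the message, whether or not it chooses to. In the Secret Chat case it cannot, no matter who asks.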

Why does Telegram allow illegal content to spread?

Telegram doesn’t actively scan private messages or most group chats due to its privacy-first design. It relies on user reports and AI scanning for known illegal content in public channels. But with over 800 million users, a small safety team, and servers spread across multiple countries, moderation is extremely limited. The platform prioritizes resisting government censorship over preventing abuse, even when that means letting harmful content stay online longer.
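To put that scale in concrete terms, the short calculation below reworks the figures quoted earlier in this article: 120 safety staff, 800 million users, Meta’s ratio of one moderator per 25,000 users, and the researchers’ 68% reappearance estimate applied to the 2.1 million blocked terror-related channels. The variable names are just labels for this sketch, and the results are only as reliable as those reported numbers.

```python
USERS = 800_000_000
TELEGRAM_SAFETY_STAFF = 120
META_USERS_PER_MODERATOR = 25_000
TERROR_CHANNELS_BLOCKED = 2_100_000
REAPPEARANCE_RATE = 0.68  # share of banned channels said to return within three days

# Users each Telegram safety employee is nominally responsible for (about 6.67 million).
users_per_staff = USERS / TELEGRAM_SAFETY_STAFF

# Headcount Telegram would need to match Meta's reported ratio (32,000).
staff_at_meta_ratio = USERS / META_USERS_PER_MODERATOR

# How many times thinner Telegram's coverage is than Meta's (about 267x).
coverage_gap = users_per_staff / META_USERS_PER_MODERATOR

# Blocked channels expected to resurface if the 68% estimate holds (about 1.43 million).
channels_returning = round(TERROR_CHANNELS_BLOCKED * REAPPEARANCE_RATE)

if __name__ == "__main__":
    print(f"Users per safety employee: {users_per_staff:,.0f}")
    print(f"Staff needed at Meta's ratio: {staff_at_meta_ratio:,.0f}")
    print(f"Coverage gap: {coverage_gap:,.0f}x")
    print(f"Channels likely to return: {channels_returning:,}")
```

On these numbers, matching Meta’s ratio would take roughly 32,000 people, and about two in three blocked channels would be back within days.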

Can law enforcement access Telegram messages?

Law enforcement can request data from Telegram, but the platform says it provides only limited information, such as IP addresses and phone numbers linked to accounts. Message content is a different matter. Regular chats are stored on Telegram’s servers under keys Telegram holds, so access is technically possible, but the company says it does not hand that content over, and its multi-country server setup makes court orders hard to enforce. Secret Chats are end-to-end encrypted, so Telegram cannot read them at all. This makes Telegram one of the hardest platforms for authorities to investigate.

What’s the difference between Telegram and Signal?

Signal encrypts all messages by default using end-to-end encryption and keeps very little metadata. Telegram offers optional Secret Chats but doesn’t encrypt regular chats that way. Signal has fewer features: no bots, no public channels, and much smaller groups. Telegram is more flexible but less secure by default. If privacy is your top priority, Signal is stronger. If you need large groups and channels, Telegram is more powerful, but riskier.

Is Telegram safe for activism?

Yes, but only if you use Secret Chats and avoid public channels. Telegram has been used successfully by activists in Iran, Russia, and Hong Kong because it hides phone numbers and resists government bans. However, public channels can be monitored, and user reports can lead to doxxing. Always assume anything you post publicly can be traced. For high-risk activism, combine Telegram with other tools like Tor and burner devices.

Why did Telegram’s CEO get arrested?

Pavel Durov was arrested in France in August 2024 under suspicion of failing to prevent illegal activities on Telegram, including drug trafficking, fraud, and the spread of child abuse material. French authorities argue that as CEO, he has legal responsibility for the platform’s moderation failures. Durov denies wrongdoing, claiming Telegram’s design makes full moderation impossible without sacrificing user privacy. His arrest marks a turning point in global efforts to hold messaging platforms accountable.

Should I stop using Telegram?

It depends on how you use it. If you stick to private conversations using Secret Chats and avoid suspicious public channels, Telegram is still one of the most secure tools for privacy-conscious users. But if you join large public groups, follow anonymous influencers, or use it for financial transactions, you’re at higher risk of scams and exposure to illegal content. Use it wisely, and always assume public content is not safe.