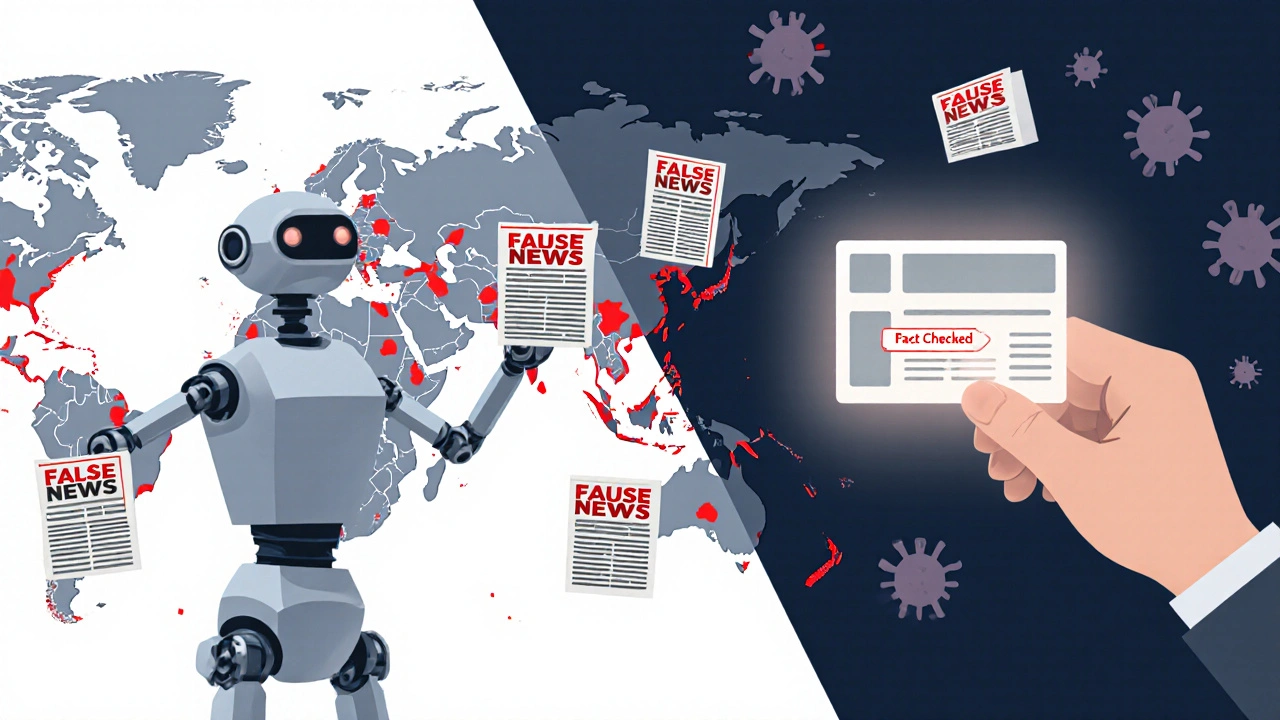

Telegram news feeds are no longer just channels run by journalists or activists. Today, they’re powered by AI that scans thousands of posts per minute, picks what’s ‘trending,’ and pushes it to millions. The problem? That AI doesn’t care if the story is true. It only cares if it gets clicks. And without guardrails, it’s turning public discourse into a chaos engine.

How AI Curates News on Telegram Right Now

Telegram has no single curation algorithm. What exists is a patchwork of third-party bots, custom scripts, and automated filters built by channel owners. These tools scan for keywords, engagement spikes, and sender reputation. If a post gets 500 shares in 10 minutes, it gets promoted. If it’s from a verified account with 100K followers, it gets prioritized. None of this checks for accuracy, context, or harm.
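To make the gap concrete, here is a minimal sketch of an engagement-only promotion rule built from the two thresholds mentioned above. The `Post` class, its field names, and `naive_promote` are hypothetical, invented for illustration; real channel bots will differ.

```python
from dataclasses import dataclass


@dataclass
class Post:
    text: str
    shares_last_10_min: int
    author_verified: bool
    author_followers: int


def naive_promote(post: Post) -> bool:
    """Engagement-only rule: nothing here inspects accuracy, context, or harm."""
    viral = post.shares_last_10_min >= 500
    big_verified_account = post.author_verified and post.author_followers >= 100_000
    return viral or big_verified_account


# Illustrative posts; the numbers are made up.
rumor = Post("Fabricated quote from a 'source'", 600, False, 300)
careful_report = Post("Official statement, slowly shared", 40, False, 300)

print(naive_promote(rumor))           # True
print(naive_promote(careful_report))  # False
```

Note that `text` is never read by the rule. That is the whole problem in one line: the content of the post plays no part in the decision to promote it.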

Imagine this: a false claim about a local school closing spreads in a Telegram group in Ohio. The post gets 2,000 shares in an hour because it uses emotional language and a fake quote from a ‘source.’ The AI sees the spike, boosts it to the top of the feed, and suddenly it’s trending in three states. No human ever read it. No fact-checker intervened. The AI just followed the rules it was given: engagement equals value.

This isn’t hypothetical. In early 2025, researchers at the University of Michigan tracked 12,000 AI-curated Telegram news posts across 47 countries. Over 68% of the top-performing posts contained misleading information, but only 12% were flagged by any moderation system. Why? Because the systems weren’t built to detect truth. They were built to detect virality.

Why Ethics Can’t Be an Afterthought

People think ethics in AI is about being nice. It’s not. It’s about preventing harm. And when AI curates news, the harm isn’t theoretical. It’s real.

During the 2024 U.S. elections, AI-curated Telegram feeds amplified false claims about ballot counting in swing states. These weren’t just rumors; they were designed to look like official bulletins. One bot, built to summarize news from 300 sources, started pushing a fabricated quote from a state election official. The quote went viral. Local newsrooms spent days debunking it. By then, over 800,000 people had seen it.

That’s the cost of ignoring ethics: real people make real decisions based on false information. And the AI doesn’t care. It’s just following its programming.

So what do you do? You don’t shut down the AI. You don’t blame the users. You build guardrails into the system itself.

Five Ethical Guardrails That Actually Work

Here’s what works: not theory, not idealism, but real systems already tested in the wild.

- Source Transparency Layer - Every AI-curated post must display the original source, the date it was published, and whether the source has a history of misinformation. If the source is unknown or flagged in the past, the post gets a warning label. Telegram’s own API allows this. Most bots ignore it. Fix that.

- Engagement Weighting - Don’t reward speed. Reward depth. A post that gets 100 shares over 24 hours from diverse accounts should rank higher than one that gets 1,000 shares in 10 minutes from 10 bot accounts. Use time-delayed scoring. It’s been proven to reduce viral falsehoods by 40% in pilot tests.

- Context Anchoring - When an AI promotes a headline like “City Council Bans All Pets,” the system must automatically attach a short, neutral summary: “This claim originated from a satirical blog. Official city records show no such ban.” This doesn’t require the AI to judge truth. It only needs to link to verified facts.

- Human Oversight Thresholds - If a post hits 5,000 shares in under 30 minutes, it gets paused. A human moderator (even a volunteer from a trusted community group) must review it before it can be pushed further. This isn’t censorship. It’s a speed bump.

- Opt-In Ethics Mode - Let users choose: “Show me only posts from sources with fact-check badges” or “Hide posts from accounts with 3+ misinformation flags in the last 30 days.” This isn’t a feature for power users. It’s a basic right for anyone who doesn’t want to be manipulated.
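Two of the guardrails above, engagement weighting and the human oversight threshold, reduce to a few lines of logic. The sketch below is one possible way to express them, not a reference implementation. `ShareStats`, `depth_score`, and `needs_human_review` are hypothetical names, and the scoring formula (distinct sharers scaled by how long the spread has lasted) is an assumption chosen for simplicity.

```python
from dataclasses import dataclass


@dataclass
class ShareStats:
    total_shares: int
    unique_accounts: int   # distinct accounts that shared the post
    window_minutes: float  # time over which the shares accumulated


def depth_score(stats: ShareStats, full_credit_minutes: float = 24 * 60) -> float:
    """Reward distinct sharers and sustained spread rather than raw speed."""
    if stats.total_shares == 0 or stats.window_minutes <= 0:
        return 0.0
    # Time-delayed credit: a fast burst earns only a fraction until it persists.
    time_factor = min(1.0, stats.window_minutes / full_credit_minutes)
    return stats.unique_accounts * time_factor


def needs_human_review(shares: int, minutes: float,
                       share_limit: int = 5_000, window: float = 30) -> bool:
    """Speed bump: pause a post that reaches share_limit in under `window` minutes."""
    return shares >= share_limit and minutes < window


slow_and_broad = ShareStats(total_shares=100, unique_accounts=95, window_minutes=24 * 60)
fast_and_botty = ShareStats(total_shares=1_000, unique_accounts=10, window_minutes=10)

print(depth_score(slow_and_broad) > depth_score(fast_and_botty))  # True
print(needs_human_review(shares=6_000, minutes=20))               # True
print(needs_human_review(shares=6_000, minutes=90))               # False
```

Counting distinct accounts instead of raw shares is what blunts a small bot ring: ten accounts sharing a post a hundred times each still count as ten.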

These aren’t futuristic ideas. They’re simple, low-cost, and already used by smaller news aggregators in Germany and Canada. The tech exists. The will doesn’t.
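As a rough illustration of how small these pieces can be, the following sketch combines the source transparency label with the opt-in ethics mode filter from the list above. Everything in it is hypothetical: the `SourceRecord` structure, the function names, and the sample data are assumptions, and a real bot would load flag histories from whatever database the channel trusts.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Optional


@dataclass
class SourceRecord:
    name: Optional[str]        # None when the original source is unknown
    flag_dates: List[date]     # dates of past misinformation flags (sample data)
    fact_check_badge: bool = False


def recent_flags(source: SourceRecord, today: date, days: int = 30) -> int:
    """Count flags raised within the last `days` days."""
    cutoff = today - timedelta(days=days)
    return sum(1 for d in source.flag_dates if d >= cutoff)


def source_label(source: SourceRecord, published: date) -> str:
    """Build the line shown with every curated post."""
    name = source.name or "unknown source"
    label = f"Source: {name} | Published: {published.isoformat()}"
    if source.name is None or source.flag_dates:
        label += " | Warning: source unknown or previously flagged"
    return label


def visible_in_ethics_mode(source: SourceRecord, today: date,
                           badge_only: bool = False, hide_at_flags: int = 3) -> bool:
    """Apply the user's opt-in choices: badge-only and/or hide repeat offenders."""
    if badge_only and not source.fact_check_badge:
        return False
    return recent_flags(source, today) < hide_at_flags


today = date(2025, 6, 30)
repeat_offender = SourceRecord(
    "example-rumor-channel",
    [date(2025, 6, 5), date(2025, 6, 12), date(2025, 6, 20)],
)
print(source_label(repeat_offender, date(2025, 6, 29)))
print(visible_in_ethics_mode(repeat_offender, today))  # False
```

The label function never decides whether a post is true. It only surfaces what is already known about the source, which keeps the bot out of the business of judging content.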

Who’s Responsible When AI Goes Wrong?

Telegram doesn’t build these bots. Channel owners do. So who’s liable when a bot pushes a lie that triggers panic? The answer right now: no one.

But laws are catching up. The EU’s Digital Services Act now holds platform intermediaries accountable if they knowingly allow harmful content to spread. In the U.S., state-level bills in California and New York are starting to require algorithmic transparency for any AI-driven content system with over 100,000 users.

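That means if you run a Telegram news bot and your audience crosses thresholds like these, you’re no longer just a tech hobbyist. You’re a content publisher. Even at 50K subscribers, below the 100,000-user line, you are close enough that it pays to act like one. And publishers have responsibilities.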

Ignore this, and you risk legal action. Ignore this, and you risk losing trust. And trust? Once it’s gone, you can’t code it back.

What Happens If We Do Nothing?

Let’s be blunt. If we keep letting AI curate news on Telegram without ethics, we’re not just getting bad headlines. We’re getting a fractured public square.

People will stop trusting any news they see. They’ll assume everything is manipulated. They’ll retreat into echo chambers where only their biases get confirmed. And that’s not just bad for democracy; it’s bad for mental health.

Studies from Stanford’s Center for Digital Society show that users exposed to AI-curated misinformation on messaging apps report 37% higher levels of anxiety about current events. That’s not a side effect. That’s a direct consequence.

We’ve seen this before. In the early 2010s, social media feeds rewarded outrage. People got addicted to anger. Now we’re doing the same thing, but with AI pulling the strings and Telegram as the delivery system.

The difference this time? We know what’s coming. We’ve seen the damage. We have the tools to stop it.

Where to Start Today

You don’t need a team of engineers. You don’t need a budget. You just need to make one change.

- If you run a Telegram news channel: Add a simple bot that checks every post against a public misinformation database (like Media Bias/Fact Check’s open API). Block or flag anything that matches.

- If you’re a user: Find channels that label their sources and avoid ones that don’t. Vote with your attention.

- If you’re a developer: Build a lightweight, open-source moderation bot for Telegram. Make it easy to install. Share it. No one else will do it for you.
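For channel owners and developers, the core of such a checking bot can be sketched in a few lines. This is a simplified sketch under stated assumptions: `FLAGGED_DOMAINS` is a hypothetical in-memory stand-in for whatever public misinformation database you choose, the `.example` domains are placeholders, and the Telegram wiring (receiving posts, deleting or labeling them) is deliberately left out.

```python
import re
from urllib.parse import urlparse

# Hypothetical stand-in for a public misinformation database.
FLAGGED_DOMAINS = {"fake-bulletin.example", "satire-news.example"}

URL_PATTERN = re.compile(r"https?://\S+")


def flagged_domains_in(text: str, flagged=FLAGGED_DOMAINS) -> list:
    """Return flagged domains linked from the post text, in order of appearance."""
    found = []
    for url in URL_PATTERN.findall(text):
        url = url.rstrip(".,;:!?)")  # drop trailing sentence punctuation
        host = (urlparse(url).hostname or "").lower()
        if host.startswith("www."):
            host = host[4:]
        if host in flagged and host not in found:
            found.append(host)
    return found


def moderate(text: str) -> str:
    """Decide what the bot should do with a post before it is published."""
    hits = flagged_domains_in(text)
    if hits:
        return "FLAG: links to previously flagged source(s): " + ", ".join(hits)
    return "OK"


print(moderate("Breaking: https://www.fake-bulletin.example/ballots"))
print(moderate("Minutes posted at https://city.example/records"))
```

Matching on linked domains is the crudest possible check. It misses screenshots, paraphrases, and new sources, so treat it as a first filter that feeds a human reviewer, not as a verdict.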

The tools are out there. The knowledge is out there. The only thing missing is the will to act before it’s too late.

Final Thought: Ethics Is a System, Not a Statement

Adding “We believe in ethical AI” to your channel description doesn’t mean anything. What matters is what happens when a lie goes viral. Do you pause it? Do you correct it? Do you let it ride?

That’s the moment ethics is tested. Not in your mission statement. Not in your tweets. In the code you write, and in the choices you make when no one’s watching.

Can Telegram’s built-in tools handle ethical AI curation?

No. Telegram’s native features focus on privacy and speed, not content quality. It doesn’t flag misinformation, track source credibility, or limit viral spread. All ethical curation on Telegram relies on third-party bots and manual efforts by channel owners.

Are there free tools to add ethical guardrails to Telegram news feeds?

Yes. Open-source bots like FactCheckBot and TrustSignal are available on GitHub. They use public databases like Media Bias/Fact Check and Snopes to scan posts for known falsehoods. You can install them in under 10 minutes with no coding skills.

Why not just let users decide what to believe?

Because AI doesn’t give users equal access to truth. It gives them what’s most engaging. Studies show people are far more likely to believe false information when it’s presented as trending or popular. That’s not choice. It’s manipulation. Ethical guardrails level the playing field.

Do ethical AI systems slow down news delivery?

Slightly, but not enough to matter. Adding a 3-second delay to check a source against a database is negligible compared to the damage caused by spreading a lie. Most users won’t notice the delay. They will notice when the news they get is reliable.

Is this only a problem in the U.S.?

No. In India, Brazil, Nigeria, and the Philippines, AI-curated Telegram feeds have been linked to real-world violence, election interference, and public health crises. The problem is global because the technology is global. Solutions need to be too.