Telegram is a bit of a black box for news editors. Because so much of its activity happens in closed groups and private channels, disinformation often spreads like wildfire before a traditional newsroom even knows it exists. By the time a fake claim hits a mainstream feed, it has already been amplified by a thousand echo chambers. To fight this, editors are moving away from manual monitoring and toward fact-checking pipelines: automated systems that use artificial intelligence to flag suspect claims in near real time.

A modern pipeline isn't just a single bot; it is a sequence of technical steps that filters noise, identifies suspicious patterns, and flags claims for a human editor to verify. The goal isn't to let the AI decide what is true, but to handle the heavy lifting of scanning millions of messages so humans can focus on the actual journalism.

The Core Components of an AI Fact-Checking Pipeline

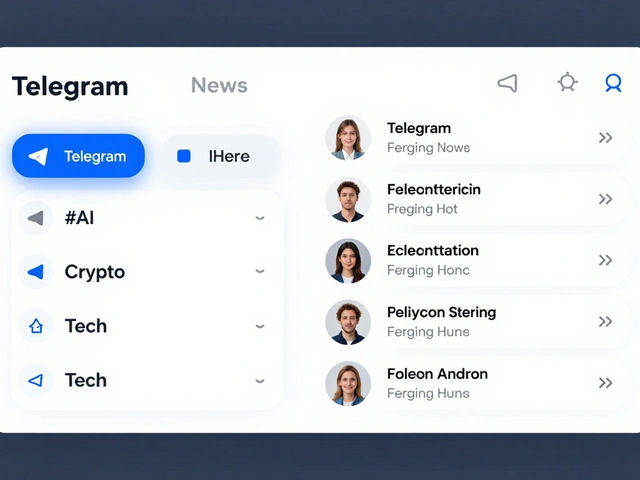

To build a system that actually works, you need more than a simple keyword alert. A professional pipeline usually consists of three distinct layers of detection. First, the system identifies suspicious channels based on behavior patterns. Second, it scans for specific messages that look like disinformation. Third, it groups these messages into "narratives," so editors can see if a single lie is being coordinated across fifty different groups simultaneously.
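The third layer, grouping messages into narratives, can be illustrated with a very small sketch. It assumes messages arrive as dictionaries with hypothetical `group` and `text` fields, and it uses simple word overlap (Jaccard similarity) with an arbitrary 0.5 threshold as a stand-in for whatever similarity model a production system would actually use.

```python
import re

def tokens(text):
    """Lowercase word set for a message (digits and punctuation are dropped)."""
    return set(re.findall(r"[a-z]+", text.lower()))

def jaccard(a, b):
    """Share of words two token sets have in common."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0

def group_into_narratives(messages, threshold=0.5):
    """Greedily attach each message to the first narrative it resembles."""
    narratives = []
    for msg in messages:
        msg_tokens = tokens(msg["text"])
        for narrative in narratives:
            if jaccard(msg_tokens, narrative["tokens"]) >= threshold:
                narrative["messages"].append(msg)
                narrative["groups"].add(msg["group"])
                break
        else:
            # No existing narrative is close enough, so start a new one.
            narratives.append(
                {"tokens": msg_tokens, "messages": [msg], "groups": {msg["group"]}}
            )
    # Narratives seen in the most groups come first.
    return sorted(narratives, key=lambda n: len(n["groups"]), reverse=True)

messages = [
    {"group": "g1", "text": "Ballots were burned in district 5"},
    {"group": "g2", "text": "BREAKING: ballots burned in district 5!"},
    {"group": "g3", "text": "They burned the ballots in district 5"},
    {"group": "g1", "text": "Road closed near the stadium"},
]
narratives = group_into_narratives(messages)
for narrative in narratives:
    print(len(narrative["groups"]), "groups:", narrative["messages"][0]["text"])
```

Sorting by the number of distinct groups is one plausible way to surface the narratives that look most coordinated; a real system would likely weigh timing and channel reputation as well.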

At the heart of these systems is Natural Language Processing (NLP), a field of AI that enables computers to understand, interpret, and manipulate human language. By using NLP, a pipeline can often distinguish between a sarcastic comment and a factual claim that requires verification.

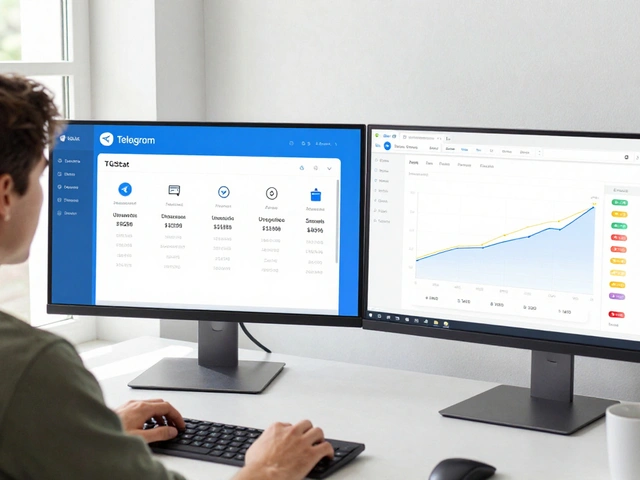

For editors, the practical value is a dashboard that transforms a chaotic stream of Telegram data into a prioritized list of alerts. Instead of scrolling through 500 channels, an editor sees a notification: "High probability of coordinated disinformation regarding election results detected in 12 monitored groups." This allows for a rapid response, potentially debunking a story before it goes viral on other platforms.

How the Technical Workflow Actually Works

If you peek under the hood, the process is a series of data transformations. It starts with raw data extraction from the Telegram API. This data is messy, so it goes through a cleaning phase where bot posts and system messages are stripped out. From there, the pipeline uses TF-IDF (term frequency-inverse document frequency), a statistical measure of how important a word is to a document in a collection. This helps the system identify the distinctive keywords that characterize a specific fake news story.
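To make the cleaning and weighting steps concrete, here is a minimal pure-Python sketch. It uses the textbook form of TF-IDF (term frequency times the log of inverse document frequency); libraries such as scikit-learn apply smoothed variants, so their numbers will differ. The `[bot]` and `[system]` prefixes are hypothetical markers, not something Telegram itself emits.

```python
import math
import re
from collections import Counter

# Hypothetical markers for automated or service messages; adapt to your own data.
SYSTEM_PREFIXES = ("[bot]", "[system]")

def clean(messages):
    """Drop bot/system messages and tokenize the rest into lowercase words."""
    kept = [m for m in messages if not m.lower().startswith(SYSTEM_PREFIXES)]
    return [re.findall(r"[a-z']+", m.lower()) for m in kept]

def tf_idf(docs):
    """Return one {term: weight} dict per tokenized document."""
    n_docs = len(docs)
    # Document frequency: in how many messages does each term appear?
    df = Counter(term for doc in docs for term in set(doc))
    weights = []
    for doc in docs:
        counts = Counter(doc)
        weights.append({
            term: (count / len(doc)) * math.log(n_docs / df[term])
            for term, count in counts.items()
        })
    return weights

messages = [
    "[bot] Welcome to the channel",
    "The election results were changed overnight",
    "The weather was nice overnight",
    "The election was held on Sunday",
]
docs = clean(messages)
scores = tf_idf(docs)
# Print the terms of the first remaining message, highest weight first.
for term, weight in sorted(scores[0].items(), key=lambda kv: kv[1], reverse=True):
    print(f"{term}: {weight:.3f}")
```

A word like "the", which appears in every message, gets a weight of zero, while words unique to one message score highest. That is the property that makes TF-IDF useful for picking out the vocabulary of a specific story.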

Once the text is cleaned and vectorized, the system generates "embeddings." Think of embeddings as mathematical maps: each message becomes a point, and messages with similar meanings land close together. If two messages say the same thing but use different words (e.g., "The vaccine is a hoax" vs "The injection is a lie"), the AI recognizes that they are conceptually close. This allows editors to track the evolution of a lie as it changes shape across different channels.
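The "closeness" of two embeddings is commonly measured with cosine similarity. The sketch below shows only that comparison step. The three-dimensional vectors are invented placeholders (real embeddings come from a trained model and have hundreds of dimensions), and the 0.9 threshold is an arbitrary assumption.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Placeholder vectors for illustration only; a real pipeline would obtain
# these from an embedding model.
toy_embeddings = {
    "The vaccine is a hoax": [0.9, 0.1, 0.3],
    "The injection is a lie": [0.8, 0.2, 0.4],
    "Match postponed due to rain": [0.1, 0.9, 0.0],
}

SIMILARITY_THRESHOLD = 0.9  # assumed cut-off, to be tuned on real data

def find_similar(query, embeddings, threshold=SIMILARITY_THRESHOLD):
    """Return every other message whose embedding is close to the query's."""
    query_vec = embeddings[query]
    return [
        text
        for text, vec in embeddings.items()
        if text != query and cosine_similarity(query_vec, vec) >= threshold
    ]

matches = find_similar("The vaccine is a hoax", toy_embeddings)
print(matches)
```

With these placeholder vectors, the two vaccine claims score well above the threshold and the unrelated sports message scores far below it, which is the behavior a claim-tracking system relies on.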

| Technology | Primary Function | Value for Editors |
|---|---|---|
| Embeddings | Semantic matching | Finds similar claims even with different wording |
| TF-IDF | Keyword weighting | Quickly identifies the core topic of a message |
| Classification Models | Labeling (True/False/Unknown) | Automates the first pass of content filtering |
| Real-time Dashboards | Visualization | Provides an overview of disinformation trends |

Real-World Implementations: From Bots to Enterprise Systems

There are different ways to deploy these pipelines depending on the size of the newsroom. Some organizations use lightweight tools like NaradaFakeBuster, a specialized Telegram bot that verifies claims against existing fact-check databases. This is a "pull" system where the user asks the bot to verify a specific phrase.

Larger organizations take a different approach. Newtral, for example, is a leading Spanish fact-checking organization that uses AI to combat disinformation. Organizations at this scale use more robust platforms like FactFlow AI, which is a "push" system. Instead of waiting for a query, FactFlow actively monitors the ecosystem and pushes alerts to editors based on customized priorities.

We are also seeing a shift toward multimodal AI. One example is Squash, an AI system developed by The Reporters' Lab that fact-checks spoken words in videos in real time. Since Telegram is heavy on video and voice notes, the ability to transcribe audio into text and match it against a database of known lies is becoming a critical requirement for any serious news pipeline.

Avoiding the AI Bias Trap

A major risk with automated pipelines is algorithmic bias. If an AI is trained only on one type of political speech or one specific language, it might over-flag certain groups while letting other lies slide. To prevent this, high-end pipelines implement a "human-in-the-loop" workflow.

In this model, the AI never publishes a "Fake" label on its own. Instead, it acts as a sophisticated filing clerk. It gathers the evidence, finds the original source of the claim, and presents it to a human editor. The editor then makes the final journalistic call. This ensures that the nuances of language and culture are respected, and the news organization maintains its editorial integrity.
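One way to enforce this rule in software is to make the verdict a field that only a named editor can fill in. The sketch below is an illustration under assumed names: `FlaggedClaim`, `record_verdict`, and `publishable` are hypothetical, as is the set of allowed verdicts.

```python
from dataclasses import dataclass, field
from typing import List, Optional

ALLOWED_VERDICTS = {"false", "true", "unverified"}  # assumed label set

@dataclass
class FlaggedClaim:
    text: str
    source_channel: str
    model_score: float                       # the model's suspicion score, 0 to 1
    evidence: List[str] = field(default_factory=list)
    verdict: Optional[str] = None            # filled in by a human editor only
    reviewed_by: Optional[str] = None

def record_verdict(claim, editor, verdict):
    """Attach a human decision; refuse anonymous or unknown verdicts."""
    if not editor:
        raise ValueError("A verdict needs a named human editor")
    if verdict not in ALLOWED_VERDICTS:
        raise ValueError(f"Unknown verdict: {verdict}")
    claim.verdict = verdict
    claim.reviewed_by = editor
    return claim

def publishable(claim):
    """The model score alone never makes a claim publishable."""
    return claim.verdict is not None and claim.reviewed_by is not None

claim = FlaggedClaim(
    text="Ballots were burned in district 5",
    source_channel="channel_a",
    model_score=0.97,
    evidence=["first seen in channel_a", "no matching wire report"],
)
print(publishable(claim))   # the AI has flagged it, but no editor has ruled
record_verdict(claim, editor="editor_1", verdict="false")
print(publishable(claim))
```

Note that `publishable` ignores `model_score` entirely. Even a very confident model flag stays in the review queue until an editor signs off.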

Furthermore, the challenge of language remains. English-language models benefit from very large training datasets, while smaller languages often lack the data needed to train accurate models. Editors working in non-English regions often have to spend more time manually "tuning" their models so that the AI understands the local slang and cultural references used in disinformation campaigns.

Practical Steps for Implementing Your Own Pipeline

If you are a news editor looking to integrate AI assistance, don't start by building a neural network from scratch. Start with the data. You need a clean stream of Telegram messages, which usually requires a dedicated bot or a scraping tool that adheres to the platform's terms of service.

Once you have the data, follow these steps to build a basic workflow:

- Define Your Watchlist: Identify the high-risk channels and groups that frequently seed disinformation.

- Set Up a Claim-Matching System: Use a tool or API that can compare new messages against a database of already debunked claims.

- Establish a Triage Process: Create a system where the AI flags a message as "High Confidence Fake" or "Suspicious" and routes it to a specific editor.

- Create a Feedback Loop: When an editor confirms a claim is fake, that data should be fed back into the AI to improve its future detection accuracy.
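The four steps above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not a production design: the channel names, the seed claim, and both thresholds are invented, and character-level matching with the standard library's `difflib` stands in for a real claim-matching API or embedding search.

```python
from difflib import SequenceMatcher

WATCHLIST = {"channel_a", "channel_b"}        # step 1: hypothetical channel IDs
debunked_claims = ["the vaccine is a hoax"]   # step 2: seed database of debunks
HIGH_CONFIDENCE = 0.85                        # assumed thresholds, tune on real data
SUSPICIOUS = 0.60

def normalize(text):
    """Lowercase and collapse whitespace so trivial edits do not hide a match."""
    return " ".join(text.lower().split())

def best_match_score(text, known_claims):
    """Highest similarity between the message and any debunked claim."""
    text = normalize(text)
    return max(
        (SequenceMatcher(None, text, claim).ratio() for claim in known_claims),
        default=0.0,
    )

def triage(message, known_claims):
    """Step 3: turn a match score into a routing label for editors."""
    if message["channel"] not in WATCHLIST:
        return "Ignored"
    score = best_match_score(message["text"], known_claims)
    if score >= HIGH_CONFIDENCE:
        return "High Confidence Fake"
    if score >= SUSPICIOUS:
        return "Suspicious"
    return "No Match"

def confirm_fake(text, known_claims):
    """Step 4: an editor's confirmation extends the claim database."""
    claim = normalize(text)
    if claim not in known_claims:
        known_claims.append(claim)

first = triage({"channel": "channel_a", "text": "The vaccine is a HOAX"}, debunked_claims)
new_text = "5G towers spread the virus"
before = triage({"channel": "channel_b", "text": new_text}, debunked_claims)
confirm_fake(new_text, debunked_claims)       # editor has verified it is false
after = triage({"channel": "channel_b", "text": new_text}, debunked_claims)
print(first, "|", before, "|", after)
```

The labels are routing hints for the newsroom, not published verdicts. The feedback step here only grows the lookup list; retraining an actual model on editor decisions is a larger task that this sketch does not attempt.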

The ultimate goal is to move from reactive journalism, where you debunk a lie after it has already caused damage, to proactive journalism, where you identify the spark of a disinformation campaign and extinguish it immediately.

Can AI completely replace human fact-checkers?

No. AI is excellent at pattern recognition and speed, but it lacks the critical thinking, ethical judgment, and cultural context that human journalists provide. The most effective pipelines use a "human-in-the-loop" approach where AI filters the noise and humans make the final editorial decision.

How does a pipeline handle encrypted or private Telegram groups?

AI pipelines can only analyze data they have access to. For private groups, organizations typically use "sock puppet" accounts or invited monitors to feed data into the pipeline. However, this must be balanced with ethical considerations and the platform's terms of service.

What is the difference between misinformation and disinformation in AI terms?

While the AI estimates the "falseness" of a claim, the distinction lies in the intent. Disinformation is a deliberate attempt to deceive. AI pipelines look for signs of this in coordinated behavior, such as the same message appearing in 20 different groups at the exact same second, which suggests a planned campaign rather than an accidental error.
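A simple version of that coordination check groups identical messages and looks for a burst across many groups inside a short time window. In the sketch below, the `group`, `timestamp` (Unix seconds), and `text` fields are assumed, and the example lowers the threshold to three groups to keep the data small.

```python
from collections import defaultdict

def find_coordinated(messages, window_seconds=1, min_groups=20):
    """Return texts posted in at least min_groups groups within window_seconds."""
    by_text = defaultdict(list)
    for msg in messages:
        # Normalize case and spacing so trivial variations count as the same text.
        key = " ".join(msg["text"].lower().split())
        by_text[key].append((msg["timestamp"], msg["group"]))

    flagged = {}
    for text, posts in by_text.items():
        posts.sort()
        start = 0
        for end in range(len(posts)):
            # Slide the window start forward until it spans at most window_seconds.
            while posts[end][0] - posts[start][0] > window_seconds:
                start += 1
            groups = {group for _, group in posts[start:end + 1]}
            if len(groups) >= min_groups:
                flagged[text] = sorted(groups)
                break
    return flagged

messages = [
    {"group": "g1", "timestamp": 1000, "text": "Polls closed early in the north"},
    {"group": "g2", "timestamp": 1000, "text": "polls closed early  in the north"},
    {"group": "g3", "timestamp": 1001, "text": "Polls closed early in the north"},
    {"group": "g1", "timestamp": 1000, "text": "Anyone seen the match?"},
    {"group": "g2", "timestamp": 5000, "text": "Anyone seen the match?"},
    {"group": "g3", "timestamp": 9000, "text": "Anyone seen the match?"},
]
coordinated = find_coordinated(messages, window_seconds=1, min_groups=3)
print(coordinated)
```

Only the first message is flagged: it reaches three groups within one second, while the second reaches the same three groups spread over hours. A burst like this is a signal of coordination, not proof of intent, so it still belongs in front of an editor.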

Is TF-IDF still relevant with the rise of Large Language Models (LLMs)?

Yes. While LLMs are better at understanding meaning, TF-IDF is computationally cheaper and faster for initial filtering and keyword-based grouping. Many pipelines use a hybrid approach: TF-IDF for the first wide-net sweep and LLMs for the deep analysis of a specific claim.

How do these pipelines deal with 'deepfake' audio or video on Telegram?

Advanced pipelines integrate specialized forensic tools that analyze the metadata and artifacts of a file. For audio, they use speech-to-text transcription to run the words through a text-based fact-checker, and for video, they look for visual inconsistencies or use reverse-image search on key frames.