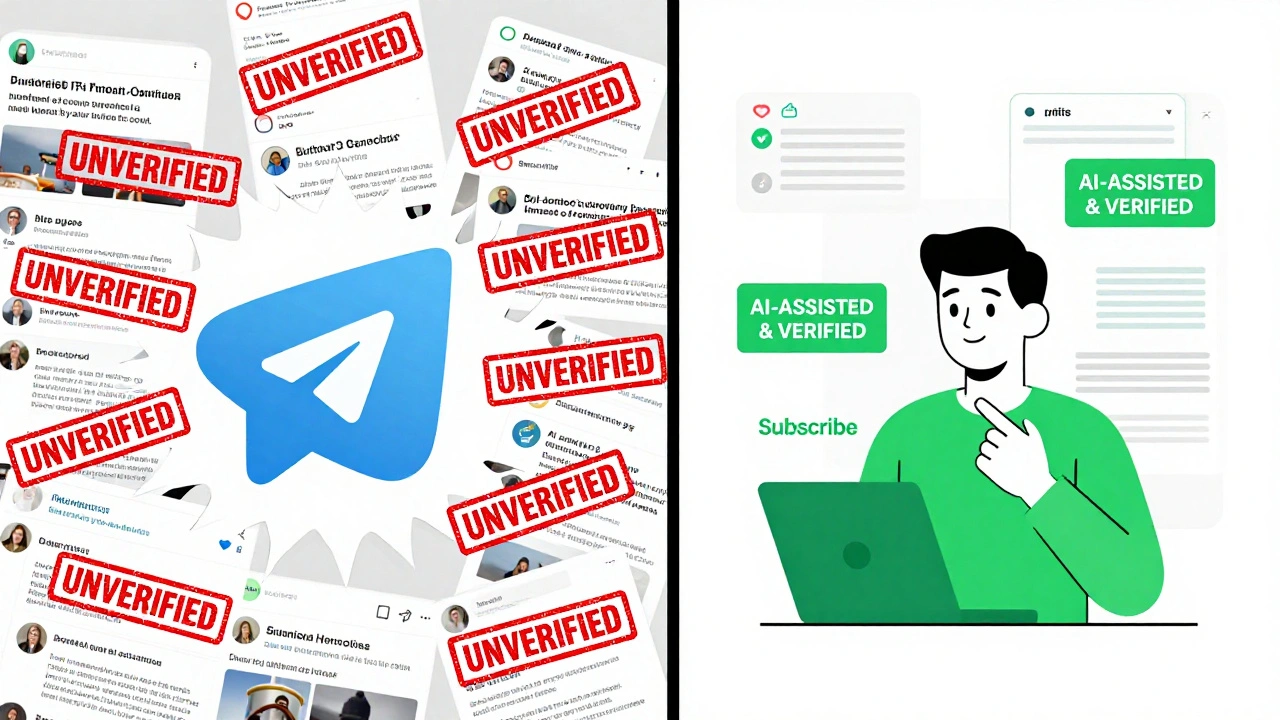

Telegram has become one of the most powerful news distribution platforms in the world, with over 800 million monthly users. But as more news channels turn to generative AI to write headlines, summarize events, and even draft full reports, a quiet crisis is unfolding. Generative AI is speeding up content creation - but at the cost of truth. Without clear rules, misinformation spreads faster than corrections can be made.

Why Telegram News Is Different

Unlike traditional newsrooms with editors, fact-checkers, and legal teams, most Telegram news channels are run by one or two people. Some are hobbyists. Others are small independent outlets with no budget. They use AI tools like ChatGPT or Claude to generate content because it’s fast, cheap, and easy. But here’s the problem: AI doesn’t know if a story is true. It doesn’t care if a quote is real or made up. It just stitches together what it’s seen before. In 2024, a false report claiming German troops had crossed into Ukraine was generated by AI and shared across 173 Telegram channels within hours. No one checked it. No one corrected it until a journalist in Kyiv spotted the error. By then, over 2.3 million people had seen it. That’s not an outlier - it’s becoming the norm.

What Ethical AI Looks Like in Practice

There’s no official Telegram policy on AI use. But the world’s top newsrooms - Reuters, BBC, The Guardian - have spent years building ethical AI guidelines. And their rules can and should be adopted by Telegram news channels, even the small ones. Here’s what ethical AI use looks like on the ground:

- Human review is mandatory. Every piece of AI-generated content must be checked by a person before it’s posted. Not just a quick scan - a real fact-check. Cross-reference names, dates, quotes with at least three trusted sources.

- Disclose AI use clearly. If AI helped write it, say so. Not buried in a footnote. Not in a tiny font. Put it in the first sentence: “This report was assisted by AI. Human editors verified all facts.”

- Never let AI write breaking news. AI is great for summaries, backgrounders, or repurposing content. But it should never be the first to break a major story. Too many hallucinations. Too many errors.

- Build a simple verification checklist. Even a three-step process helps: 1) AI generates draft. 2) Human checks sources. 3) Label as AI-assisted. Done.

These aren’t fancy rules. They’re basic journalism. But on Telegram, they’re revolutionary.
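
The three-step checklist above can be turned into a small pre-publish gate. What follows is a minimal sketch, not a prescribed workflow: the `Draft` class, its field names, and the `ready_to_post` and `finalize` helpers are hypothetical names invented for illustration. The three-source minimum and the label wording come from the guidelines above.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple

# Label wording taken from the disclosure guideline above.
AI_LABEL = "This report was assisted by AI. Human editors verified all facts."
# "At least three trusted sources" from the human-review guideline.
MIN_SOURCES = 3


@dataclass
class Draft:
    """Hypothetical container for a post that is waiting to be published."""
    text: str
    ai_assisted: bool
    checked_sources: List[str] = field(default_factory=list)
    reviewer: Optional[str] = None


def ready_to_post(draft: Draft) -> Tuple[bool, List[str]]:
    """Return (ok, problems) for the human-review step of the checklist."""
    problems: List[str] = []
    if draft.ai_assisted:
        if draft.reviewer is None:
            problems.append("no human reviewer recorded")
        if len(set(draft.checked_sources)) < MIN_SOURCES:
            problems.append(f"fewer than {MIN_SOURCES} distinct sources checked")
    return (not problems, problems)


def finalize(draft: Draft) -> str:
    """Refuse unreviewed AI drafts; put the disclosure label first otherwise."""
    ok, problems = ready_to_post(draft)
    if not ok:
        raise ValueError("; ".join(problems))
    if draft.ai_assisted:
        return f"{AI_LABEL}\n\n{draft.text}"
    return draft.text
```

The gate only records that a person did the checking. It cannot do the fact-check itself, which is the point of the human-review rule.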

The Cost of Not Following Guidelines

Ignoring ethics isn’t just risky - it’s self-destructive. A YouGov survey of 5,000 Telegram news readers in early 2024 found that 89% said they’d stop following a channel if they learned it used AI without disclosure. On Reddit’s r/Telegram, users are already unsubscribing in droves after discovering AI-generated content. One user wrote: “I trusted this channel for two years. Found out they used AI. Unsubscribed. No second chances.” Trust is fragile. It takes years to build. One AI-generated lie can erase it all.

And it’s not just about trust. The EU’s AI Act, whose first obligations began applying in February 2025, includes transparency rules that require certain AI-generated content to be clearly labeled, with those rules phasing in over the following years. Telegram channels operating in Europe could face fines or restrictions if they don’t comply. This isn’t a distant threat - the rollout has already begun.

How Small Channels Can Start Today

You don’t need a team of five to do this right. Here’s how to begin, even if you’re solo:

- Use free tools. Try FactCheck.org’s AI detector or an open-source AI text classifier from Hugging Face. They’re not perfect, but they flag suspicious content.

- Build a source library. Keep a list of 5-10 outlets you trust: BBC, AP, Reuters, local newspapers. Always cross-check against them.

- Use templates. Create a simple label: “AI-assisted report - verified by [Your Name].” Copy-paste it to the top of every post.

- Start slow. Use AI only for summaries, not breaking news. Save AI for rewriting press releases or turning long reports into digestible posts.

One Telegram news channel in Poland started using this system in January 2024. Their subscriber count grew 22% in three months. Why? Because people started saying, “I know this is real.”
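
The source library and label template from the list above fit in a few lines of code. This is a sketch under stated assumptions: `TRUSTED_SOURCES`, `confirmed_by`, and `label_post` are hypothetical names, and the outlets listed are only the examples named above. Deciding whether an outlet actually carried a claim is still a manual step.

```python
# Example source library; extend it with the local newspapers you rely on.
TRUSTED_SOURCES = {"BBC", "AP", "Reuters"}

# Label wording from the template suggestion above.
LABEL_TEMPLATE = "AI-assisted report - verified by {name}"


def confirmed_by(claim_sources, trusted=TRUSTED_SOURCES):
    """Return the trusted outlets among those you found carrying the claim."""
    return sorted(set(claim_sources) & set(trusted))


def label_post(text, reviewer_name):
    """Put the disclosure label at the top of the post."""
    return LABEL_TEMPLATE.format(name=reviewer_name) + "\n\n" + text


if __name__ == "__main__":
    found_in = ["Reuters", "SomeBlog", "BBC"]  # outlets checked by hand
    print(confirmed_by(found_in))
    print(label_post("Summary of today's press release.", "Your Name"))
```

Keeping the library as a plain set makes it easy to edit by hand, which matters more for a solo channel than anything more elaborate.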

What’s Coming Next

The pressure is mounting. The Journalism Trust Initiative is rolling out mandatory AI transparency scoring for all news channels by January 2025. Major platforms like WhatsApp Channels already require AI labeling. Signal bans AI-generated news entirely. Telegram hasn’t acted yet. But regulators are watching. The UN called Telegram “the highest-risk platform for AI-enabled information disorder.” The European Commission has issued formal guidance demanding disclosure and human accountability by Q3 2024. Channels that ignore this won’t just lose trust - they’ll lose access. Future updates could restrict AI-generated content from being shared or amplified on the platform.

It’s Not About Stopping AI. It’s About Using It Right.

AI isn’t the enemy. It’s a tool. Used well, it can help you reach more people, summarize complex stories, and free up time for real reporting. Used badly, it turns your channel into a misinformation engine. The difference isn’t technology. It’s intention. If you’re running a Telegram news channel, ask yourself: Do I want to be trusted? Or do I want to be fast? You can’t have both unless you do the work.

Is it legal to use AI to write news on Telegram?

Yes, it’s legal - but disclosure is increasingly required. The EU’s AI Act, which began applying in stages in 2025, and similar laws in other countries call for clear labeling of AI-generated content. Failing to comply could result in fines or platform restrictions. The law isn’t banning AI - it’s banning deception.

Can AI detect AI-generated news on Telegram?

Some tools can flag likely AI content, but they’re not foolproof. AI detectors have error rates between 15% and 30%, and they often mislabel human writing as AI. That’s why human verification matters more than any tool. Don’t rely on detection - rely on transparency.

What percentage of Telegram news channels use AI?

About 35% of active Telegram news channels use some form of AI assistance, according to Sensor Tower’s 2024 analysis. But only 12% disclose it. That means most users don’t know they’re reading AI-generated content.

Can AI make news faster without hurting quality?

Yes - but only with human oversight. AI can summarize reports, translate content, or reformat text for mobile. But it can’t verify facts, understand context, or judge bias. Use AI to handle the boring parts, not the important ones.

What should I do if I find AI-generated misinformation on Telegram?

Don’t just report it - correct it. Reply to the post with verified facts and link to trusted sources. If you run a channel, share the correction. Silence lets falsehoods spread. Truth needs to be louder than error.