Telegram has over 800 million users, but it doesn’t check if what you’re reading is true. No fact-checkers. No algorithm flags. No official system for verifying what gets posted. That means if someone sends a fake price drop for Bitcoin, a false political rumor, or a scammy investment pitch in a group chat, it spreads fast and often stays unchallenged. But here’s the thing: community peer review is already working in some places on Telegram. Not because the app asked for it, but because users built it themselves.

Why Telegram Needs Peer Review

Telegram doesn’t have built-in tools to fight misinformation. Unlike Facebook or Twitter, it doesn’t label false claims or show warnings. It doesn’t even flag a message that has been forwarded 100 times. The platform relies on users to report content, and even then the report options cover things like spam, violence, and illegal material. Accuracy? That’s on you.

This creates a gap. In crypto groups, political forums, or local news channels, false information spreads faster than corrections. A 2023 study from TokenMinds found that 78% of Web3 projects use Telegram as their main community hub. If even 10% of those groups are spreading bad info, that’s tens of millions of people getting misled. And Telegram’s end-to-end encryption only applies to Secret Chats. Regular group messages sit on Telegram’s servers, where the platform can read them, and anyone who gets into the group, human or bot, can read them too.

The problem isn’t just bad actors. It’s also the lack of structure. In a group of 50,000 people, who decides what’s true? One admin? A volunteer? No one? Without a system, truth becomes a popularity contest: the loudest voice wins.

How Peer Review Works on Telegram (Real Examples)

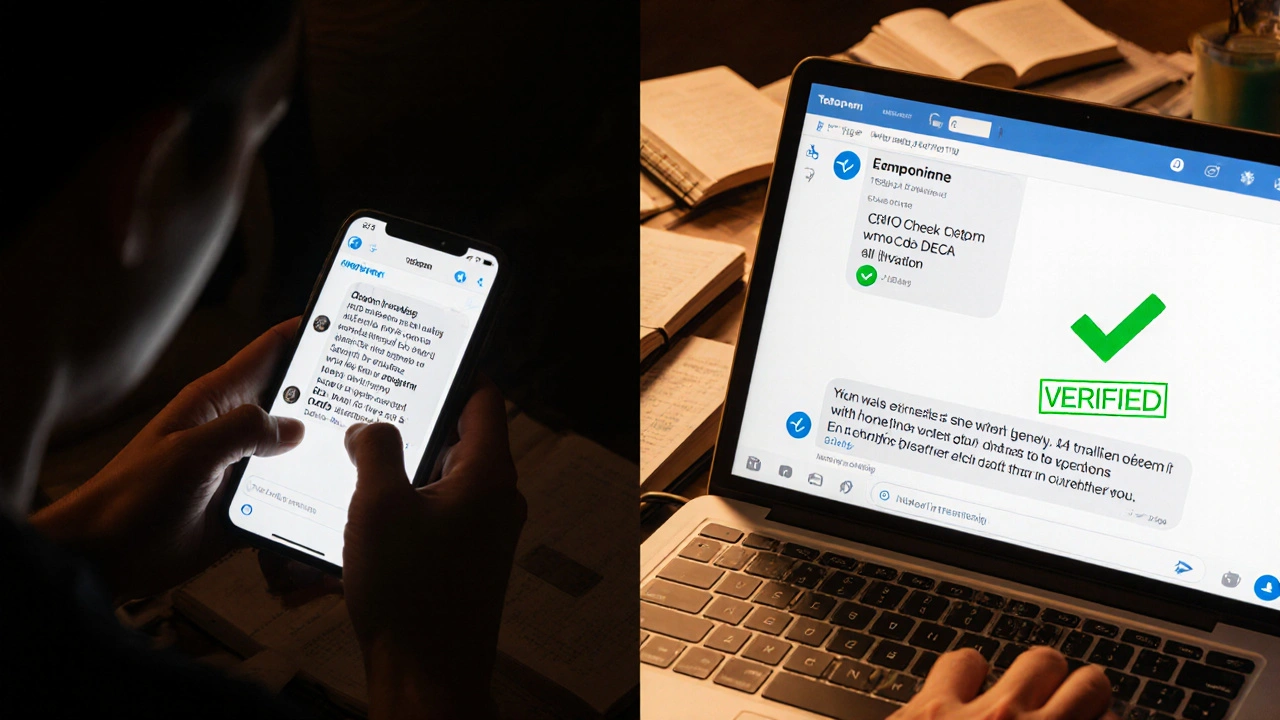

Some communities figured this out. They didn’t wait for Telegram to fix it. They built their own.

Take a Bitcoin group with 15,000 members. They created a simple three-step rule: any message claiming a price change or new token launch must be reviewed by at least two trusted members before being reposted. These trusted members aren’t admins. They’re regular users who’ve earned respect by consistently citing sources, correcting mistakes, and staying calm during hype cycles. If someone posts “ETH will hit $10,000 tomorrow!” without a link to a credible source, it gets deleted. If they reply with a CoinDesk article and a timestamp, it gets approved and pinned. They tracked results. Over six months, misinformation dropped by an estimated 65%. That’s not magic. That’s structure.

Another group, focused on public health updates, uses a dedicated verification channel. When someone shares a claim, say “New drug cures diabetes,” it gets copy-pasted into the verification channel. Three volunteers check it against WHO, CDC, or peer-reviewed journals. If it’s false, they reply with “FALSE - source: [link]” and delete the original post. If it’s true, they reply “VERIFIED” and share the original message with a green checkmark emoji. Members learn fast. After a few weeks, people stop posting unverified claims altogether.

These aren’t tech giants. They’re just groups of people who got tired of being lied to.

How to Set Up Your Own Peer Review System

You don’t need a team of engineers. You don’t need a budget. You need three things: rules, roles, and repetition.

- Define what counts as misinformation. Be specific. Don’t say “no fake news.” Say: “No price predictions without a source from CoinMarketCap, CoinGecko, or an official project announcement.” Or: “No health claims without a link to PubMed or a government health site.”

- Create a verification team. Pick 3-5 active, calm, and reliable members. Not the existing admins. Not the loudest. The ones who always cite sources. Give them a custom title like “Fact Checker” and only the admin right they need (“Delete Messages”) so they can remove or flag posts. Rotate them monthly so no one feels like they’re in charge forever.

- Use a separate verification chat. Make it a private group called “#verify-here.” A channel won’t work for this, because only a channel’s admins can post in it. Members who want to post a claim send it there first. The team reviews it, then replies with “VERIFIED” or “FALSE - source: [link].” Only approved posts get shared in the main group.

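- Use Telegram’s permission settings. There’s no “Restricted” mode, but in the group’s Permissions you can turn off “Send Messages” for members so only admins can post. If you still want discussion, run the main feed as a channel with a linked discussion group: only admins publish, and everyone else comments underneath. This stops spam and lets your team control the flow.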

- Automate with bots. There are free bots like FactCheckBot that can auto-reply to keywords like “buy now” or “guaranteed returns” with a warning. Set it up in 10 minutes. It won’t catch everything, but it catches the low-hanging fruit.
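
The rules and roles above can be sketched as a small review queue. This is a minimal illustration under stated assumptions, not the code behind any bot mentioned here. It doesn’t connect to Telegram at all; it only models the decision a verification team makes. The domain allowlist, the keyword list, the two-approval threshold (borrowed from the Bitcoin group example), and the names `Claim`, `ReviewQueue`, and `has_trusted_source` are all hypothetical.

```python
import re
from dataclasses import dataclass, field
from urllib.parse import urlparse

# Hypothetical allowlist built from sources named in this article.
# "Official project announcements" can't be allowlisted generically; add each project's domain by hand.
TRUSTED_SOURCE_DOMAINS = {
    "coinmarketcap.com",
    "coingecko.com",
    "coindesk.com",
    "pubmed.ncbi.nlm.nih.gov",
    "who.int",
    "cdc.gov",
}

# Phrases a keyword bot might answer with a warning (illustrative, not exhaustive).
WARNING_KEYWORDS = ("buy now", "guaranteed returns")

URL_PATTERN = re.compile(r"https?://\S+")


def keyword_warning(text: str) -> bool:
    """True if the message contains a phrase that should trigger an automatic warning."""
    lowered = text.lower()
    return any(keyword in lowered for keyword in WARNING_KEYWORDS)


def has_trusted_source(text: str) -> bool:
    """True if any link points at an allowlisted domain or one of its subdomains."""
    for url in URL_PATTERN.findall(text):
        # Strip trailing punctuation that often sticks to links in chat messages.
        host = (urlparse(url.rstrip(".,;)")).hostname or "").lower()
        if any(host == domain or host.endswith("." + domain) for domain in TRUSTED_SOURCE_DOMAINS):
            return True
    return False


@dataclass
class Claim:
    author: str
    text: str
    approvals: set[str] = field(default_factory=set)  # distinct reviewer names


class ReviewQueue:
    """Holds claims from the verification chat until enough fact checkers sign off."""

    def __init__(self, fact_checkers: set[str], required_approvals: int = 2):
        self.fact_checkers = fact_checkers
        self.required_approvals = required_approvals

    def approve(self, claim: Claim, reviewer: str) -> None:
        if reviewer not in self.fact_checkers:
            raise PermissionError(f"{reviewer} is not on the verification team")
        # Assumption: authors can't approve their own claims.
        if reviewer == claim.author:
            raise PermissionError("authors cannot approve their own claims")
        claim.approvals.add(reviewer)  # a set, so repeat approvals don't count twice

    def verdict(self, claim: Claim) -> str:
        if not has_trusted_source(claim.text):
            return "REJECTED: no link to an allowlisted source"
        if len(claim.approvals) < self.required_approvals:
            return f"PENDING ({len(claim.approvals)}/{self.required_approvals} approvals)"
        return "VERIFIED"


team = {"alice", "bob", "carol"}
queue = ReviewQueue(team)
claim = Claim("dave", "ETH is up 5% today: https://www.coingecko.com/en/coins/ethereum")
queue.approve(claim, "alice")
print(queue.verdict(claim))  # PENDING (1/2 approvals)
queue.approve(claim, "bob")
print(queue.verdict(claim))  # VERIFIED
```

Checking the hostname instead of searching the text for a domain string means a look-alike link such as `coingecko.com.evil.io` doesn’t pass. A bare claim like “ETH will hit $10,000 tomorrow!” is rejected before anyone has to spend time on it.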

What Doesn’t Work

Not every group can do this. And some have tried and failed. In political groups, peer review often collapses into bias. One side calls something “fake news.” The other side calls it “censored truth.” No one agrees on sources. The result? Everyone leaves. Or worse: the group splits into two, each with its own version of reality.

Also, don’t treat reactions as votes. Telegram has no upvotes, only emoji reactions, and you can’t measure truth by how many people react with a fire emoji. Truth needs verification, not popularity.

And don’t give too much power to one person. If the admin is the only one who decides what’s true, people lose trust. They’ll whisper in DMs. They’ll start new groups. The system fails because it’s not fair.

The Hidden Risks

Peer review sounds great, until you realize how fragile it is. Telegram collects metadata: your IP address, device info, even your username history. If someone on your verification team is compromised, say by a government or a hacker, they could track who’s flagging what. That’s a real danger in countries with strict censorship.

Also, Telegram’s server code isn’t open source; only the apps are. You can’t check how their moderation tools really work. If they suddenly start deleting posts from your verification channel without warning, you’re out of luck.

And then there’s the time cost. Setting up a system takes 10-15 hours. Maintaining it takes 2-3 hours a week. That’s a lot for volunteers. Most groups burn out after three months.
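
To see what those figures add up to, here is a rough tally of volunteer hours up to the three-month mark. It assumes three months is about 13 weeks and that the 2-3 weekly hours are the team’s combined total, which the numbers above don’t specify.

```python
# Rough volunteer-hour tally using the ranges quoted above.
# Assumptions: "three months" ~ 13 weeks; weekly hours are the whole team's total.
SETUP_HOURS = (10, 15)   # one-time setup, low/high estimate
WEEKLY_HOURS = (2, 3)    # ongoing maintenance per week, low/high estimate
WEEKS = 13


def cumulative_hours(setup: int, weekly: int, weeks: int) -> int:
    """Setup cost plus maintenance accumulated over the given number of weeks."""
    return setup + weekly * weeks


low = cumulative_hours(SETUP_HOURS[0], WEEKLY_HOURS[0], WEEKS)
high = cumulative_hours(SETUP_HOURS[1], WEEKLY_HOURS[1], WEEKS)
print(f"First {WEEKS} weeks: {low}-{high} volunteer hours")  # First 13 weeks: 36-54 volunteer hours
```

Under those assumptions, a team has put in roughly 36 to 54 hours by the point where most groups burn out.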