The way journalists talk to informants has fundamentally shifted in the last decade. We used to rely heavily on landlines, secure email, and in-person meetings. Now, the primary bridge between reporters and sensitive information sits inside a chat application.

Telegram is a cloud-based instant messaging software that prioritizes speed, security, and scalability. It was created by brothers Nikolai and Pavel Durov and has become a preferred tool for activists, whistleblowers, and journalists operating in high-risk environments. As we move through 2026, its influence extends far beyond simple messaging; it dictates how access is granted and maintained.

Key Takeaways

- Source relationships are now often mediated through encrypted digital channels rather than physical contact.

- Telegram's Secret Chat feature uses end-to-end encryption to protect sensitive leaks.

- Public channels allow rapid distribution, but verification becomes harder without face-to-face validation.

- Journalists must adapt traditional ethics protocols to accommodate asynchronous, app-based workflows.

- Digital footprints persist even when users think they are being anonymous.

The Architecture of Trust in Messaging Apps

When you open a conversation with a source on Telegram, you are stepping into a different security environment than email or standard SMS. Unlike many competitors, Telegram offers two distinct modes of communication. Standard (cloud) chats are encrypted between the app and Telegram's servers, which means Telegram holds the keys. These chats are convenient for group coordination but carry inherent risk for highly sensitive material.

However, the platform introduced a feature called Secret Chats, which changes the dynamic entirely. These utilize end-to-end encryption, ensuring that only the sender and receiver can read the message content. For a journalist protecting a whistleblower, this capability is the bedrock of trust. It reduces the fear of surveillance interception. If a source knows the provider cannot see their words, they are more likely to share unredacted documents or sensitive details.

This creates a unique psychological contract. A source might hesitate to call a hotline or send a hard drive via courier. But sending a photo of a document through a disappearing message window feels lower risk. That perceived safety directly influences the quality and quantity of access a reporter receives. However, this reliance shifts the burden of security from the newsroom’s infrastructure to the individual user's device settings.

Anonymity Versus Accountability

In 2026, anonymity is both a shield and a liability. Telegram lets users communicate under a username, so a phone number does not have to be shown to every contact or in public spaces. This enables whistleblowers to bypass gatekeepers within organizations. They can upload files to a public channel or send them privately without revealing their identity immediately.

Digital anonymity is the ability of users to conceal their real-world identity while interacting online. It is critical for sources in authoritarian regimes, where speaking out could lead to arrest or worse. By using usernames, sources can keep their daily lives separate from their leak activities. Yet this same anonymity complicates verification.

A major challenge arises here: verifying who is really on the other end. In traditional reporting, you meet the person, look at their ID badge, and confirm their position. On an encrypted app, a bad actor or a disinformation campaign can easily pose as a legitimate insider. Confirming identity without breaking confidentiality takes significant effort. Reporters often request secondary proofs (metadata, original file properties, or video verification) that take time and technical skill to validate.
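One way to check original file properties is to compare a cryptographic hash of the file a source sent against a copy obtained independently. The Python sketch below is a minimal illustration, not a newsroom standard: the helper names `fingerprint` and `same_file` and the sample file names are hypothetical, and it only shows whether two files are byte-for-byte identical.

```python
import hashlib
import os
import tempfile


def fingerprint(path, chunk_size=65536):
    """Return the SHA-256 digest and byte size of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return {"sha256": digest.hexdigest(), "size": os.path.getsize(path)}


def same_file(path_a, path_b):
    """True only if both files have identical content (same digest and size)."""
    return fingerprint(path_a) == fingerprint(path_b)


# Demonstration with throwaway files; the names and contents are made up.
with tempfile.TemporaryDirectory() as workdir:
    leaked = os.path.join(workdir, "leaked.pdf")
    reference = os.path.join(workdir, "reference.pdf")
    altered = os.path.join(workdir, "altered.pdf")
    samples = [
        (leaked, b"contract v1"),
        (reference, b"contract v1"),
        (altered, b"contract v2"),
    ]
    for path, content in samples:
        with open(path, "wb") as handle:
            handle.write(content)
    matches_reference = same_file(leaked, reference)
    matches_altered = same_file(leaked, altered)
```

A matching hash shows that two copies are the same file. It says nothing about who sent it, so it supplements the other proofs rather than replacing them.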

Navigating Community Norms and Group Dynamics

Beyond one-on-one chats, large parts of the ecosystem operate through communities. Channels function differently than private groups. They are typically one-way broadcast tools where admins post content and subscribers receive it. For news gathering, this is a goldmine for monitoring grassroots movements.

A single channel dedicated to a specific sector, such as local government procurement, might reveal corruption before official reports exist. Researchers analyzing millions of messages found that community norms significantly influence behavior. Toxicity rises and falls based on group culture. A reporter embedded in such a group learns to spot patterns. If a sudden influx of identical phrases appears, it suggests bot activity. Real human sources often leave linguistic fingerprints that algorithms miss.
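The "identical phrases" signal can be approximated in a few lines of Python. This is a rough sketch under stated assumptions: messages are already available as `(sender, text)` pairs, the function names are hypothetical, and the threshold of three distinct accounts is an arbitrary choice for illustration. It does not use any Telegram API.

```python
from collections import defaultdict
import re


def normalize(text):
    """Lowercase, drop punctuation, and collapse whitespace so near-identical posts match."""
    text = re.sub(r"[^\w\s]", "", text.lower())
    return " ".join(text.split())


def repeated_phrases(messages, min_senders=3):
    """Return normalized texts posted by at least `min_senders` distinct accounts.

    `messages` is an iterable of (sender, text) pairs; this input shape and
    the default threshold are assumptions made for the example.
    """
    senders_by_text = defaultdict(set)
    for sender, text in messages:
        senders_by_text[normalize(text)].add(sender)
    return {
        text: sorted(senders)
        for text, senders in senders_by_text.items()
        if text and len(senders) >= min_senders
    }


# Made-up sample data: three accounts posting the same line with minor variations.
sample = [
    ("acct_a", "The mayor is innocent!!"),
    ("acct_b", "the mayor is innocent"),
    ("acct_c", "The  mayor is innocent."),
    ("acct_d", "Has anyone seen the tender documents?"),
]
flagged = repeated_phrases(sample)
```

A hit is a prompt for closer reading, not proof of automation. Real communities also repeat slogans and copy-paste announcements.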

This collective intelligence helps journalists identify emerging stories. But it creates a dependency. If the admin bans a reporter, access vanishes instantly. The relationship exists entirely at the whim of the channel operator. There is no institutional backing. This makes source retention fragile compared to long-term professional networks built over years of physical interaction.

Risks of Digital Permanence

We must discuss the illusion of deletion. Many users assume that self-destructing messages are gone forever. While the timer deletes the message from the visible chat history, backups remain a concern. Cloud storage on smartphones often syncs photos and attachments before the timer runs out. If a phone is seized, forensic tools can sometimes recover deleted data depending on the operating system version.

Journalists advising sources need to understand these technical realities. Telling a source to delete a file isn't enough; they must understand that metadata (timestamps, location, file hash values) can still survive. In court proceedings, these digital artifacts have been used to trace leaks. Therefore, the relationship requires ongoing education. A journalist isn't just interviewing; they are consulting on digital hygiene. This advisory role deepens the bond but adds layers of responsibility regarding liability.
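To make the metadata point concrete: in JPEG photos, camera timestamps and GPS coordinates are typically stored in an EXIF block near the start of the file. The sketch below uses only the Python standard library to check whether a JPEG still carries such a block. It is a simplified illustration, and `has_exif` is a hypothetical helper name. It walks the header segments and stops at the image data, so it will not catch metadata stored in other formats or containers.

```python
import struct


def has_exif(jpeg_bytes):
    """Return True if a JPEG byte string contains an APP1 segment holding EXIF data."""
    if not jpeg_bytes.startswith(b"\xff\xd8"):
        raise ValueError("not a JPEG: missing start-of-image marker")
    pos = 2
    while pos + 4 <= len(jpeg_bytes):
        if jpeg_bytes[pos] != 0xFF:
            break  # unexpected data; stop scanning rather than guess
        marker = jpeg_bytes[pos + 1]
        if marker == 0xFF:  # fill byte before a marker; skip it
            pos += 1
            continue
        if marker == 0xDA:  # start of scan: compressed image data follows
            break
        # Segment length is big-endian and includes its own two bytes.
        (length,) = struct.unpack(">H", jpeg_bytes[pos + 2:pos + 4])
        payload = jpeg_bytes[pos + 4:pos + 2 + length]
        if marker == 0xE1 and payload.startswith(b"Exif\x00\x00"):
            return True
        pos += 2 + length
    return False


# Synthetic byte strings for demonstration only; these are not real photos.
tail = b"\xff\xda\x00\x02\xff\xd9"
with_exif = (
    b"\xff\xd8\xff\xe1" + struct.pack(">H", 12) + b"Exif\x00\x00" + b"\x00" * 4 + tail
)
without_exif = b"\xff\xd8\xff\xe0" + struct.pack(">H", 7) + b"JFIF\x00" + tail

exif_found = has_exif(with_exif)
exif_missing = has_exif(without_exif)
```

A `False` result only means no EXIF block was found in the header. It is not a guarantee that the file is free of identifying information.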

Ethical Guidelines for 2026 Reporting

News organizations have had to update their standards to match this technology. Old rules about "protecting the source" focused on record locks and safe drop boxes. New guidelines address account management.

- Using burner accounts separate from personal identifiers.

- Never allowing the app to index contacts automatically.

- Establishing kill-switches where communication stops abruptly if compromised.

- Avoiding voice notes if biometric voice recognition poses a threat.

Adopting these rules creates a barrier to entry for casual tips but filters for serious informants. It signals to the source that the reporter takes security seriously. This shared commitment to operational security strengthens the professional bond.

Comparison: Traditional vs. App-Based Sourcing

| Feature | Traditional (Phone/Email) | Telegram / Secure Messaging |
|---|---|---|
| Speed | Moderate (hours to days) | Instant (seconds) |
| Anonymity | Low (ISP records, phone logs) | Higher (username-based; phone number needed to register but can be hidden from contacts) |
| Encryption | Varies (mostly TLS/SSL in transit) | End-to-end in Secret Chats only |
| Verification | Easier (physical meeting) | Difficult (requires digital forensics) |
| File Transfer | Limited size | Large files supported |

Managing the Human Element

Technology solves logistics, but it doesn't solve trust completely. A key part of maintaining source relationships involves understanding human vulnerability. When communication is reduced to text, tone is lost. Misunderstandings happen faster. A sarcastic joke can look like a demand. A delayed response can seem like rejection.

Journalists often find themselves needing to be more proactive in reassuring sources through the screen. Simple text updates, acknowledging receipt without judgment, and respecting time zones become crucial. The frictionless nature of typing can make people overshare or under-clarify. Building rapport requires intentional pacing.

Furthermore, the pressure of immediacy is real. In a chat bubble, silence speaks loudly. Sources expect responses quickly because the medium promises it. Newsrooms must manage internal expectations so editors don't push reporters to force connections. Maintaining ethical distance is harder when a notification sounds every five minutes.

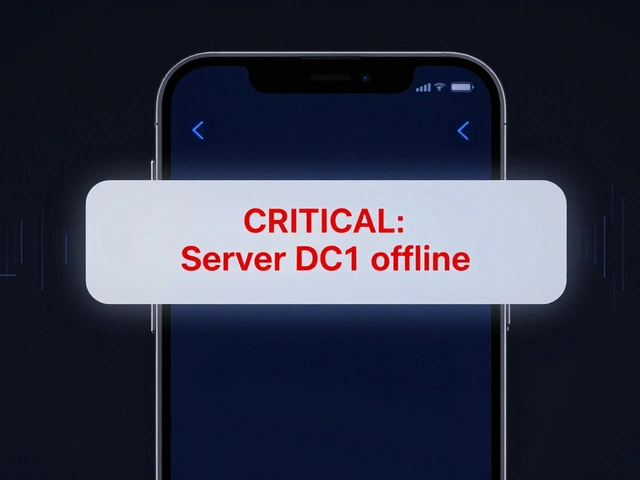

Conclusion on Platform Dependence

We must acknowledge that relying on a third-party platform introduces systemic risk. If the service shuts down, the channel to a source disappears with it. If it complies with subpoenas in certain jurisdictions, account data that points to a reporter's sources could in theory be exposed. Diversification is necessary.

Smart reporters do not put all their eggs in one basket. They maintain more than one secure messaging app, use Signal for calls, and keep hard copies where possible. The goal is redundancy. Even though Telegram has become a staple in 2026 media toolkits, treating it as the sole lifeline creates a dangerous bottleneck. Sustainable relationships require a mix of digital convenience and analog caution.

Frequently Asked Questions

Is Telegram truly safe for whistleblowers?

It depends on usage. Standard chats are not secure against the provider. For true end-to-end encryption you must start a Secret Chat, which is one-to-one and tied to the devices it was started on. Your phone's own security also matters as much as the app's.

Can Telegram track my conversations?

For cloud chats, Telegram holds the encryption keys, so it is technically able to access the content. For Secret Chats, it cannot read the content, because the keys exist only on the two participating devices. However, metadata such as contact frequency may remain visible.

What is the best way to verify an anonymous source?

Request multiple forms of proof without asking for names. Look for consistent file formats, ask for specific details not yet public, and corroborate with independent records. Do not rely on visual identification alone.

Do disappearing messages guarantee privacy?

No. Screenshots can be taken before the timer expires. Backups may retain images temporarily. Assume anything sent digitally can potentially be recovered by sophisticated adversaries.

How do newsrooms handle Telegram compliance risks?

Organizations usually require dual-device verification and prohibit storing sensitive chats on work-owned devices to prevent subpoena exposure. Personal accounts managed strictly for source protection are common practice.