Most people assume that any app without a strict moderation team is basically a wasteland of fake news. Telegram, a cloud-based instant messaging service that emphasizes encrypted communication and large-scale broadcasting through channels, is a prime example. Because it doesn't police content the way a giant like Meta does, it's often painted as the ultimate hub for misinformation. But is it actually a free-for-all, or is the truth more complicated?

If you've spent any time in large public channels, you know the vibe is different from a curated Facebook feed. There are no algorithms pushing "suggested" content into your face based on your data profile. You see what the admin posts, and that's it. This structural difference changes everything about how misinformation spreads on Telegram compared to mainstream platforms.

The Myth of the Misinformation Saturation

There is a common belief that Telegram is completely saturated with lies. However, a study of over 200,000 posts tells a different story. While links to known misinformation sources are shared about four times more often than professional news, the fake stuff isn't actually everywhere. It's mostly confined to a few specific channels.
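To make "confined to a few specific channels" concrete, here is a minimal Python sketch of how that kind of concentration could be measured. It is not the study's method: the flagged-domain list, the channel names, and the `misinformation_concentration` helper are all made up for illustration.

```python
from collections import Counter
from urllib.parse import urlparse

# Hypothetical flagged domains; a real analysis would use a curated source list.
FLAGGED_DOMAINS = {"fake-news.example", "rumor-mill.example"}


def misinformation_concentration(posts, top_k=1):
    """Return the share of flagged links posted by the top_k channels.

    posts: iterable of (channel_name, url) pairs.
    """
    flagged_per_channel = Counter()
    for channel, url in posts:
        domain = urlparse(url).netloc.lower()
        if domain in FLAGGED_DOMAINS:
            flagged_per_channel[channel] += 1

    total = sum(flagged_per_channel.values())
    if total == 0:
        return 0.0  # no flagged links at all
    top = sum(count for _, count in flagged_per_channel.most_common(top_k))
    return top / total


# Toy data: five flagged links, three of them from a single channel.
posts = [
    ("channel_a", "https://fake-news.example/story1"),
    ("channel_a", "https://fake-news.example/story2"),
    ("channel_a", "https://rumor-mill.example/x"),
    ("channel_b", "https://rumor-mill.example/y"),
    ("channel_c", "https://news.example/report"),
    ("channel_d", "https://fake-news.example/story3"),
]
share = misinformation_concentration(posts, top_k=1)
print(f"Top channel accounts for {share:.0%} of flagged links")
```

A share close to 1.0 for a small `top_k` would point to concentration in a few channels; a low share would suggest the links are spread widely.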

Surprisingly, professional media organizations still compete effectively for audiences on the platform. This suggests that simply removing moderation doesn't automatically make fake news the winner. On mainstream platforms, visibility is often managed by a secret sauce of algorithms. On Telegram, visibility depends on whether a user actually chooses to subscribe to a channel. The audience for misinformation tends to be a small, highly active community rather than the general public.

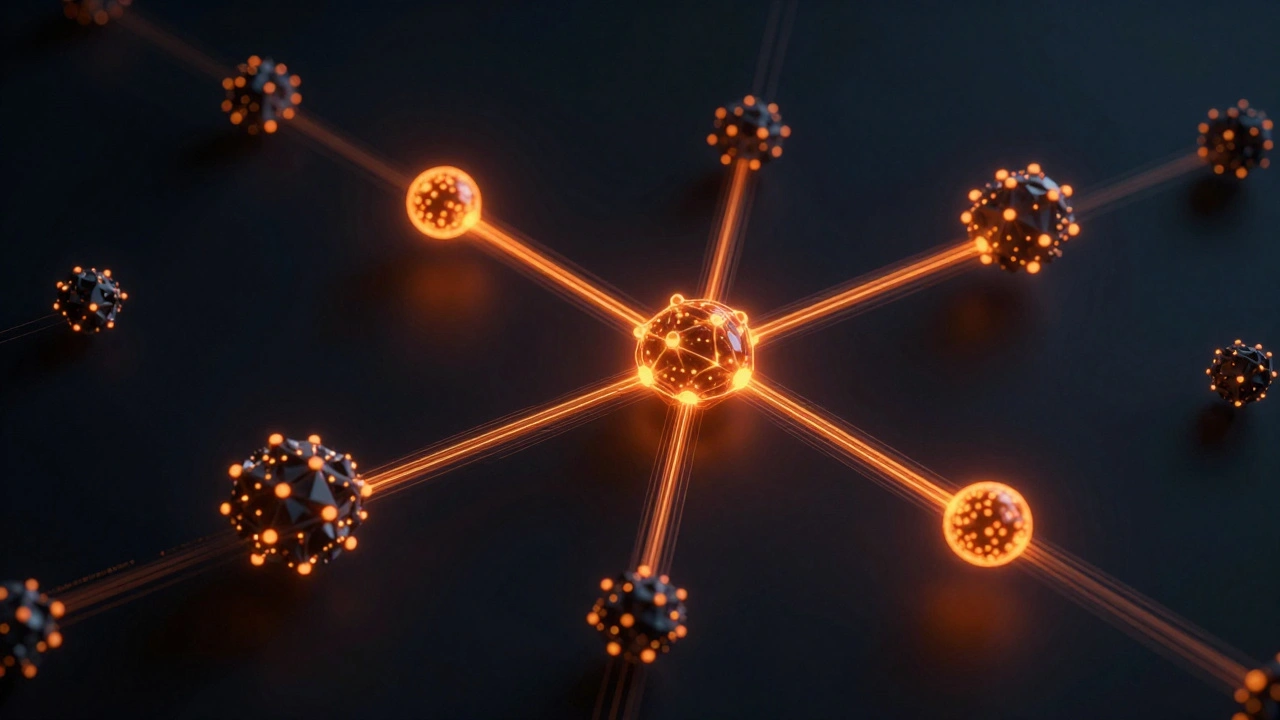

How Content Spreads: Bridge Nodes vs. Algorithmic Feeds

Mainstream platforms use centralized systems to flag and demote content. Telegram doesn't really do that. Instead, misinformation moves through a network of "bridge nodes." These are high-impact channels that connect otherwise isolated groups, acting as a gateway that pushes a specific narrative from one niche community to another.

Researchers analyzed 1,740 channels and found that just a handful of these bridge nodes (as few as 12 in specific datasets) were responsible for the bulk of cross-community misinformation flows. This means the "problem" isn't the millions of users, but a few strategic points in the network. While a mainstream platform might try to shadowban a keyword across the whole site, a more effective strategy for Telegram is identifying and monitoring these specific connectors.
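The bridge-node idea can be sketched in a few lines of Python. This is a simplified heuristic, not the researchers' actual method: it assumes you already have a community label for each channel and a list of forwards, and it ranks channels by how many other communities pick up their posts. The function name, the data, and the `min_communities` cutoff are all hypothetical.

```python
from collections import defaultdict


def find_bridge_candidates(forwards, community_of, min_communities=2):
    """Rank channels by how many *other* communities forward their posts.

    forwards: iterable of (source_channel, forwarding_channel) pairs.
    community_of: dict mapping channel name -> community label.
    """
    reached = defaultdict(set)
    for source, forwarder in forwards:
        src_comm = community_of.get(source)
        fwd_comm = community_of.get(forwarder)
        if src_comm is None or fwd_comm is None:
            continue  # skip channels without a community label
        if src_comm != fwd_comm:
            reached[source].add(fwd_comm)

    candidates = [
        (channel, len(comms))
        for channel, comms in reached.items()
        if len(comms) >= min_communities
    ]
    # Most communities reached first; ties broken alphabetically.
    return sorted(candidates, key=lambda item: (-item[1], item[0]))


# Toy network: "hub" sits in community A but is forwarded into B and C.
community_of = {"hub": "A", "a1": "A", "b1": "B", "b2": "B", "c1": "C"}
forwards = [
    ("hub", "b1"),
    ("hub", "c1"),
    ("hub", "a1"),  # same community, does not count
    ("a1", "b1"),   # reaches only one other community
    ("b1", "b2"),   # same community, does not count
]
print(find_bridge_candidates(forwards, community_of))
```

A fuller analysis would probably use a graph measure such as betweenness centrality, but even this rough count shows why monitoring a short list of connectors can cover most cross-community flow.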

| Feature | Mainstream Platforms (e.g., Meta, X) | Telegram |
|---|---|---|
| Moderation Style | Centralized, proactive, algorithmic flagging | Reactive, minimal, mostly report-based |
| Content Discovery | Algorithmic recommendations (Feed) | Subscription-based (Channels/Groups) |
| Propagation Path | Viral trends via algorithmic amplification | Cross-community bridge nodes |
| Transparency | Public feeds, accessible to researchers | High use of encrypted/private groups |

The Technical Wall: Encryption and Privacy

One of the biggest headaches for anyone trying to stop fake news is end-to-end encryption, a system of communication in which only the communicating users can read the messages, preventing third-party access. Telegram is primarily a cloud-based service, and end-to-end encryption applies only to its opt-in Secret Chats. Even so, its semi-private nature and private groups create echo chambers that are essentially invisible to outside observers.

Mainstream platforms have the advantage of seeing almost everything that happens in a public space. Telegram, however, allows for small, tight-knit groups that function as dark corners of the internet. The only real tools Telegram provides are email-based reporting systems (such as its abuse address), which are a drop in the bucket compared to the AI-driven moderation suites used by other tech giants.

New Weapons in the Fight: AI and Community Action

Since the "top-down" approach doesn't work well here, new methods are emerging. Researchers from Bochum and Lausanne developed a propaganda detection mechanism, an automated tool designed to identify patterns of state-sponsored or malicious disinformation specifically within Telegram's architecture. This tool is faster and cheaper than hiring humans to read every single post, filling a huge gap in how we track propaganda.

Beyond the tech, there's the human element. CheckMate is a volunteer-driven initiative that provides on-demand fact-checking for messages forwarded from apps like WhatsApp and Telegram. Instead of waiting for a corporation to label a post as "misleading," users send a screenshot to a volunteer team. These volunteers vote on the accuracy and send a response back quickly. CheckMate even uses natural language processing to speed up these replies, creating a hybrid system that's way more agile than a corporate moderation board.
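As a rough illustration of how volunteer votes could be turned into a single reply, here is a small Python sketch. It is not CheckMate's actual logic: the labels, the `min_votes` and `threshold` values, and the `aggregate_votes` function are assumptions made up for this example.

```python
from collections import Counter


def aggregate_votes(votes, min_votes=3, threshold=0.6):
    """Return the winning label if it clears the threshold, else 'unverified'.

    votes: list of labels such as "true", "false", or "unsure".
    """
    if len(votes) < min_votes:
        return "unverified"  # not enough volunteers have weighed in yet
    label, count = Counter(votes).most_common(1)[0]
    if count / len(votes) >= threshold:
        return label
    return "unverified"  # no label has a clear enough majority


print(aggregate_votes(["false", "false", "true", "false"]))
print(aggregate_votes(["true", "false"]))
```

Requiring both a minimum number of votes and a clear majority is one way to keep a fast system from replying with a verdict that only one or two people stand behind.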

The Blind Spot: What We Don't Know

We have to be honest about the data: most of what we know about Telegram comes from public channels. Private groups, where a massive amount of user engagement happens, remain a black box. For example, research into COVID-19 misinformation had to build custom collection methods just to peek into specific groups.

This means the actual scale of the problem could be larger than the data suggests, or it could be even more concentrated. Until we have better ways to analyze private data without violating user privacy, we're only seeing a fraction of the map.

Practical Takeaways for Navigating the Noise

If you're managing a community or just trying to stay informed, don't assume every unmoderated space is a lie. But do stay skeptical. The key is to understand that on Telegram, you aren't being fed content by an AI; you are choosing your sources. If you follow a "bridge node" that frequently links to obscure, non-credible sites, you're effectively opting into a misinformation stream.

The most effective way to fight the spread isn't by asking the platform to censor everything (which it likely won't do) but by supporting community-led verification projects and using automated detection tools when possible.

Does Telegram have any moderation at all?

Yes, but it's very limited. They primarily rely on user reports sent via email to their abuse and DMCA addresses. They will take down content that is clearly illegal, but they don't have the massive, proactive moderation teams that you'll find at Facebook or X.

Why is misinformation easier to spread on Telegram?

It's not necessarily "easier" to spread, but it's harder to stop. The lack of centralized moderation and the use of private groups allow misinformation to live in echo chambers without any opposing viewpoints to challenge the narrative.

What are "bridge nodes" in the context of Telegram?

Bridge nodes are specific channels that act as connectors between different isolated communities. They take a piece of misinformation from one niche group and broadcast it to several other groups, effectively acting as a super-spreader for fake news.

Is CheckMate a tool I can use?

CheckMate is a community-run initiative where you can forward suspicious images or messages to their WhatsApp number. Volunteers and AI then help verify if the information is true or false, providing a faster alternative to official fact-checking sites.

Can professional news compete on Telegram?

Surprisingly, yes. Research shows that leading media organizations are often able to compete for wider audiences and even "win" against misleading sources, proving that a lack of moderation doesn't automatically mean the truth is drowned out.

Next Steps for Users and Admins

If you are a channel admin, consider implementing your own verification markers or partnering with community fact-checkers to keep your audience informed. For the average user, the best defense is a bit of manual effort: when you see a shocking claim, check if it's being reported by multiple independent sources before hitting the forward button.