Running a news operation on Telegram isn’t like posting on Twitter or Facebook. With over 800 million monthly users and 150 million messages processed every minute, Telegram moves at lightning speed. If your AI vendor can’t keep up or, worse, gets it wrong, you could spread false casualty numbers during a war, mistranslate a political statement, or trigger a legal fine in the EU. The stakes are real. And most vendors selling generic AI tools are completely unprepared for this.

Why Generic AI Vendors Fail on Telegram

You might be tempted to use a cheap AI tool that works fine for generating blog posts or social media captions. But news on Telegram demands precision. A vendor that uses a single LLM prompt to summarize, translate, and verify content is flying blind. These systems don’t understand context. They can’t tell the difference between a verified AFP report and a rumor posted in a Telegram group with 200 followers. In December 2025, one popular budget vendor, QuickNewsAI, falsely reported 120 deaths in a Ukraine border clash. The number was made up. The channel was suspended. The organization lost trust overnight.

Top-performing vendors use multi-agent systems. That means separate AI modules, each trained for one job: one for fact-checking, one for translation, one for compliance, one for summarization. These agents talk to each other. If the fact-checker flags a claim as unverified, the summarizer doesn’t publish it. If the translation agent detects a culturally loaded term, it flags it for human review. This isn’t just better; it’s necessary.
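To make the hand-off between agents concrete, here is a minimal Python sketch of that gating logic. It is an illustration under stated assumptions, not any vendor’s implementation: the `NewsItem` type, the source allowlist, the loaded-term list, and the placeholder "summary" are all hypothetical, and real agents would be models rather than simple lookups.

```python
from dataclasses import dataclass, field
from typing import List, Optional

# Hypothetical allowlist and term list, for illustration only.
VERIFIED_SOURCES = {"AFP", "Reuters", "AP"}
LOADED_TERMS = {"militia", "regime"}


@dataclass
class NewsItem:
    text: str
    source: str
    flags: List[str] = field(default_factory=list)
    needs_human_review: bool = False


def fact_check_agent(item: NewsItem) -> NewsItem:
    """Flag items whose source is not on the verified allowlist."""
    if item.source not in VERIFIED_SOURCES:
        item.flags.append("unverified")
    return item


def translation_agent(item: NewsItem) -> NewsItem:
    """Flag culturally loaded terms for an editor (no real translation here)."""
    words = {w.strip(".,!?").lower() for w in item.text.split()}
    if words & LOADED_TERMS:
        item.flags.append("loaded_term")
        item.needs_human_review = True
    return item


def summarizer_agent(item: NewsItem) -> Optional[str]:
    """Refuse to produce publishable output for unverified items."""
    if "unverified" in item.flags:
        return None
    return item.text[:80]  # placeholder standing in for a real summary


def run_pipeline(item: NewsItem) -> dict:
    """Run the agents in order and decide what happens to the item."""
    item = translation_agent(fact_check_agent(item))
    summary = summarizer_agent(item)
    if summary is None:
        status = "blocked"
    elif item.needs_human_review:
        status = "human_review"
    else:
        status = "publish"
    return {"status": status, "summary": summary, "flags": item.flags}
```

The point of the design is that the summarizer never sees an item as publishable unless the fact-checker has cleared it first, which is exactly what a single-prompt tool cannot guarantee.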

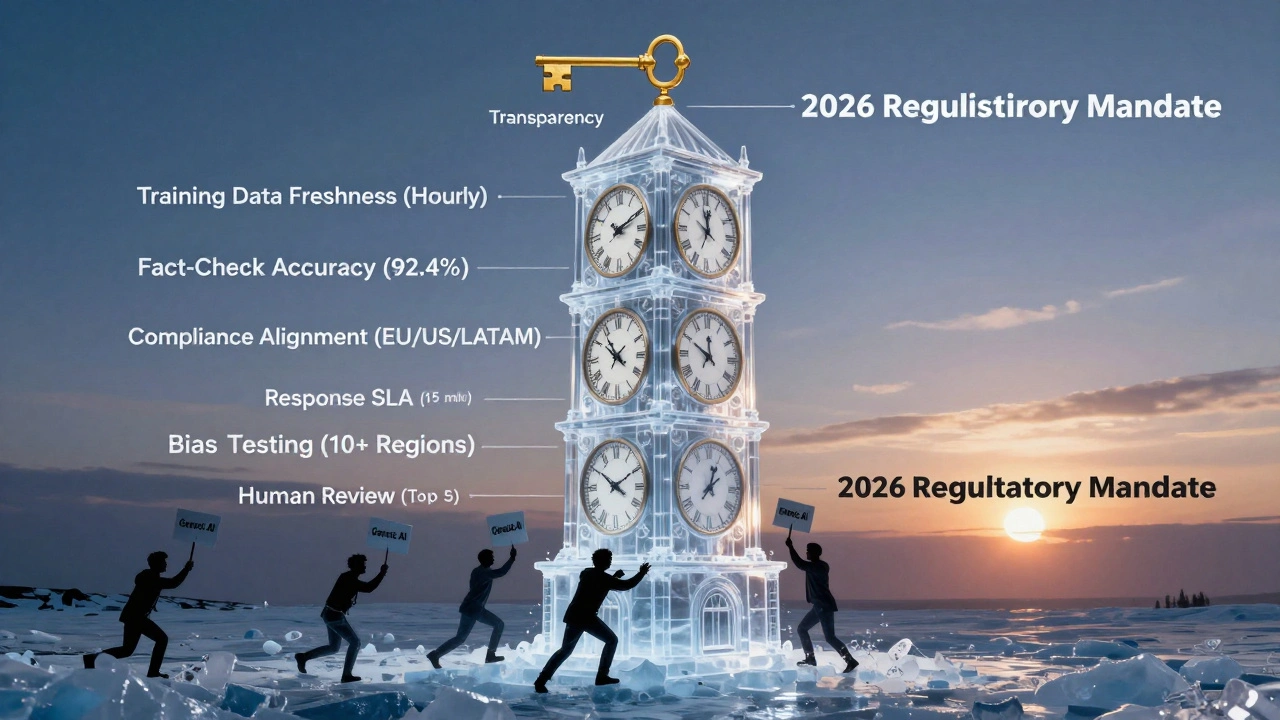

Must-Have Technical Specs for Telegram AI Vendors

Don’t accept vague promises. Ask for hard numbers. Here’s what you need to see in writing:

- Telegram Bot API 7.4 compatibility with webhook latency under 200ms for 95% of requests during peak traffic (10,000+ concurrent users).

- Processing capacity of at least 500 news items per minute without dropping messages.

- Fact-checking accuracy of 92.4% or higher, verified using the Media Trust Index benchmarks.

- Translation quality with BLEU scores above 45 for English, Spanish, Arabic, Russian, and Chinese.

- Summarization quality with ROUGE-L scores above 0.78.

- Hallucination rate below 1.2%, measured using the NewsGuard AI Integrity Framework v3.1.

- Media support for images up to 20MB and videos up to 2GB, with integration into Telegram’s Instant View.

If a vendor can’t provide this data, walk away. There’s no excuse in 2026. Leading vendors like NewsAI Pro don’t just claim these specs; they publish third-party audit reports from PwC Media Practice showing they meet them daily.
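If a vendor does hand over numbers, you can check them mechanically. The sketch below is one way to do that in Python, assuming the vendor’s report arrives as a plain dictionary; the metric names are hypothetical, the thresholds are the ones from the checklist above, and the 95th percentile uses the simple nearest-rank method.

```python
# Thresholds from the checklist above; the metric names are hypothetical.
MINIMUMS = {"items_per_minute": 500, "fact_check_accuracy_pct": 92.4}  # "at least"
STRICT_MINIMUMS = {"bleu": 45, "rouge_l": 0.78}                        # "above"
STRICT_MAXIMUMS = {"p95_latency_ms": 200, "hallucination_rate_pct": 1.2}  # "under"


def p95(samples):
    """Nearest-rank 95th percentile of a list of latency samples."""
    if not samples:
        raise ValueError("need at least one sample")
    ordered = sorted(samples)
    rank = (95 * len(ordered) + 99) // 100  # integer ceil(0.95 * n)
    return ordered[rank - 1]


def failed_specs(report):
    """Return the names of metrics that are missing or miss their threshold."""
    failures = []
    for name, floor in MINIMUMS.items():
        if report.get(name, float("-inf")) < floor:
            failures.append(name)
    for name, floor in STRICT_MINIMUMS.items():
        if report.get(name, float("-inf")) <= floor:
            failures.append(name)
    for name, ceiling in STRICT_MAXIMUMS.items():
        if report.get(name, float("inf")) >= ceiling:
            failures.append(name)
    return failures
```

A metric the vendor leaves out counts as a failure here, which matches the advice above: no data, no deal.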

Training Data Age Matters More Than You Think

A vendor’s training data isn’t just background info; it’s the foundation of your credibility. The best news AI vendors update their models hourly. They ingest verified reports from Reuters, AFP, AP, and trusted local outlets in real time. This lets them catch breaking developments as they happen and correct errors within minutes.

Compare that to budget vendors. Some still train on data frozen as far back as October 2021. That means their AI doesn’t know about the 2024 U.S. Algorithmic Accountability Act, the 2025 EU AI Act, or how the war in Gaza changed media reporting norms. If your AI can’t recognize that “militia” in one region means “freedom fighters” in another, you’re not just biased, you’re dangerous.

Ask: “When was your last training data refresh? Can I see the audit trail?” If they hesitate, they’re hiding something.
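If the vendor does share an audit trail, checking freshness takes a few lines. This Python sketch assumes, hypothetically, that the trail is a list of ISO 8601 timestamps with explicit UTC offsets, one per refresh; the one-hour limit mirrors the hourly update standard described above.

```python
from datetime import datetime, timedelta, timezone


def is_fresh(refresh_log, now, max_age=timedelta(hours=1)):
    """True if the most recent refresh in the log is no older than max_age.

    refresh_log: list of ISO 8601 strings such as "2026-01-10T11:30:00+00:00"
    (an assumed format; adapt the parsing to whatever the vendor exports).
    """
    if not refresh_log:
        return False  # no audit trail means no evidence of freshness
    latest = max(datetime.fromisoformat(ts) for ts in refresh_log)
    return now - latest <= max_age


# Example: a refresh 30 minutes ago passes the hourly standard.
now = datetime(2026, 1, 10, 12, 0, tzinfo=timezone.utc)
log = ["2026-01-10T10:00:00+00:00", "2026-01-10T11:30:00+00:00"]
print(is_fresh(log, now))
```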

Regulatory Compliance Isn’t Optional: It’s Legal Armor

The EU AI Act, the US Algorithmic Accountability Act, and similar laws in 47 countries now hold news organizations directly liable for AI-generated content. If your bot spreads misinformation, you can be sued. Fines can reach millions. That’s why compliance features aren’t a bonus; they’re your insurance policy.

Ask these four questions:

- Do you maintain complete data ownership of our news content and sources? (89% of failing vendors can’t answer this clearly.)

- Can you explain, in detail, why your system moderated a specific post? (Required under EU Digital Services Act Article 14.)

- What bias testing have you done across geopolitical perspectives, especially for conflict zones like Ukraine, Sudan, or Israel/Palestine?

- Who is personally accountable if your model generates false information during a breaking news event?

Dr. Fatima Nkosi of the European Journalism Centre says news orgs using vendors without verified bias testing have a 92% chance of facing defamation claims within 18 months. That’s not a risk. That’s a lawsuit waiting to happen.

Real-World Vendor Comparison: NewsAI Pro vs. Generic Tools

Here’s what you’re really choosing between:

| Feature | NewsAI Pro | Generic AI Tools (e.g., ContentGenius) |
|---|---|---|
| Architecture | Multi-agent system (6 specialized modules) | Single LLM prompt |
| Fact-checking accuracy | 87% | 63% |
| Training data freshness | Updated hourly | Often outdated (2021-2023) |
| Language support | 50+ languages, BLEU >45 | 10-15 languages, BLEU <35 |
| Regulatory compliance | Auto-adjusts for EU, US, LATAM, MENA rules | No region-specific compliance |
| Response time for critical errors | 15-minute SLA | 4+ hours |
| Monthly cost (10k-50k subscribers) | $1,200 | $300-$500 |

Yes, NewsAI Pro costs more. But in 2025, Reuters Institute found that organizations using generic tools had 3.7x more content integrity failures. The cheaper option isn’t cheaper; it costs more in reputation, legal fees, and lost readership.

Human-in-the-Loop: The Only Safe Way to Scale

Even the best AI can’t replace human judgment. The most successful newsrooms using Telegram AI don’t automate everything; they automate the heavy lifting. The AI scans incoming messages, flags potential issues, translates headlines, and drafts summaries. Then, a human editor reviews the top 5-10 most critical items before publishing.

The Associated Press found this approach reduced errors by 78%. It also gave journalists back control. No more panic when the AI mislabels a protest as a riot. No more confusion when a translated quote loses its tone. Humans handle nuance. AI handles volume.

Ask vendors: “Do you support human-in-the-loop workflows? Can we set up approval tiers for breaking news?” If they say no, they’re not built for journalism.
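What might approval tiers look like in practice? Here is a rough Python sketch, assuming the AI attaches a risk score between 0 and 1 to each draft and marks breaking stories. The `Draft` type, the tier names, and the scoring are hypothetical; the default of five review slots follows the 5-10 range mentioned above.

```python
from dataclasses import dataclass


@dataclass
class Draft:
    headline: str
    risk: float          # hypothetical 0..1 score assigned by the AI
    breaking: bool = False


def assign_tiers(drafts, review_slots=5):
    """Split drafts into approval tiers.

    - Breaking news always goes to a senior editor.
    - The highest-risk remaining drafts fill the editor review slots.
    - Everything else is queued without manual review.
    """
    tiers = {"senior_editor": [], "editor": [], "auto": []}
    routine = []
    for draft in drafts:
        if draft.breaking:
            tiers["senior_editor"].append(draft)
        else:
            routine.append(draft)
    routine.sort(key=lambda d: d.risk, reverse=True)
    tiers["editor"] = routine[:review_slots]
    tiers["auto"] = routine[review_slots:]
    return tiers
```

Raising `review_slots` during a crisis is a one-line change, which is the kind of control you want editors, not the vendor, to hold.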

Implementation: What to Expect in 8-12 Weeks

Don’t expect to plug in an AI tool and go live next week. Successful deployments take 8-12 weeks. Here’s the timeline:

- Weeks 1-2: Integration with Telegram Bot API. Requires certified developers familiar with webhook handling and message formatting (MarkdownV2/HTML).

- Weeks 3-5: Training the AI on your organization’s style guide, past reporting errors, and preferred sources.

- Weeks 6-8: Testing with real-time news feeds. Simulate breaking events. See how it handles conflicting reports.

- Weeks 9-12: Human review protocol setup, compliance checks, and staff training.
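Message formatting in weeks 1-2 is where many integrations first break: Telegram’s MarkdownV2 mode rejects messages containing unescaped special characters such as `.`, `-`, `(`, and `!`. The Python sketch below escapes plain text and builds the parameters for the Bot API `sendMessage` method. The function names and the channel handle are hypothetical, and actually sending the request (an HTTP POST to the Bot API with your bot token) is left out.

```python
import re

# Characters that Telegram's MarkdownV2 mode requires to be backslash-escaped
# in ordinary text, plus the backslash itself.
_MDV2_SPECIAL = re.compile(r"([_*\[\]()~`>#+\-=|{}.!\\])")


def escape_markdown_v2(text):
    """Escape plain text so MarkdownV2 renders it literally."""
    return _MDV2_SPECIAL.sub(r"\\\1", text)


def build_send_message(chat_id, headline, body):
    """Build sendMessage parameters with a bold headline and a plain body."""
    text = f"*{escape_markdown_v2(headline)}*\n{escape_markdown_v2(body)}"
    return {"chat_id": chat_id, "text": text, "parse_mode": "MarkdownV2"}


# "@example_channel" is a placeholder handle.
payload = build_send_message("@example_channel", "Budget vote", "Passed 310-290.")
print(payload["text"])
```

Escaping the headline and body separately, and adding the bold markers afterward, keeps formatting under your control even when a source headline contains asterisks or brackets.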

Top vendors provide 200+ page implementation guides with real examples: “How to configure AI for election coverage in Brazil,” “How to handle misinformation during a natural disaster in Indonesia.” Generic vendors give you a 10-page PDF that says “just connect your bot.”

The Future Is Transparent-and Audited

As of January 1, 2026, the European Commission requires all AI vendors serving news organizations to register in a public transparency portal. This includes details on training data sources, bias testing methods, and accountability structures. If a vendor isn’t registered, they’re not compliant, and you shouldn’t use them.

By 2027, 78% of global regulators plan to require third-party audits for news AI tools. The vendors who survive will be the ones who welcome scrutiny. Look for vendors who publish their audit results openly. Ask: “Can I see your last independent bias and hallucination report?” If they say no, they’re not ready for prime time.

The best AI vendor isn’t the one with the fanciest demo. It’s the one that treats journalism like a public trust, not a product to be sold.

What’s the biggest mistake newsrooms make when choosing an AI vendor for Telegram?

They pick based on price or ease of setup instead of accuracy, compliance, and transparency. Cheap AI tools often use outdated data, lack multi-agent systems, and can’t handle regional regulations. One false report during a crisis can destroy years of trust. The cost of a bad vendor isn’t just financial; it’s reputational and legal.

Can I build my own AI system for Telegram news instead of buying one?

Some major outlets like Reuters and Bloomberg do. But it requires a team of 5-8 specialists: AI engineers, journalists trained in ethics, compliance lawyers, and Telegram API experts. Most small and mid-sized newsrooms can’t afford the time or the cost (around $500,000+ annually). Buying from a specialized vendor is faster, safer, and more scalable.

How do I know if an AI vendor is truly bias-free?

Ask for their bias testing protocol. Top vendors test across 10+ geopolitical perspectives using datasets from conflict zones, elections, and protests. They don’t just say “we’re neutral”; they show you the results. MIT’s 2025 study found 81% of tested vendors had clear regional bias in conflict reporting. Demand proof, not promises.

Do I need to host the AI on my own servers?

For maximum control and compliance, yes. 91% of successful implementations use private cloud or on-premises models. This lets you fine-tune the AI to your audience’s language, cultural context, and legal requirements. Public cloud AI vendors often store your data overseas, which can violate privacy laws in the EU, Canada, or Brazil.

What happens if the AI makes a mistake after deployment?

The best vendors have a 15-minute SLA for critical errors, meaning they fix accuracy failures during breaking news within 15 minutes. They also provide a public correction log and update training data within hours. If your vendor can’t do that, you’re exposed. Mistakes on Telegram spread fast. Your response speed determines your credibility.
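You don’t have to take the SLA on faith. If you log when each critical error was reported and when it was fixed, a short script can list the breaches. This Python sketch assumes a hypothetical incident record with `id`, `reported_at`, and `fixed_at` fields; an incident that is still open counts as a breach once it has been open longer than the SLA.

```python
from datetime import datetime, timedelta


def sla_breaches(incidents, now, sla=timedelta(minutes=15)):
    """Return ids of incidents that took (or are taking) longer than the SLA.

    Each incident is a dict with "id", "reported_at", and "fixed_at";
    "fixed_at" is None while the incident is still open (assumed schema).
    """
    late = []
    for incident in incidents:
        end = incident["fixed_at"] or now
        if end - incident["reported_at"] > sla:
            late.append(incident["id"])
    return late


t0 = datetime(2026, 1, 10, 12, 0)
incidents = [
    {"id": "a", "reported_at": t0, "fixed_at": t0 + timedelta(minutes=9)},
    {"id": "b", "reported_at": t0, "fixed_at": t0 + timedelta(minutes=40)},
    {"id": "c", "reported_at": t0, "fixed_at": None},
]
print(sla_breaches(incidents, now=t0 + timedelta(minutes=20)))
```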

Is AI for Telegram news worth the investment?

If you’re serious about reaching global audiences on Telegram, yes. News organizations using specialized AI vendors report 40% faster publishing times, 60% fewer moderation errors, and 30% higher audience retention. The cost is a fraction of what you’d spend on a team of 10 translators and fact-checkers. The real question isn’t whether it’s worth it, but whether you can afford not to.