Did you know Telegram and The New York Times handle content moderation in completely different ways? Telegram relies on AI and community moderators, while news outlets use human editors to check everything before it goes live. This isn't just a technical difference; it affects what you see online every day. Let’s break down how these systems work, their pros and cons, and what they mean for users in 2026.

How Telegram Moderates Content

Telegram's moderation system is built differently from traditional platforms. Instead of editors reviewing content before it’s published, Telegram uses a mix of AI tools and community-driven moderation. The platform automatically scans public groups for illegal content like child abuse material or extremist propaganda. As of February 2026, these AI models detect 65% of extremist content, and every flagged item goes through human review. Private chats aren’t scanned unless a user reports them, which keeps those spaces private.

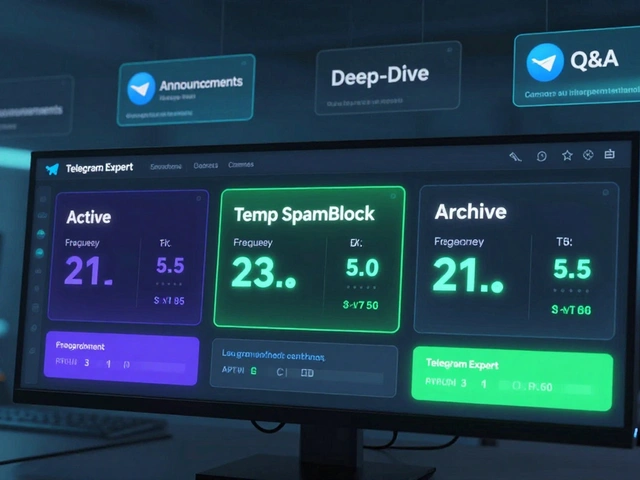

Group admins have significant control. They can install third-party moderation tools like Watchdog AI, which costs $590 per year for enterprise use. Watchdog AI analyzes messages in real time, catching 94% of hate speech that uses coded language or special characters. This flexibility lets communities set their own rules, like banning political jokes in a gardening group or allowing heated debates in a news channel. However, this decentralized approach means moderation quality varies wildly. A 2025 study found 47% inconsistency in how similar groups handle rule violations.

How Mainstream News Platforms Moderate Content

Mainstream news outlets like The New York Times, BBC, and CNN operate under a very different model. Every article, video, or comment goes through a human editorial team before publication. The New York Times alone employs 1,250 editorial staff members, with one editor handling 5-7 articles daily. This pre-publication review catches 99.4% of harmful content before it reaches readers. For comments sections, platforms like The Guardian use proprietary AI systems such as Ophan, which analyzes 2 million user interactions per hour to predict engagement and flag toxic language.

These systems prioritize consistency over flexibility. All content follows strict style guides, like The Associated Press Stylebook, resulting in 98.7% consistency in moderation decisions. However, this centralized control has downsides. A 2025 audit by Columbia Journalism Review found 78% of controversial perspectives get filtered out before public discussion. Users often complain about overzealous filters blocking legitimate political debates, as seen in The Guardian’s Trustpilot reviews, where 63% of negative comments cite "overly aggressive automated filters."

Technical Differences in Moderation Systems

The core difference between Telegram and news platforms lies in their technical infrastructure. Telegram uses multilingual transformer-based AI models trained on image-text datasets to detect harmful content. These models process data in real time but struggle with context in low-resource languages. For example, hate speech detection accuracy drops to 72.1% for languages like Swahili or Tagalog, according to Dr. Susan Li’s December 2025 white paper. Telegram’s bots also face API limits (30 messages per second), which can slow responses during peak usage.
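To make the 30-messages-per-second limit concrete, here is a minimal sketch of a token-bucket limiter that a moderation bot could use to pace its own replies. The `TokenBucket` class and its parameters are hypothetical names for illustration. This is not Telegram's API or how any particular bot library handles throttling.

```python
import time


class TokenBucket:
    """Allow roughly `rate` actions per second, with bursts up to `capacity`."""

    def __init__(self, rate: float, capacity: float, clock=time.monotonic):
        self.rate = rate          # tokens added per second
        self.capacity = capacity  # maximum tokens the bucket can hold
        self.clock = clock        # injectable clock, handy for testing
        self.tokens = capacity    # start with a full bucket
        self.last = clock()

    def try_acquire(self) -> bool:
        """Return True if one action may proceed now, False if it must wait."""
        now = self.clock()
        # Refill in proportion to elapsed time, never above capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


# Assumed limit taken from the figure quoted above.
bot_limiter = TokenBucket(rate=30, capacity=30)
```

A bot would call `bot_limiter.try_acquire()` before each outgoing message and queue the message when it returns `False`. During a spam wave, that queue is where the delay in moderator responses would show up.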

Mainstream news platforms, meanwhile, deploy specialized AI tools designed for journalism. The Washington Post’s Heliograf system generates 850 automated news stories monthly with human oversight. The Guardian’s Ophan system has 99.2% accuracy in predicting article engagement. These systems are built for high-volume content but lack the flexibility of Telegram’s third-party tools. For instance, a news platform can’t easily tailor its moderation rules to a niche community the way the admin of a Telegram group for local birdwatchers can.

Real-World User Experiences

User feedback highlights stark contrasts between the two systems. In r/TelegramModerators, 68% of community managers praise Watchdog AI for catching disguised hate speech using special characters. One admin wrote: "It understands context where older bots failed, blocking 92% of coded slurs in our crypto group." However, 32% complain about false positives in niche communities. A small gardening group saw 15% of legitimate plant-care advice flagged as "hate speech" due to keyword matches.
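Both failure modes above (missed terms disguised with special characters, and false positives from keyword matches) can be illustrated with a small sketch. Everything here is an assumption for illustration: the `LOOKALIKES` map, the stand-in blocked term "pest", and the two filter functions. It is not how Watchdog AI or any real moderation bot works.

```python
import re
import unicodedata

# Hypothetical map of look-alike characters; a real tool would need far more.
LOOKALIKES = str.maketrans(
    {"0": "o", "1": "i", "3": "e", "4": "a", "5": "s", "@": "a", "$": "s"}
)

BLOCKLIST = {"pest"}  # harmless stand-in for a blocked term


def normalize(text: str) -> str:
    """Lowercase, strip accents, and map common look-alike characters."""
    decomposed = unicodedata.normalize("NFKD", text.lower())
    no_accents = "".join(ch for ch in decomposed if not unicodedata.combining(ch))
    return no_accents.translate(LOOKALIKES)


def substring_flag(text: str) -> bool:
    """Naive filter: flags a message if a blocked term appears anywhere in it."""
    lowered = text.lower()
    return any(term in lowered for term in BLOCKLIST)


def word_flag(text: str) -> bool:
    """Stricter filter: normalizes first, then matches whole words only."""
    words = re.findall(r"[a-z]+", normalize(text))
    return any(word in BLOCKLIST for word in words)
```

With these definitions, `substring_flag("Try a pesticide-free spray")` flags a harmless gardening tip, while `word_flag` lets it through. In the other direction, `substring_flag("that p3$t again")` misses the disguised term and `word_flag` catches it. The normalization is crude and will also rewrite legitimate digits, which is one reason simple filters still misfire in niche communities.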

News platform comment sections tell a different story. Trustpilot reviews for The Guardian show an average 3.1/5 stars, with users frustrated by over-filtering. A typical complaint: "I posted a factual comment about climate policy, and it was blocked for 'toxic language'." Conversely, The Washington Post’s implementation of Google’s Perspective API reduced toxic comments by 76% but increased moderator workload by 22% due to false positives. This trade-off shows how even advanced systems struggle to balance safety and free expression.
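The trade-off between catching toxic comments and creating false positives often comes down to where a score threshold is set. The sketch below uses made-up scores and labels to show the effect. The `SCORED` data and the `evaluate` helper are hypothetical, and nothing here calls or reproduces the Perspective API.

```python
# Hypothetical (toxicity_score, is_actually_toxic) pairs for eight comments.
SCORED = [
    (0.95, True), (0.85, True), (0.70, True), (0.55, True),
    (0.65, False), (0.40, False), (0.20, False), (0.10, False),
]


def evaluate(threshold: float) -> tuple[int, int]:
    """Return (toxic comments caught, legitimate comments wrongly flagged)."""
    flagged = [(score, toxic) for score, toxic in SCORED if score >= threshold]
    caught = sum(1 for _, toxic in flagged if toxic)
    false_positives = len(flagged) - caught
    return caught, false_positives


for threshold in (0.8, 0.6, 0.5):
    caught, false_positives = evaluate(threshold)
    print(f"threshold={threshold}: caught={caught}, false positives={false_positives}")
```

On this toy data, lowering the threshold from 0.8 to 0.6 catches one more toxic comment but also flags a legitimate one that a human moderator then has to review. That is the same pattern as the workload increase described above.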

Regulatory and Market Context

Regulatory pressures are reshaping both models. Telegram’s 800 million active users (per Q4 2025 transparency report) make it a target for global regulators. The EU’s Digital Services Act requires platforms to prove they can identify illegal content effectively. Telegram’s decentralized model has drawn criticism: EU officials warned in February 2026 that "decentralized moderation must demonstrate equivalent protection to centralized systems by Q3 2026 or face restrictions." Meanwhile, mainstream news platforms must publish transparency reports with 95% accuracy in illegal content detection under the same law.

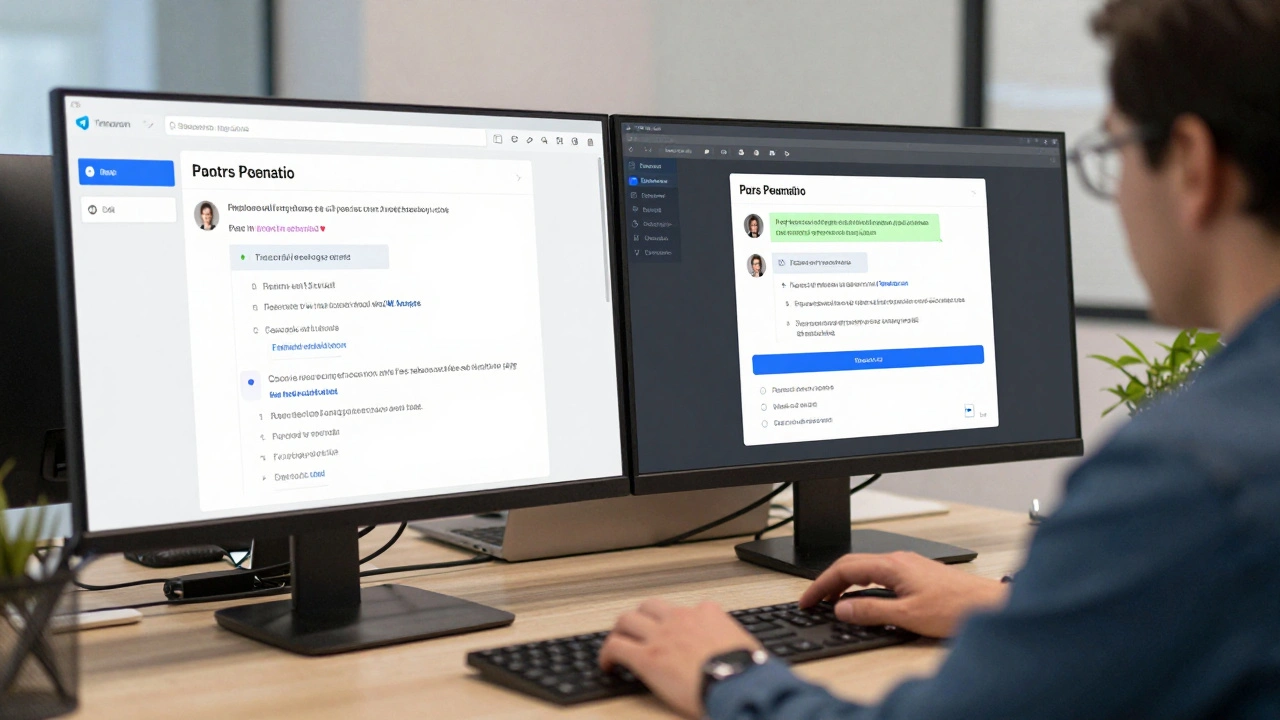

Market trends show convergence. In December 2025, Telegram announced "Editorial Verification" for news channels, allowing fact-checking by trusted publishers. The Washington Post launched a Telegram channel with community moderation features in January 2026. Gartner predicts that by 2027, "the distinction between social platform and news platform moderation will blur as both adopt hybrid human-AI approaches." Yet fundamental differences remain: Telegram still can’t moderate private chats proactively, while news platforms maintain pre-publication review as their core strength.

Which Model Fits Your Needs?

Choosing between Telegram and mainstream news moderation depends on your goals. If you run a community group with niche interests, Telegram’s flexible tools let you customize rules without technical expertise. Watchdog AI’s real-time monitoring works well for 5,000+ member groups, reducing moderator workload by 30%. However, if you need consistent, legally compliant content, as a news organization does, mainstream platforms’ editorial rigor is unmatched. The New York Times’ 120 hours of training for new editors ensures every piece meets strict standards before publication.

For most community managers, Telegram’s third-party tools offer the best balance of control and automation. However, if your audience expects professional journalism standards, as it would from a verified news channel, mainstream platforms’ pre-publication review provides the necessary safeguards. The key is understanding your priorities: flexibility for community building versus consistency for professional publishing.

Frequently Asked Questions

Does Telegram moderate private chats?

No. Telegram only scans public groups for illegal content. Private chats and secret chats remain private unless a user reports them. This design prioritizes user privacy but creates gaps in moderation: harmful content in private chats can go unchecked until reported.

How accurate is Watchdog AI for hate speech detection?

Watchdog AI claims 94% accuracy in detecting contextual hate speech by analyzing special characters and linguistic patterns. This is higher than traditional bots, which only detect exact wording matches with 87% accuracy. However, accuracy drops for low-resource languages like Swahili or Tagalog, where it falls to 72.1%.

Why do news platforms have higher moderation consistency than Telegram?

News platforms use centralized editorial teams with standardized guidelines. Strict style guides, like The Associated Press Stylebook, result in 98.7% consistency in moderation decisions. Telegram’s decentralized model relies on individual group admins, leading to 47% inconsistency in how similar communities handle rule violations.

Can Telegram handle real-time comment moderation better than news platforms?

Yes. ESET’s 2025 study found Telegram’s AI moderation tools identify contextual hate speech in comments 32% more effectively than news platforms’ systems. This is because Telegram processes content post-publication with AI, while news platforms often rely on slower human review for comments. However, news platforms excel at pre-publication review for articles, catching 99.4% of harmful content before it’s published.

What regulatory challenges does Telegram face?

The EU’s Digital Services Act requires platforms to prove effective illegal content detection. Telegram’s decentralized model makes compliance difficult, because regulators argue it can’t guarantee consistent enforcement. In February 2026, the EU warned Telegram must demonstrate equivalent protection to centralized systems by Q3 2026 or face restrictions. This pressure is pushing Telegram to adopt more centralized features like "Editorial Verification" for news channels.