For years, Telegram was known as the wild west of messaging. Founded by Pavel Durov, it offered end-to-end encryption only in optional "secret chats," yet promised that private and group chats would not be moderated. If you were a journalist or editor monitoring sources there, you assumed that what happened in a group chat stayed there, unseen by any moderator. That assumption is no longer safe. As of May 2026, the platform has quietly but significantly overhauled its policies. The old disclaimer stating that private messages are not subject to moderation? Gone. In its place is a clear directive: all areas of the app, including private communications, can be flagged for illegal content.

This shift matters deeply for newsrooms. You might encounter disturbing material while investigating a story, verifying sources, or monitoring public discourse. Knowing how to act quickly and correctly isn't just about compliance; it's about protecting your staff and ensuring that genuinely harmful material gets removed without exposing your operation to risk. Here is exactly how to navigate these new rules.

The Shift in Policy: What Changed in 2026?

To understand the current landscape, you have to look at what disappeared from the fine print. Historically, Telegram's FAQ explicitly stated that content in private and group chats remained between participants. This created a legal gray area where users felt shielded from accountability. Now, the FAQ section titled "There's illegal content on Telegram. How do I take it down?" confirms that every version of the app (Android, iOS, and Desktop) has "Report" buttons that flag violations to moderators.

This change reflects a broader trend in platform governance. Regulatory pressure across Europe and other regions has forced even privacy-focused platforms to adopt more robust takedown mechanisms. For a newsroom, this means the barrier to reporting is lower than before, but the responsibility to report accurately is higher. You aren't just clicking a button; you are triggering a review process that can result in account deletion or channel suspension.

Step-by-Step Reporting Mechanisms

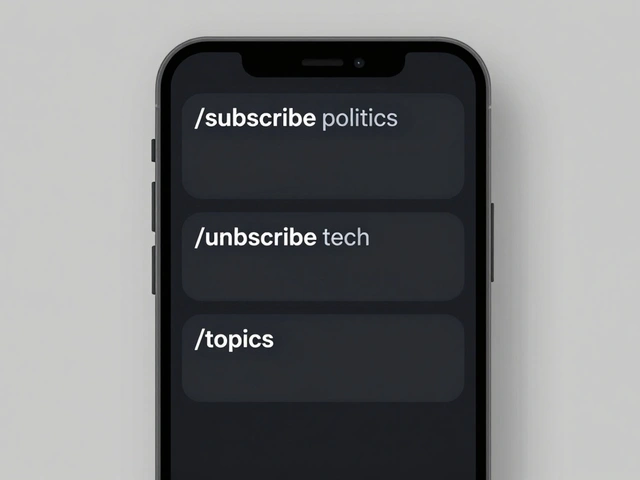

You need to know which tool to use based on your device and the type of content. Telegram offers multiple paths, and using the wrong one can delay action.

In-App Reporting (The Fastest Route)

- On Android: Tap the specific message you want to report. A menu will appear. Select "Report."

- On iOS: Press and hold the message. Choose "Report" from the pop-up options.

- On Desktop: Right-click directly on the message to access the context menu, then select "Report."

Reporting Channels or Groups

If the violation is systemic within a community rather than limited to a single message, report the entity itself. Navigate to the channel or group page, tap the name or profile picture at the top to open the info panel, then tap the three-dot menu ("...") and choose "Report." You will be asked to specify a reason, such as spam, violence, or pornography.

Email Reporting (For Complex Cases)

Sometimes the in-app tools don't capture the nuance of a violation, especially if you are documenting a pattern of behavior. In these cases, emailing Telegram's abuse team directly is more effective. Always include direct links (e.g., t.me/username) to make the moderators' job easier.
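If your team files many reports, a small script can keep those links consistent. The sketch below is a hypothetical Python helper, not a Telegram tool: it turns handles such as @username into t.me links (optionally pointing at a single post, assuming the public-post format t.me/username/message_id) and assembles a plain-text body you can paste into an email. The username check only approximates Telegram's rules (assumed here: 5 to 32 letters, digits, or underscores, starting with a letter).

```python
import re
from typing import List, Optional, Tuple

# Assumption: public usernames are 5-32 characters (letters, digits,
# underscores) and start with a letter. Adjust if Telegram's rules differ.
USERNAME_RE = re.compile(r"^@?([A-Za-z][A-Za-z0-9_]{4,31})$")


def to_tme_link(handle: str, message_id: Optional[int] = None) -> str:
    """Turn '@username' or 'username' into an https://t.me/ link.

    With message_id, link to one post in a public channel or group
    (assumed format: t.me/<username>/<message_id>).
    """
    match = USERNAME_RE.match(handle.strip())
    if match is None:
        raise ValueError(f"not a valid public username: {handle!r}")
    link = f"https://t.me/{match.group(1)}"
    if message_id is not None:
        link += f"/{message_id}"
    return link


def build_report_body(violation: str, items: List[Tuple[str, Optional[int]]]) -> str:
    """Assemble a plain-text report: the violation type, then one link per line."""
    lines = [f"Violation: {violation}", "", "Links:"]
    for handle, message_id in items:
        lines.append(f"- {to_tme_link(handle, message_id)}")
    return "\n".join(lines)


if __name__ == "__main__":
    # example_channel and another_group are placeholder handles.
    print(build_report_body("Incitement to violence",
                            [("@example_channel", 1234), ("another_group", None)]))
```

Links to posts in private channels use a different format and are deliberately not handled here; rejecting anything that doesn't look like a public username keeps malformed links out of your reports.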

| Type of Violation | Contact Email / Bot | Best Used For |
|---|---|---|
| General Illegal Content / CSAM | [email protected] | Broad reports of harm, including child sexual abuse material. |
| Child Abuse Takedowns Only | [email protected] | Specific requests focused solely on removing CSAM. |
| Copyright Infringement | [email protected] | Pirated media, unauthorized sticker sets, or stolen articles. |
| Scams & Impersonation | @NoToScam bot | Fraudulent accounts pretending to be officials or brands. |
| Harmful Search Terms | @SearchReport bot | Keywords used to find illegal content in specific regions. |

What Gets Removed? Defining "Illegal" on Telegram

Not everything you dislike qualifies as illegal content. Telegram distinguishes between subjective offense and objective illegality. Understanding this distinction prevents your reports from being ignored.

The platform actively removes and investigates several categories of material. These include Child Sexual Abuse Material (CSAM), which carries a zero-tolerance policy. Since 2018, Telegram has automatically checked public images against hash databases of banned CSAM. In 2024, they expanded this by incorporating hashes from international bodies like the Internet Watch Foundation. Between September and October 2024 alone, they processed over 17,500 new CSAM reports from Stichting Offlimits, banning all reported content immediately.

Other reportable categories include:

- Non-consensual intimate imagery: Sharing nude photos or videos without consent.

- Incitement to violence: Calls for physical harm against individuals or groups.

- Trading of illegal goods: Markets for drugs, weapons, or other prohibited items.

- Hate speech: Targeting people based on protected characteristics.

- Misinformation: False claims spread through public channels that pose significant societal risks.

Note that political disagreement or controversial opinions generally do not fall under "illegal content" unless they cross into incitement or hate speech. Focus your reports on clear violations of law or platform terms.

Protecting Your Source and Staff

One of the biggest fears for journalists is retaliation. Will the person you report know it was you? No. Telegram's system is designed to keep the identity of whoever files a report confidential. When you submit a report via the app or email, the subjects of the report (whether group admins, channel members, or individual users) are never told who filed the complaint. They only see the consequence, if one occurs.

However, anonymity doesn't mean invisibility. If you are part of a group and report another member, make sure you haven't tipped them off beforehand, and avoid discussing the report in the same chat. Also remember that Telegram's moderation team does not typically send confirmation emails for general reports. Lack of response does not mean lack of action; it may simply mean the case is still being processed internally.

Leveraging AI and Proactive Moderation

You are not fighting this battle alone. Telegram says it has built extensive moderation infrastructure: since 2015, it has combined user reports with machine learning, and in early 2024 it began using more advanced AI tools to scan for violations proactively.

This means that even if you don't report something, the system might catch it. Pavel Durov has publicly stated that Telegram removes millions of harmful posts and channels every day, and the platform reports blocking tens of thousands of groups daily. For newsrooms, this suggests that persistent violators may already be on Telegram's radar. Your report can add weight to an existing case, potentially speeding up takedowns of repeat offenders.

Best Practices for Newsroom Operations

To integrate this into your editorial workflow, consider these guidelines:

- Document Before Reporting: Take screenshots or save metadata before reporting. Once content is removed, it may display as "This channel/message cannot be displayed," leaving you without evidence for your story.

- Be Specific: When emailing [email protected], include the exact nature of the violation. Vague complaints get lost. Provide handles (@username) and direct links.

- Avoid Mass Reporting: Do not spam the abuse team with duplicate reports for the same content. Submit comprehensive, well-documented cases instead. Independent reports from different users who genuinely encounter a violation can help; flooding support lines with duplicates only slows them down.

- Train Your Team: Ensure all reporters and editors know the difference between DMCA (copyright) and Abuse (illegal/harmful) contacts. Using the wrong address delays resolution.
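The first guideline, documenting before reporting, is easier to follow consistently with a simple evidence log. The sketch below is a hypothetical Python example, assuming your team saves screenshots as local files: it appends one JSON line per capture with a UTC timestamp, the source link, and a SHA-256 hash of the file. The function names, file names, and fields are illustrative, not a newsroom or Telegram standard.

```python
import hashlib
import json
from datetime import datetime, timezone
from pathlib import Path


def sha256_of_file(path: Path) -> str:
    """Hash the file in chunks so large screen recordings don't fill memory."""
    digest = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def log_evidence(log_path: Path, screenshot: Path, source_link: str, note: str = "") -> dict:
    """Append one record per saved capture to a JSON Lines log (hypothetical layout)."""
    entry = {
        "captured_at_utc": datetime.now(timezone.utc).isoformat(),
        "source_link": source_link,
        "file": screenshot.name,
        "sha256": sha256_of_file(screenshot),
        "note": note,
    }
    # Append-only: earlier entries are never rewritten.
    with log_path.open("a", encoding="utf-8") as log:
        log.write(json.dumps(entry, ensure_ascii=False) + "\n")
    return entry
```

The hash lets you show later that a saved screenshot has not changed since it was logged; it does not by itself prove the captured content is authentic, so keep it alongside your usual verification notes.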

The evolution of Telegram’s policy shows a move toward greater transparency and accountability. Daily transparency reports on CSAM removal provide concrete data on the scale of these efforts. For newsrooms, these tools are resources for maintaining a safer digital environment and supporting ethical journalism practices.

Will the person I report know it was me?

No. Telegram keeps the identity of the reporter confidential. The reported user or admin will not receive any notification indicating who filed the report. They will only see if their content is removed or their account is restricted.

Can I report content in a private secret chat?

Secret chats on Telegram use end-to-end encryption, meaning Telegram cannot read the content. However, you can still report the user themselves for suspicious behavior. Note that Telegram cannot remove specific messages from secret chats because they do not have access to them. You should block the user and report their account handle.

What is the best way to report copyright infringement?

For copyright issues, such as pirated videos or stolen articles, use the dedicated email address [email protected]. Include details about the copyrighted work, the infringing link, and your contact information. Do not use the general abuse email for copyright matters.

Does Telegram moderate private group chats now?

Yes. As of 2026, Telegram's policies allow reporting of illegal content in private groups and chats. Secret chats are end-to-end encrypted, so Telegram cannot monitor or read them, but it does process reports of illegal activity in standard private groups and channels.

How long does it take for Telegram to remove illegal content?

Response times vary. Automated systems often remove obvious violations like CSAM instantly. Human-reviewed cases may take anywhere from a few hours to several days. Telegram does not guarantee specific turnaround times for non-emergency reports.