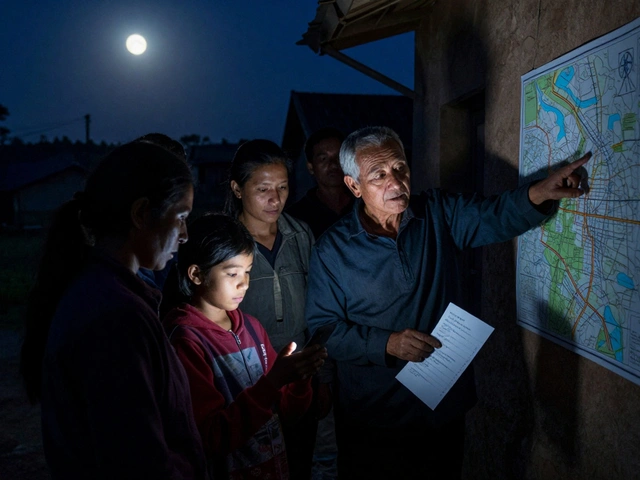

Large Telegram news groups can have tens of thousands of members. When a breaking story drops (say, a major earthquake or a political scandal), hundreds of messages flood in every minute. Some are real. Many are false. Without someone watching, these groups descend into chaos. That’s where volunteer moderators come in. They’re not paid. They don’t have fancy titles. But they’re the reason many of these groups still feel reliable, even when the news is spinning out of control.

Why Volunteer Moderators Are Necessary

Telegram groups can grow well beyond 5,000 members, and news channels can have unlimited subscribers. Official team members can’t be online 24/7; that’s not realistic. So groups turn to their own members: people who care enough to step up. These volunteers handle the day-to-day mess of deleting spam, flagging fake images, banning trolls, and verifying facts before a rumor spreads. According to a November 2024 analysis of more than 350 Telegram news channels, 68% now rely on volunteer moderators. Why? Because automated bots alone fail. A bot might flag a legitimate news link about Gaza because it contains the word “casualties.” A human can check the source, cross-reference it with UN OCHA, and decide whether it’s real. That’s the difference.

How Volunteer Moderator Programs Work

Successful programs don’t just recruit anyone. They follow a process:

- Recruitment - Groups look for members who’ve been active for months, not people who drop in to post once a week. Many use public applications or invite trusted users directly.

- Vetting - Candidates take a sample moderation test: they’re given a mix of real news, fake images, and inflammatory comments and asked to sort them. There are no formal background checks, but candidates are often asked about their past behavior in the community.

- Onboarding - A 7-day training covers 12 core skills, including how to use Telegram’s admin tools, how to defuse heated arguments, how to use fact-checking tools, and what to do when two moderators disagree.
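The vetting step above boils down to comparing a candidate’s sorting against an answer key. Here is a minimal sketch of how a group might score it. The label set, the item structure, and the 80% pass mark are all hypothetical choices for illustration, not part of any program described here.

```python
from dataclasses import dataclass

# Hypothetical label set for the sample test (not a standard taxonomy).
LABELS = {"real_news", "fake_image", "inflammatory"}


@dataclass
class SampleItem:
    item_id: str
    expected: str  # the correct label from the answer key

    def __post_init__(self) -> None:
        if self.expected not in LABELS:
            raise ValueError(f"unknown label: {self.expected}")


def score_candidate(items: list[SampleItem], answers: dict[str, str]) -> float:
    """Fraction of items the candidate sorted correctly; unanswered items count as wrong."""
    if not items:
        raise ValueError("the test has no items")
    correct = sum(1 for item in items if answers.get(item.item_id) == item.expected)
    return correct / len(items)


def passes(items: list[SampleItem], answers: dict[str, str], threshold: float = 0.8) -> bool:
    # The 80% pass mark is an illustrative assumption.
    return score_candidate(items, answers) >= threshold
```

Counting unanswered items as wrong keeps a candidate from gaming the test by skipping the hard cases.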

What Skills Do Volunteer Moderators Need?

You can’t just be a loud person who hates spam. Effective moderators have:

- Digital literacy - 98% of programs require basic research skills. Can you reverse-image search a photo? Can you find the original source of a news report?

- Emotional intelligence - 87% of programs require conflict-resolution training. You’ll get yelled at. You’ll be called a censor. You need to stay calm and stick to the rules.

- Subject knowledge - A Ukraine news group needs someone who understands the timeline of the war. A Gaza group needs someone who knows the difference between IDF statements and Hamas claims.

What Goes Wrong?

Not all programs succeed. Here’s where they break down:

- Inconsistent enforcement - 61% of groups struggle with this. One moderator bans someone for saying “corrupt government.” Another lets it slide. That erodes trust fast.

- Burnout - The average volunteer lasts just 4.2 months. Without recognition, people quit. Some groups now give out “Verified Moderator” badges on Telegram. Those groups see volunteers stay 3.2 times longer.

- Coordinated attacks - In November 2024, a group in Russia had three moderators’ accounts hacked at once. The attackers changed the group settings, deleted the rules, and flooded the group with pro-war propaganda. It took 11 hours to recover.

- Lack of guidelines - Only 29% of news groups have written moderation rules. If you don’t know what’s allowed, how can you enforce it?

Volunteers vs. Professional Moderators

Some groups pay for professional tools or staff instead. Watchdog AI’s enterprise plan costs $1,200/year, which is still cheaper than hiring a full-time moderator. Still, there’s a trade-off.

Volunteer-moderated groups have 43% fewer misinformation incidents than groups that rely only on bots. But they have 28% more inconsistent rule enforcement than teams with paid staff.

Professional moderators follow strict playbooks. Volunteers rely on gut feeling. That’s fine for routine cleanup. But in a crisis? It’s dangerous.

On November 3, 2024, a 12,000-member Ukraine news group delayed a critical safety alert by 22 minutes. Why? The moderators were debating whether the alert was “official enough” to post. A paid team would’ve pushed it through immediately.

Success Stories

Not all stories are grim. On November 10, 2024, a 15,000-member Gaza news group identified and removed 217 fake casualty reports in under three hours. Volunteers cross-checked every photo and name against UN OCHA, Reuters, and local hospitals. No bot could’ve done that; it took humans with local knowledge and verified sources.

Another group, in India, with 8,000 members, reduced hate speech by 70% after introducing a “feedback loop.” Every time someone was banned, they got a private message explaining why, with a link to the rule they broke. That simple change cut appeals by 80%.
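The feedback loop from the Indian group can be sketched as a function that builds the private ban notice. The rule IDs, rule texts, rules URL, and appeal contact are hypothetical placeholders. Delivery is out of scope; note that a Telegram bot can only message users who have previously started a chat with it.

```python
# Hypothetical rulebook: IDs, texts, and URL are placeholders for illustration.
RULES = {
    "R3": "No unverified casualty figures",
    "R5": "No hate speech",
}
RULES_URL = "https://example.org/group-rules"  # placeholder link to the written rules


def ban_notice(username: str, rule_id: str, appeal_contact: str) -> str:
    """Build the private message a banned user receives, citing the rule they broke."""
    if rule_id not in RULES:
        # Refuse to produce a notice that does not cite a written rule.
        raise KeyError(f"unknown rule {rule_id!r}; every ban must cite a written rule")
    return (
        f"Hi {username}, you were removed for breaking rule {rule_id}: "
        f"{RULES[rule_id]}.\n"
        f"Full rule text: {RULES_URL}#{rule_id.lower()}\n"
        f"To appeal, message {appeal_contact}."
    )
```

Raising on an unknown rule ID is a deliberate design choice: it makes "ban without a written reason" impossible in this workflow.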

What’s Changing in 2025?

Telegram updated its admin tools in November 2024. Now groups can pin moderation rules so everyone sees them, and delegation is better: admins can assign specific powers to different volunteers. Watchdog AI now integrates with Reuters and AP fact-checking APIs, so when a volunteer flags a claim, the bot can instantly pull up verified responses.

Experts predict that by mid-2025, 70% of large news groups will use AI to handle 80% of routine tasks (spam, duplicate posts, obvious scams). That leaves volunteers free to handle the hard stuff: interpreting context, spotting subtle bias, and deciding when a rumor is dangerous even if it’s not technically false.
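For groups that manage admins through their own bot, per-volunteer delegation like the kind described above can also be expressed with the Telegram Bot API’s `promoteChatMember` method. The sketch below only builds the request and sends nothing. The role names and presets are hypothetical; the `can_*` fields are the method’s real parameters. The calling bot must itself be an administrator allowed to add new admins (`can_promote_members`).

```python
import json

# Telegram Bot API endpoint pattern: https://api.telegram.org/bot<token>/<method>
API_URL = "https://api.telegram.org/bot{token}/{method}"

# Admin rights this sketch sets explicitly (real promoteChatMember parameters).
MANAGED_RIGHTS = (
    "can_delete_messages",
    "can_restrict_members",
    "can_pin_messages",
    "can_invite_users",
    "can_change_info",
    "can_promote_members",
)

# Hypothetical volunteer roles mapped to the rights each one needs.
ROLE_RIGHTS = {
    "spam_cleaner": {"can_delete_messages"},
    "rule_enforcer": {"can_delete_messages", "can_restrict_members"},
    "announcer": {"can_pin_messages"},
}


def promote_request(token: str, chat_id: int, user_id: int, role: str) -> tuple[str, str]:
    """Build (url, json_body) for promoteChatMember granting only the role's rights.

    Every managed right is sent explicitly, so anything the role does not need is False.
    No network request is made here.
    """
    granted = ROLE_RIGHTS[role]
    body = {"chat_id": chat_id, "user_id": user_id}
    body.update({right: right in granted for right in MANAGED_RIGHTS})
    url = API_URL.format(token=token, method="promoteChatMember")
    return url, json.dumps(body)
```

The resulting body can be POSTed with any HTTP client as `application/json`. Keeping each volunteer to the narrowest role also limits the damage if one account is compromised.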

Should You Start One?

If you run a Telegram news group with over 5,000 members and you’re tired of cleaning up lies every day, yes, you should. But don’t just grab the first person who volunteers.

Start small. Pick three people you trust. Give them clear rules. Train them for a week. Use Watchdog AI or a similar bot. Track how many false posts get caught. Measure retention. If your volunteers stick around past 90 days, you’re doing something right.
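The 90-day retention check can be computed from two lists of dates: when each volunteer joined and, if they quit, when they left. A minimal sketch, assuming the group keeps such records (the data layout and names here are hypothetical). Volunteers who joined less than 90 days ago are excluded, since they can’t have passed the mark yet.

```python
from datetime import date
from typing import Optional


def retention_rate(
    joined: dict[str, date],
    left: dict[str, date],
    today: date,
    days: int = 90,
) -> Optional[float]:
    """Share of volunteers who stayed at least `days`, among those who joined at least `days` ago.

    Volunteers missing from `left` are treated as still active.
    Returns None when nobody has been around long enough to measure.
    """
    eligible = [name for name, start in joined.items() if (today - start).days >= days]
    if not eligible:
        return None
    stayed = [
        name for name in eligible
        if (left.get(name, today) - joined[name]).days >= days
    ]
    return len(stayed) / len(eligible)
```

For example, with four volunteers of whom one joined too recently to count and one quit after 45 days, the rate is 2/3.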

And remember: recognition matters. A simple “Verified Moderator” badge on Telegram. A shout-out in your weekly update. A thank-you note. These cost nothing. But they keep people coming back.

Large Telegram news groups aren’t going away. Misinformation isn’t either. The people who step up to moderate them aren’t heroes. They’re just tired of seeing lies spread. And that’s enough to make a difference.

How many volunteer moderators does a large Telegram news group need?

Experts recommend at least 3 volunteer moderators for every 1,000 active members. For a group with 10,000 members, that means 30 moderators. But it’s not just about numbers-it’s about coverage. You need people awake in different time zones. If your group has members in Ukraine, India, and Brazil, you need moderators in each region to respond within 10 minutes.
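The headcount rule above is simple arithmetic; spreading those moderators across regions needs a policy. As an illustration only, this sketch assumes each region gets at least one moderator and the rest are split in proportion to membership using the largest-remainder method. The function names and region figures are hypothetical.

```python
import math


def moderators_needed(members: int, per_thousand: int = 3) -> int:
    """Apply the 3-per-1,000 rule of thumb, rounding up."""
    return math.ceil(members * per_thousand / 1000)


def allocate_by_region(total: int, members_by_region: dict[str, int]) -> dict[str, int]:
    """Give each region one moderator, then split the rest proportionally (largest remainder)."""
    regions = list(members_by_region)
    if total < len(regions):
        raise ValueError("need at least one moderator per region")
    population = sum(members_by_region.values())
    if population <= 0:
        raise ValueError("no members to allocate against")
    spare = total - len(regions)
    quotas = {r: spare * members_by_region[r] / population for r in regions}
    alloc = {r: 1 + math.floor(q) for r, q in quotas.items()}
    # Hand out the seats lost to rounding, largest fractional part first.
    leftover = total - sum(alloc.values())
    by_remainder = sorted(regions, key=lambda r: quotas[r] - math.floor(quotas[r]), reverse=True)
    for r in by_remainder[:leftover]:
        alloc[r] += 1
    return alloc
```

For the 10,000-member example with a hypothetical 6,000 / 3,000 / 1,000 split across Ukraine, India, and Brazil, this gives 30 moderators allocated 17 / 9 / 4. Proportional allocation is only one option; a group could instead weight regions by peak posting hours.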

Can Telegram bots replace volunteer moderators?

No. Bots are great for filtering spam, blocking known fake links, and flagging keywords. But they can’t understand context. A bot might ban a post saying “The hospital was bombed” because it contains “bombed.” A human can check if it’s from a verified source like the WHO. Volunteer moderators handle the gray areas bots can’t. The most effective groups use bots as assistants, not replacements.
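“Bots as assistants, not replacements” can be made concrete: a keyword hit routes a post to a human review queue instead of triggering an automatic ban. In this minimal sketch, the keyword list, the trusted-domain allowlist, and the queue names are hypothetical. A link to a trusted source only lowers the priority; a human still makes the call.

```python
from typing import Optional
from urllib.parse import urlparse

FLAG_WORDS = {"bombed", "casualties"}  # hypothetical keyword list
TRUSTED_DOMAINS = {"who.int", "unocha.org", "reuters.com"}  # hypothetical allowlist


def triage(text: str, link: Optional[str] = None) -> str:
    """Return 'allow', 'review', or 'review_urgent'. The bot never bans on its own."""
    words = {w.strip(".,!?\"'").lower() for w in text.split()}
    if not words & FLAG_WORDS:
        return "allow"
    if link:
        host = (urlparse(link).hostname or "").lower()
        # Match the domain itself or a true subdomain, not look-alikes such as who.int.evil.example.
        if any(host == d or host.endswith("." + d) for d in TRUSTED_DOMAINS):
            return "review"
    return "review_urgent"
```

Under this design, the “hospital was bombed” post from the example above lands in a queue for a volunteer to verify rather than being deleted.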

What’s the biggest mistake new volunteer programs make?

Not writing down the rules. If moderators don’t have clear, written guidelines, they make decisions based on personal bias. One might ban political opinions. Another might ignore hate speech. That creates chaos. Successful groups have a public document listing exactly what’s allowed, what’s not, and what happens if you break the rules.
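A written rulebook is easier to apply consistently when it also spells out the consequence for each offense. This sketch assumes, for illustration, that penalties escalate one step per repeat offense. The rule IDs, texts, and penalty ladders are hypothetical. Because every moderator consults the same table, two moderators facing the same post reach the same outcome.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    rule_id: str
    text: str
    penalties: tuple[str, ...]  # escalating: one step per repeat offense


# Hypothetical rulebook for illustration.
RULEBOOK = {
    "R1": Rule("R1", "No spam or advertising", ("delete", "ban")),
    "R2": Rule("R2", "Cite a source for breaking-news claims", ("warning", "24h mute", "ban")),
}


def consequence(rule_id: str, prior_offenses: int) -> str:
    """Look up the penalty for breaking `rule_id`, given the user's earlier offenses."""
    if prior_offenses < 0:
        raise ValueError("prior_offenses cannot be negative")
    rule = RULEBOOK[rule_id]
    # Past the end of the ladder, keep applying the harshest penalty.
    step = min(prior_offenses, len(rule.penalties) - 1)
    return rule.penalties[step]
```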

Do volunteer moderators get paid?

Almost never. Volunteer moderators in Telegram news groups are unpaid. Some groups offer small perks, like early access to reports, a custom emoji, or a “Verified Moderator” badge, but salaries are essentially unheard of. That’s why burnout is so common: recognition, not money, keeps them going.

How do you prevent moderator abuse?

Use transparency. All moderation actions should be logged in a private channel visible to other admins. If someone bans a user, they must leave a note explaining why. Some groups rotate moderation shifts weekly so no one has too much power. Others require two moderators to approve a ban. And always, always let users appeal decisions-privately, with a clear process.
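Two of the safeguards above, a logged note on every ban and two moderators approving it, can be sketched as a small in-memory class. This is a hypothetical illustration. In practice, a group would post these log lines to its private admin channel and call the Bot API’s `banChatMember` only once a ban actually executes.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class BanRequest:
    target: str
    reason: str  # the required note explaining the ban
    requested_by: str
    approvals: set[str] = field(default_factory=set)


class ModerationLog:
    """Hypothetical two-approval ban workflow with an append-only log."""

    def __init__(self, required_approvals: int = 2) -> None:
        self.required = required_approvals
        self.entries: list[str] = []
        self.pending: dict[str, BanRequest] = {}
        self.banned: set[str] = set()

    def _log(self, message: str) -> None:
        stamp = datetime.now(timezone.utc).isoformat(timespec="seconds")
        self.entries.append(f"{stamp} {message}")

    def request_ban(self, moderator: str, target: str, reason: str) -> None:
        if not reason.strip():
            raise ValueError("a ban needs a written reason")
        request = BanRequest(target, reason, moderator, {moderator})
        self.pending[target] = request
        self._log(f"{moderator} requested ban of {target}: {reason}")
        self._maybe_execute(request)

    def approve(self, moderator: str, target: str) -> None:
        request = self.pending[target]  # KeyError if no such pending request
        if moderator in request.approvals:
            raise ValueError("the same moderator cannot approve twice")
        request.approvals.add(moderator)
        self._log(f"{moderator} approved ban of {target}")
        self._maybe_execute(request)

    def _maybe_execute(self, request: BanRequest) -> None:
        if len(request.approvals) >= self.required:
            self.banned.add(request.target)
            del self.pending[request.target]
            self._log(f"ban of {request.target} executed with {len(request.approvals)} approvals")
```

Counting the requester as the first approval means a single moderator can start a ban but never finish one alone.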

Is Telegram officially supporting volunteer moderators?

Telegram doesn’t run or certify volunteer programs. But they’ve added tools to help. Since November 2024, admins can pin moderation rules, assign granular permissions, and use improved delegation features. CEO Pavel Durov has said they’re empowering community leaders-but they’re not stepping in to manage them. The responsibility stays with the group.