Imagine a news channel on Telegram that looks perfectly normal. It has thousands of followers, a professional logo, and posts updates every hour. But behind the scenes, the "people" commenting and sharing the posts aren't actually individuals. They are a synchronized army of fake accounts, some managed by a single person and others by a bot, all working together to push a specific political narrative or a conspiracy theory. This isn't just a few trolls in a basement; it's a well-resourced operation designed to trick you into believing a lie is a popular opinion. This is what experts call Coordinated Inauthentic Behavior (CIB), and in the wild west of Telegram news communities, it's becoming a massive problem.

The core issue with CIB is that it doesn't rely on a single "bad" account. Instead, it uses a network. By mixing real people with fake personas, these operators create an illusion of organic support. If you see ten different accounts all praising the same fringe news article within five minutes, you're more likely to trust that article. However, when AI steps in, these patterns, which look natural to a human, become glaringly obvious.

What exactly is Coordinated Inauthentic Behavior?

To get a handle on this, we first need to define the enemy. Coordinated Inauthentic Behavior is a tactic where groups of accounts work together to mislead people about who they are and what they believe. Unlike a simple bot that spams a link, CIB is about orchestration. It's the difference between one person shouting in a room and a hundred people, all paid by the same boss, pretending they just happened to arrive at the same time to say the exact same thing.

CIB often involves the creation of elaborate personas. Operators might pose as independent journalists, academic researchers, or regional editors. They build fake news websites that look legit, then use Telegram to drive traffic to those sites. The goal isn't just to spread a lie, but to manipulate the public debate around critical societal issues. For instance, during the 2024 U.S. election, researchers found Russian-affiliated media being systematically pushed through these types of coordinated networks on both Telegram and X.

Why Telegram is a Playground for Manipulation

Telegram is a goldmine for these operations because of its unique features. While the public channels are where the propaganda lives, the coordination happens in the shadows. Telegram offers secret chats with self-destructing messages. This allows malicious actors to send orders, share pre-written scripts, and train their "agents" without leaving a digital paper trail for investigators.

Furthermore, the platform's lenient moderation compared to Facebook or Instagram makes it easier to scale these networks. In Moldova, for example, networks were discovered using fake accounts managed through Telegram to target Russian-speaking audiences. They didn't just use text; they used Generative Adversarial Networks (GANs) to create hyper-realistic profile photos for accounts that don't actually belong to real people. This makes it nearly impossible for a regular user to tell the difference between a real person and an AI-generated ghost.

How AI Detects the "Invisible" Coordination

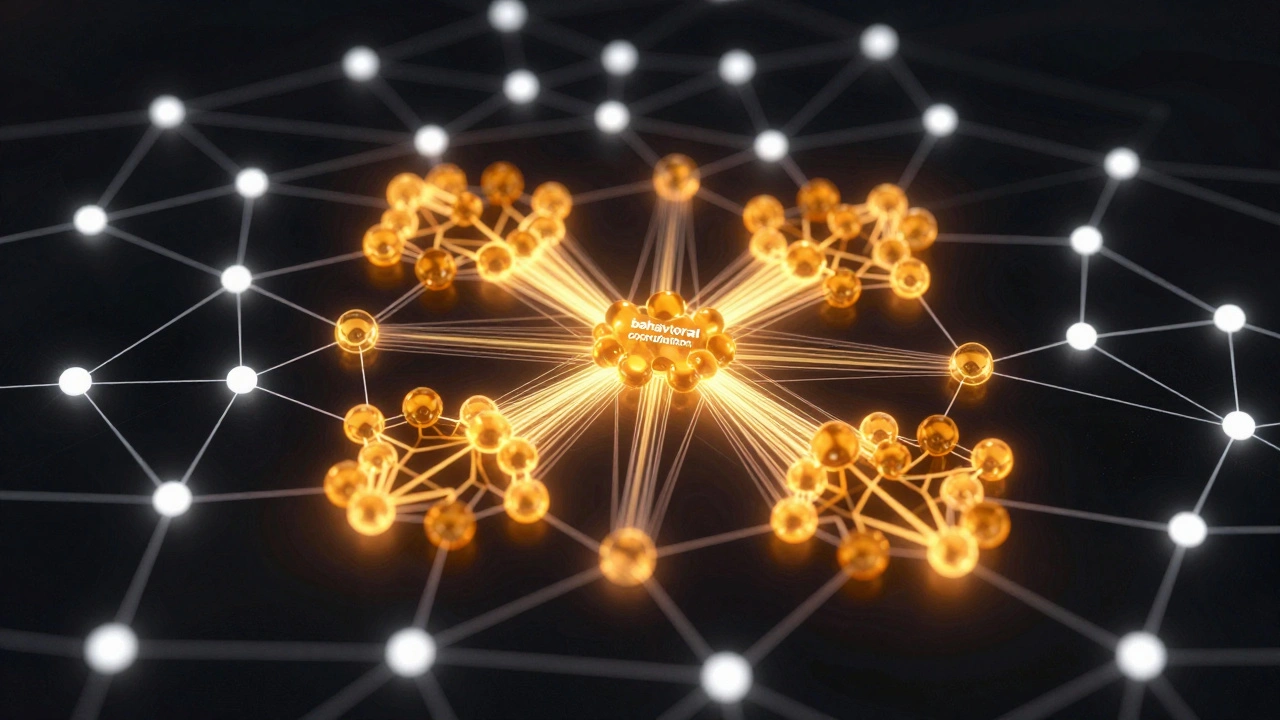

If you only look at one account, it might look fine. It posts occasionally, likes a few things, and follows a few people. The secret to catching CIB is to stop looking at individuals and start looking at the network. This is where AI-driven network analysis comes into play. Instead of checking "Is this account a bot?", AI asks "Are these fifty accounts behaving like a single organism?"

Modern detection frameworks use similarity networks. These AI models track how content moves across a community. If a specific, low-credibility news link is shared by twenty accounts within a tight window of time, and those twenty accounts always share the same set of five other links, the AI flags this as a coordinated cluster. This is called behavioral coordination. It's less about *what* they are saying and more about *how* they are saying it and *when* they are saying it.

| Feature | Traditional Detection | AI Network Analysis |
|---|---|---|
| Focus | Individual account attributes (e.g., post frequency) | Community-wide sharing patterns |
| Detection Method | Keyword flagging and bot-like timing | Similarity networks and temporal clusters |
| Evasion Resistance | Low (Easy to mimic human timing) | High (Hard to hide collective movement) |
| Scope | Single platform | Cross-platform correlation |
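The co-sharing idea behind similarity networks can be sketched in a few lines of Python. This is a minimal illustration, not the method of any specific detection framework: the function names (`co_share_edges`, `coordinated_clusters`), the five-minute window, and the three-link threshold are all assumptions chosen for the example, and the input is assumed to be a list of `(account, link, unix_timestamp)` tuples already collected from public channels.

```python
from collections import defaultdict
from itertools import combinations


def co_share_edges(shares, window_s=300, min_shared_links=3):
    """Build a weighted similarity network from (account, link, timestamp) tuples.

    Two accounts get an edge when they shared at least `min_shared_links`
    distinct links within `window_s` seconds of each other.
    All thresholds here are illustrative assumptions.
    """
    by_link = defaultdict(list)
    for account, link, ts in shares:
        by_link[link].append((ts, account))

    # For every pair of accounts, collect the distinct links they co-shared.
    pair_links = defaultdict(set)
    for link, posts in by_link.items():
        # Pairwise comparison is quadratic per link; fine for a sketch.
        for (t1, a1), (t2, a2) in combinations(posts, 2):
            if a1 != a2 and abs(t2 - t1) <= window_s:
                pair_links[frozenset((a1, a2))].add(link)

    return {
        pair: len(links)
        for pair, links in pair_links.items()
        if len(links) >= min_shared_links
    }


def coordinated_clusters(edges, min_size=3):
    """Return connected components of the similarity network as sets of accounts."""
    graph = defaultdict(set)
    for pair in edges:
        a, b = tuple(pair)
        graph[a].add(b)
        graph[b].add(a)

    seen, clusters = set(), []
    for start in graph:
        if start in seen:
            continue
        component, stack = set(), [start]
        while stack:
            node = stack.pop()
            if node in component:
                continue
            component.add(node)
            stack.extend(graph[node] - component)
        seen |= component
        if len(component) >= min_size:
            clusters.append(component)
    return clusters
```

A cluster returned here is only a candidate for review: it shows that accounts repeatedly moved together, not who controls them.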

The Multi-Layered Approach to Spotting Fakes

No single AI tool can stop all CIB because the attackers are always evolving. To actually protect a news community, a multi-layered defense is required. You can't just rely on a "bot detector"; you need a system that combines several different types of analysis.

- Network Analysis: Identifying suspicious sharing patterns and clusters of accounts that always move in tandem.

- Behavioral Analysis: Detecting temporal coordination, such as when a group of accounts suddenly "wakes up" and floods a topic at the exact same second.

- Content Analysis: Using semantic AI to see if the phrasing is too similar across different accounts, suggesting they are using a shared script.

- Account Analysis: Looking for markers like rapid name changes or GAN-generated profile images.

- Cross-Platform Correlation: Checking if the same coordinated patterns are appearing on X, Facebook, and Telegram simultaneously.
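To make the content-analysis layer more concrete, here is a rough sketch of how near-identical phrasing across accounts might be surfaced. It deliberately swaps in a much simpler technique than "semantic AI": Jaccard similarity over three-word shingles, which only catches lexical overlap and would miss paraphrased scripts. The function names, the `posts` input shape (account name mapped to post text), and the 0.6 threshold are assumptions made for illustration.

```python
import re
from itertools import combinations


def shingles(text, k=3):
    """Return the set of k-word shingles of a text, ignoring case and punctuation."""
    words = re.findall(r"\w+", text.lower())
    if len(words) < k:
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def jaccard(a, b):
    """Jaccard similarity of two sets; defined as 0.0 when both are empty."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def near_duplicate_pairs(posts, threshold=0.6):
    """posts: dict mapping account name -> post text (hypothetical input shape).

    Returns (account_1, account_2, similarity) for every pair whose posts
    overlap at or above `threshold`.
    """
    sets = {account: shingles(text) for account, text in posts.items()}
    flagged = []
    for (acc1, s1), (acc2, s2) in combinations(sets.items(), 2):
        score = jaccard(s1, s2)
        if score >= threshold:
            flagged.append((acc1, acc2, round(score, 2)))
    return flagged
```

In practice a lexical check like this would be one input among several; an embedding-based comparison would be needed to catch scripts that have been reworded.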

For example, in the case of Macedonian fake-news empires, operators used multiple inauthentic accounts to push for-profit narratives. By analyzing the traffic, researchers could see that these accounts weren't just sharing news; they were strategically driving users toward a centralized resource designed to make money from ad revenue. The AI didn't just find bots; it found a business model for misinformation.

The Cat-and-Mouse Game: Evasion and Evolution

As AI gets better at detecting CIB, the operators get better at hiding. We are seeing a shift toward "hybrid networks." Instead of relying entirely on bots, they hire real people, sometimes in "click farms," to manage the accounts. This adds a layer of human randomness that can trick basic AI models. They also use machine learning themselves to tune their posting schedules so they stay under detection thresholds.

One of the biggest challenges is distinguishing between legitimate news coordination and inauthentic behavior. For instance, a group of passionate activists might all share the same news story at once because they genuinely care about the cause. That's organic coordination. CIB is *inauthentic* coordination. The difference lies in the identity of the accounts and the source of the content. This is why semantic analysis of source credibility is so vital. If the "activists" are all using profiles created three days ago with AI-generated faces, it is very likely a CIB operation.

What's Next for Telegram News Communities?

The future of fighting CIB isn't just better code; it's better cooperation. Because these networks operate across platforms, a company like Meta can find a network on Facebook and share that intelligence with other platforms to help them spot the same patterns on Telegram. Without this cross-platform intelligence, the operators just move their base of operations to whichever platform has the weakest defenses.

We can expect AI to move toward real-time monitoring. Instead of analyzing a campaign after it has already swayed an election, future systems will likely flag coordinated spikes in real time, allowing moderators to pause the spread of a narrative until the authenticity of the accounts can be verified. The goal isn't to stop people from sharing news, but to ensure that the "conversation" isn't being faked by a coordinated machine.
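As a rough illustration of what flagging a spike in real time could look like, the sketch below compares the number of shares in the latest interval against a rolling baseline. It is an assumption-heavy toy, not a description of any deployed system: the `SpikeMonitor` class, the 30-interval history, the z-score threshold of 3, and the minimum count of 10 are all invented for the example.

```python
from collections import deque
from statistics import mean, pstdev


class SpikeMonitor:
    """Flag intervals whose share count is far above the recent baseline.

    All parameters are illustrative assumptions, not recommended values.
    """

    def __init__(self, history=30, z_threshold=3.0, min_count=10):
        self.counts = deque(maxlen=history)  # share counts of past intervals
        self.z_threshold = z_threshold
        self.min_count = min_count

    def observe(self, count):
        """Record the latest interval's share count; return True if it is a spike."""
        is_spike = False
        # Require some history and a minimum volume before judging anything.
        if len(self.counts) >= 5 and count >= self.min_count:
            baseline = mean(self.counts)
            spread = pstdev(self.counts) or 1.0  # avoid dividing by zero
            is_spike = (count - baseline) / spread >= self.z_threshold
        # Note: spikes are also added to the history, which raises the baseline.
        self.counts.append(count)
        return is_spike
```

A spike on its own says nothing about authenticity, since genuine breaking news produces the same shape; it only tells moderators where to look first.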

Can a regular user spot Coordinated Inauthentic Behavior?

It's difficult, but there are clues. Look for a group of accounts that all post the same links or phrases within a short time. Check their profile pictures; if they look a bit too "perfect" or have weird artifacts around the ears or background, they might be GAN-generated. Also, look at their history: if an account only posts about one specific political topic and has no other interests, it's a red flag.

Why is Telegram specifically targeted for CIB?

Telegram's combination of massive public channels and highly secure, private, self-destructing chats makes it ideal. Operators can broadcast propaganda to millions while coordinating their secret movements in a way that is very hard for law enforcement or platform moderators to track.

Does AI always get it right when flagging CIB?

No. There is always a risk of "false positives." For example, a viral news story might naturally cause a spike in sharing that looks like coordination. This is why experts use a multi-layered approach, combining network analysis with account verification and semantic content checks, to reduce errors.
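One simple way to picture how layering reduces false positives is to require agreement between independent signals before anything is flagged. The sketch below assumes each layer has already produced a score between 0 and 1 for a cluster; the `ClusterSignals` fields, the 0.7 per-layer threshold, and the two-layer minimum are hypothetical choices for illustration only.

```python
from dataclasses import dataclass, astuple


@dataclass
class ClusterSignals:
    """Per-layer suspicion scores in the range 0..1 (hypothetical inputs)."""
    network: float   # strength of co-sharing links
    timing: float    # how tightly synchronized the posts are
    content: float   # how similar the phrasing is
    account: float   # account-level markers such as very recent creation


def needs_review(signals, layer_threshold=0.7, min_layers=2):
    """Flag a cluster only when at least `min_layers` layers independently agree."""
    agreeing = sum(score >= layer_threshold for score in astuple(signals))
    return agreeing >= min_layers
```

Under these assumptions, a genuinely viral story that scores high on timing alone would not be flagged, while a cluster that is synchronized, scripted, and built from fresh accounts would be sent to a human reviewer.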

What are GANs and how are they used in these networks?

Generative Adversarial Networks (GANs) are a type of AI that can create realistic images from scratch. In CIB operations, they are used to create profile photos for fake accounts. Since these faces don't belong to real people, they don't appear in reverse image searches, making the fake account look like a unique, real individual.

Is CIB the same as a botnet?

Not exactly. A botnet is a technical collection of compromised computers. CIB is a broader strategy. CIB can use bots, but it also uses real people paid to post, fake personas managed by humans, and a mix of both. It's about the *behavior* of the group, regardless of whether the account is a bot or a human.

Next Steps for Community Admins

If you run a Telegram news community and want to protect it, don't just rely on a single bot. Start by diversifying your moderation strategy. Use tools that flag rapid-fire joining of new members from similar regions. Encourage your community to report suspicious patterns rather than just single posts. Most importantly, stay updated on cross-platform trends; if a specific narrative is being flagged as a CIB operation on other platforms, be on high alert for it appearing in your channels.
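For admins who want a feel for what a rapid-join flag involves, here is a minimal sliding-window counter. It covers only the timing part of the advice (it knows nothing about member regions), and how join events are obtained from Telegram is left out entirely. The `JoinBurstDetector` name and the default limits are assumptions for the example, and timestamps are assumed to arrive in non-decreasing order.

```python
from collections import deque


class JoinBurstDetector:
    """Flag when more than `max_joins` members join within `window_s` seconds.

    Default limits are illustrative; a sensible value depends on channel size.
    """

    def __init__(self, window_s=60, max_joins=20):
        self.window_s = window_s
        self.max_joins = max_joins
        self.joins = deque()  # timestamps of recent joins, oldest first

    def record_join(self, timestamp):
        """Record one join (unix seconds); return True if the window is over the limit."""
        self.joins.append(timestamp)
        # Drop joins that have fallen out of the sliding window.
        while self.joins and timestamp - self.joins[0] > self.window_s:
            self.joins.popleft()
        return len(self.joins) > self.max_joins
```

A burst of joins can also follow a legitimate mention by a larger channel, so a flag like this is better treated as a prompt to look closer than as grounds for removal.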