Telegram launched its first AI-powered summary feature in January 2026, promising to help users skim through long posts faster. But behind the convenience lies a quiet crisis: accuracy and bias are slipping through the cracks, and no one’s checking. The feature, powered by a decentralized network called Cocoon, runs on the TON blockchain and claims to protect your privacy. But privacy doesn’t fix wrong facts. And right now, the feature is producing some dangerously misleading summaries.

How Telegram’s AI Summaries Actually Work

Telegram’s AI summaries appear at the top of long channel posts. Tap them, and you get a condensed version, usually under 100 words. It’s designed for people scrolling through news, finance, or tech channels. The system uses open-source AI models running on Cocoon Network, a decentralized compute network where users earn TON tokens for lending their GPU power. No data leaves your device unencrypted. That’s the privacy win.

But here’s the catch: Telegram doesn’t tell you which model it’s using. Not the name, not the training data, not the version. You can’t look it up. You can’t verify it. The company says the feature is optimized for speed and privacy, not transparency. According to its own technical notes, summaries generate in about 1.8 seconds per 1,000 words. That’s fast. But speed doesn’t mean correctness.

The Accuracy Problem Is Real

Users are noticing errors. In Reddit threads and Trustpilot reviews, people report critical mistakes in financial summaries, programming tutorials, and medical advice. One user, u/DeveloperDave, found three out of five code summaries contained wrong syntax that would break the actual program. Another user read a summary of a health article about insulin dosing, and the AI had cut out the warning about hypoglycemia.

Independent tests by MIT’s computational linguistics team found that similar open-source models used in decentralized systems have 15-22% factual error rates when handling complex news. Telegram hasn’t released any accuracy benchmarks of its own. Compare that to WhatsApp’s Meta AI, which publishes quarterly reports showing 82% accuracy. An 18% error rate sits inside that same range, but at least the number is public. Telegram doesn’t even try.
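
To get a feel for what a 15-22% error rate means in daily use, here is a rough back-of-the-envelope sketch in Python. It assumes every summary is wrong independently with the same probability, which is a simplification and not something Telegram or the MIT tests have confirmed. The function name is made up for illustration.

```python
def chance_of_at_least_one_error(error_rate: float, summaries_read: int) -> float:
    """Probability that at least one of `summaries_read` summaries has a factual error.

    Assumption: each summary is wrong independently with probability `error_rate`.
    This is a simplification, not a measured property of Telegram's system.
    """
    if not 0.0 <= error_rate <= 1.0:
        raise ValueError("error_rate must be between 0 and 1")
    if summaries_read < 0:
        raise ValueError("summaries_read must not be negative")
    # P(at least one error) = 1 - P(every summary is correct)
    return 1.0 - (1.0 - error_rate) ** summaries_read


if __name__ == "__main__":
    # The two ends of the 15-22% range quoted above, over ten summaries.
    for rate in (0.15, 0.22):
        p = chance_of_at_least_one_error(rate, 10)
        print(f"error rate {rate:.0%}: {p:.0%} chance of at least one bad summary in 10")
```

Under that assumption, someone who reads ten summaries has roughly an 80% to 92% chance of hitting at least one with a factual error.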

Even worse, accuracy drops sharply for non-English content. Over 40% of users on Telegram’s feedback channel said they’d use the feature more if summaries in Spanish, Arabic, or Hindi improved by just 20%. Right now, those summaries often miss cultural context, mistranslate idioms, or flatten nuanced arguments into oversimplified claims.

Bias Isn’t an Accident: It’s Built In

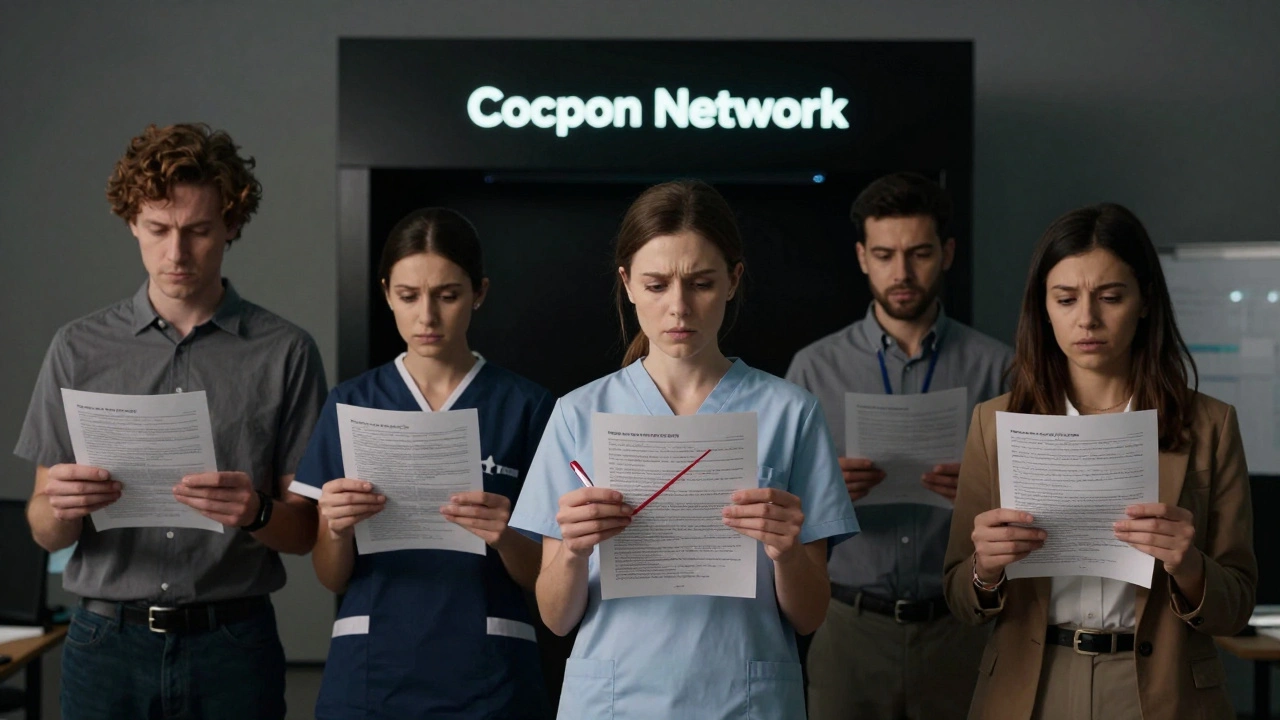

Privacy-focused doesn’t mean unbiased. Cocoon’s decentralized structure means anyone with a GPU can contribute to training or running the model. That sounds democratic. In practice, it’s chaotic. There’s no central team reviewing data quality. No one filtering out propaganda, extremist rhetoric, or skewed political framing.

Users report systematic bias. A political article from a conservative outlet got summarized to omit key quotes from right-leaning commentators. A report on immigration policy left out data from minority communities entirely. Trustpilot reviews show only 32% of users trust the summaries for opinion-heavy or social justice topics.

Dr. Elena Rodriguez from Stanford’s Center for AI Safety says this is the hidden risk of decentralized AI: “You lose control over training data, and suddenly, bias isn’t a bug, it’s a feature of the network’s design.” If the people running the nodes tend to share certain views, the model learns to reflect them. And since Telegram won’t disclose the data sources, you can’t even spot the pattern.

Who’s Affected the Most?

It’s not just casual users. Journalists, researchers, and small business owners rely on Telegram for real-time updates. A financial advisor using AI summaries to track market trends might miss a key earnings warning. A nonprofit worker summarizing policy changes for their community could accidentally spread misinformation.

Enterprise adoption is low. Only 12% of business accounts use the feature, according to Telegram’s own data. Why? Because professionals can’t risk errors. If your client depends on you to get the facts right, you can’t trust a black box.

Even Telegram’s user base is divided. While 38% enabled the feature in the first two weeks, engagement dropped to 22% among those who encountered at least one bad summary. People aren’t leaving the app. They’re just turning off AI summaries.

What’s Being Done? (Not Much)

Telegram’s response? A “Summary Feedback Program.” You can tap a thumbs-up or thumbs-down button. That’s it. No explanations. No transparency. No way to report *why* a summary is wrong. Support replies are automated: “Please use in-app feedback.” Human agents take over 58 hours to respond.

There’s talk of “bias detection modules” coming by April 2026. But no one knows what that means. Will it flag political slant? Will it cross-check facts against trusted sources? Will it let users see which parts were cut? Telegram hasn’t said.

Meanwhile, the EU’s AI Act, which entered into force in August 2024 and phases in its obligations through 2027, requires clear disclosure of automated content processing. Telegram’s current setup doesn’t meet that standard. No one’s been fined yet, but it’s only a matter of time.

What You Can Do Right Now

You can’t turn off AI summaries for specific channels. You can’t tweak the model. But you can protect yourself:

- Always check the original post before acting on a summary.

- Look for missing context, especially names, numbers, or sources.

- Compare summaries from different channels on the same topic. If they contradict each other, the AI is guessing.

- Use the feedback button, even if it feels pointless. More reports = more pressure to fix it.

- For critical info (health, finance, legal), don’t rely on AI at all.
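
The second tip, looking for missing names, numbers, or sources, can be partly automated. The sketch below is one rough way to do it in Python, assuming you have pasted the original post and the summary in as plain text. The function name and regular expressions are illustrative only, and nothing here uses a Telegram API. The name matching is a crude heuristic that will miss single-word names and flag some ordinary capitalized phrases.

```python
import re

# Digits with optional thousands/decimal separators and an optional percent sign.
NUMBER_PATTERN = re.compile(r"\d+(?:[.,]\d+)*%?")
# Two or more consecutive capitalized words: a crude stand-in for names and sources.
NAME_PATTERN = re.compile(r"[A-Z][a-z]+(?: [A-Z][a-z]+)+")


def missing_details(original: str, summary: str) -> dict[str, list[str]]:
    """List numbers and name-like phrases that appear in the post but not in the summary."""
    # Compare extracted tokens rather than substrings, so "5" is not "found" inside "15".
    missing_numbers = set(NUMBER_PATTERN.findall(original)) - set(NUMBER_PATTERN.findall(summary))
    summary_lower = summary.lower()
    missing_names = {
        name
        for name in NAME_PATTERN.findall(original)
        if name.lower() not in summary_lower
    }
    return {"numbers": sorted(missing_numbers), "names": sorted(missing_names)}


if __name__ == "__main__":
    # Made-up sample text, not a real post or a real Telegram summary.
    post = (
        "Acme Corp cut its 2026 revenue forecast by 12% on Tuesday. "
        "Analyst Jane Doe warned that margins could fall below 8%."
    )
    summary = "Acme Corp lowered its 2026 revenue forecast."
    print(missing_details(post, summary))
```

For the sample post it flags `12%`, `8%`, and `Analyst Jane Doe` as absent from the summary. A hit doesn’t prove the summary is wrong, only that something specific was left out and the original deserves a look.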

Some power users on Reddit have started cross-referencing summaries with verified news sites. One group of 147 users reported a 65% drop in errors when they did this. It’s not perfect, but it’s the only defense left.

The Bigger Picture

Telegram’s AI summaries are a gamble. They’re betting that users will trade accuracy for privacy. But privacy without truth isn’t freedom. It’s isolation. If the summaries keep getting things wrong, especially on politics, health, and finance, they won’t just be useless. They’ll be dangerous.

Competitors like WhatsApp and Signal are moving slower, but they’re being honest about their limits. Telegram is pushing ahead with a system no one can audit. That’s not innovation. It’s negligence dressed up as privacy.

By Q4 2026, Telegram promises “near-human accuracy.” But without transparency, that’s just a slogan. And slogans don’t stop misinformation. Only accountability does.

Are Telegram AI summaries safe to trust for news?

No, not without verification. Telegram hasn’t published error rates for its summaries, but independent tests of similar open-source models found 15-22% factual error rates on complex news. Users report that summaries often omit key context, especially in non-English content or opinion-based posts. Always check the original source before believing or sharing a summary.

Why doesn’t Telegram show how accurate its AI is?

Telegram claims its AI runs on a decentralized network called Cocoon, which prioritizes privacy over transparency. They haven’t released any accuracy metrics, training data sources, or model details. This makes independent verification impossible. Competitors like WhatsApp publish quarterly accuracy reports. Telegram does not.

Do Telegram AI summaries have political bias?

Yes, users report systematic bias, especially in political and social justice topics. Because the AI is trained on data from a decentralized network with no quality control, it often omits minority viewpoints or downplays opposing perspectives. One study found summaries of political articles frequently excluded key conservative or liberal quotes, depending on the dominant views of the node operators.

Can I turn off AI summaries on Telegram?

Not for specific channels. There’s no toggle to disable summaries per channel or per user. You can only disable the feature entirely in Settings > Chat Settings > AI Summaries. But even then, you might still see summaries on posts you didn’t request. The only reliable way to avoid them is to avoid long posts entirely or always check the original text.

Will Telegram fix the accuracy problems?

Telegram says it will improve accuracy by Q4 2026 and is testing bias detection modules. But without releasing benchmarks, training data, or third-party audits, there’s no way to verify these claims. The only pressure coming from users is through feedback buttons, which have no public impact. Until Telegram opens its system to independent review, accuracy improvements remain promises, not guarantees.

What’s Next?

If you’re using Telegram for anything important-work, research, or staying informed-you need to treat AI summaries like weather forecasts: useful for a general idea, but never a substitute for the real thing. The next big update might fix some errors. Or it might make them worse. Without transparency, you’re guessing.

The real question isn’t whether Telegram’s AI works. It’s whether you’re willing to accept a system that hides its flaws behind a banner of privacy. In a world drowning in misinformation, the most dangerous tool isn’t the one that lies. It’s the one that makes you think it’s telling the truth.