Imagine scrolling through dozens of Telegram channels, trying to keep up with breaking news, political shifts, and tech updates. For most people, it's a chaotic mess of notifications. But what if you could automatically sort every incoming message into a neat folder? That's where Natural Language Processing (NLP), the branch of artificial intelligence that helps computers understand, interpret, and manipulate human language, comes in. By using NLP news classification, you can turn a noisy feed into a structured database without lifting a finger.

Whether you're a researcher monitoring public sentiment or a power user organizing a massive network of news bots, the goal is the same: moving from manual reading to automated intelligence. While it sounds like magic, it's actually a structured pipeline of data cleaning and mathematical modeling. Let's break down how you actually build this system.

The Core Pipeline for News Sorting

You can't just feed raw Telegram messages into an AI and expect a perfect result. The data is too messy. To get accurate categories, you need to follow a specific four-step workflow.

First, you have Data Collection. This is where you gather your messages. If you're building a supervised model, you'll need a labeled dataset: basically, a list of messages where a human has already said, "this is sports" or "this is politics." For those starting from scratch, public sets like the News_Category_Dataset_v3.json provide a great baseline with about 10 primary categories.
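As a starting point, here is a minimal sketch of turning a labeled file into (text, label) pairs. It assumes the file is in JSON Lines format (one JSON object per line) with `headline`, `short_description`, and `category` fields, which is how the public News Category Dataset is distributed; the helper name `load_labeled_news` is hypothetical, so check the field names against your own copy.

```python
import io
import json
from collections import Counter


def load_labeled_news(lines):
    """Turn JSON Lines records into (text, label) pairs.

    Assumes each record has 'headline', 'short_description', and 'category'
    keys; adjust the names if your dataset differs.
    """
    examples = []
    for line in lines:
        line = line.strip()
        if not line:
            continue  # skip blank lines
        record = json.loads(line)
        text = f"{record.get('headline', '')} {record.get('short_description', '')}".strip()
        examples.append((text, record["category"]))
    return examples


# Tiny in-memory stand-in for the real file; in practice pass
# open("News_Category_Dataset_v3.json", encoding="utf-8") instead.
sample = io.StringIO(
    '{"headline": "Oil prices spike", "short_description": "Brent jumps 5%", "category": "BUSINESS"}\n'
    '{"headline": "New phone launched", "short_description": "", "category": "TECH"}\n'
)
examples = load_labeled_news(sample)
print(examples[1])                              # ('New phone launched', 'TECH')
print(Counter(label for _, label in examples))  # label distribution
```

Checking the label distribution early tells you whether some categories are too rare to learn from.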

Next is Data Pre-Processing. Telegram messages are full of emojis, odd punctuation, and HTML tags that confuse models, so you need to strip those away. This involves converting everything to lowercase and applying techniques like lemmatization, which groups the inflected forms of a word by reducing them to their dictionary root so they can be analyzed as a single item. For example, "running," "ran," and "runs" all become "run." This ensures the AI doesn't treat them as three different concepts.
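The cleaning steps above can be sketched with the standard library alone. The regular expressions and the `TOY_LEMMAS` table are illustrative assumptions: the lookup table only stands in for a real lemmatizer such as NLTK's `WordNetLemmatizer` or spaCy, which handle far more words.

```python
import html
import re

# Toy lemma table standing in for a real lemmatizer (e.g. NLTK's
# WordNetLemmatizer or spaCy); a lookup like this is only for illustration.
TOY_LEMMAS = {"running": "run", "ran": "run", "runs": "run"}

TAG_RE = re.compile(r"<[^>]+>")           # HTML tags such as <b> or <a href=...>
URL_RE = re.compile(r"https?://\S+")      # links
NON_WORD_RE = re.compile(r"[^a-z0-9\s]")  # emojis, punctuation, other symbols


def preprocess(message):
    text = html.unescape(message)  # "&amp;" -> "&"
    text = text.lower()
    text = TAG_RE.sub(" ", text)
    text = URL_RE.sub(" ", text)
    text = NON_WORD_RE.sub(" ", text)
    tokens = text.split()
    return [TOY_LEMMAS.get(tok, tok) for tok in tokens]


cleaned = preprocess("<b>Markets RAN hot!</b> 🚀 https://t.me/x Runs &amp; running")
print(cleaned)  # ['markets', 'run', 'hot', 'run', 'run']
```

Note that `NON_WORD_RE` also removes accented and non-Latin letters, so this version only suits English-language feeds.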

Once the text is clean, you move to Feature Extraction. Computers don't read words; they read numbers. You have to convert text into numerical representations. You might use a simple Bag-of-Words approach, or something more sophisticated like TF-IDF (Term Frequency-Inverse Document Frequency), which weights words based on how unique they are to a specific document versus the entire collection.
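To make the TF-IDF idea concrete, here is a minimal sketch using raw term frequency and `idf = log(N / df)`. Libraries such as scikit-learn's `TfidfVectorizer` apply smoothing and normalization by default, so their numbers will differ, but the intuition is the same: words shared by many messages are down-weighted.

```python
import math
from collections import Counter


def tf_idf(docs):
    """Return one {term: weight} dict per tokenized document.

    Uses raw term frequency and idf = log(N / df), with no smoothing
    or normalization.
    """
    n_docs = len(docs)
    doc_freq = Counter(term for doc in docs for term in set(doc))
    weights = []
    for doc in docs:
        counts = Counter(doc)
        weights.append({
            term: (count / len(doc)) * math.log(n_docs / doc_freq[term])
            for term, count in counts.items()
        })
    return weights


docs = [
    ["oil", "prices", "spike"],
    ["oil", "exports", "fall"],
    ["new", "phone", "launch"],
]
w = tf_idf(docs)
print(round(w[0]["oil"], 3))    # 0.135: "oil" appears in 2 of 3 messages
print(round(w[0]["spike"], 3))  # 0.366: "spike" is unique to this message
```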

Finally, you hit Model Selection and Training. This is where the actual "learning" happens. Depending on your hardware, you might use a classic Naive Bayes classifier (a probabilistic classifier based on Bayes' theorem, often used for fast and efficient text classification) or a Support Vector Machine (SVM). Both work well on smaller datasets and require very little computing power.
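For a feel of what Naive Bayes actually computes, here is a minimal multinomial Naive Bayes with add-one (Laplace) smoothing over pre-tokenized messages. The class name and the toy training data are hypothetical; in practice you would reach for scikit-learn's `MultinomialNB` (or `LinearSVC` for an SVM).

```python
import math
from collections import Counter, defaultdict


class TinyMultinomialNB:
    """Minimal multinomial Naive Bayes with add-one (Laplace) smoothing."""

    def fit(self, docs, labels):
        self.class_counts = Counter(labels)
        self.word_counts = defaultdict(Counter)  # label -> word frequencies
        for doc, label in zip(docs, labels):
            self.word_counts[label].update(doc)
        self.vocab = {w for counts in self.word_counts.values() for w in counts}
        return self

    def predict(self, doc):
        n_docs = sum(self.class_counts.values())
        best_label, best_score = None, -math.inf
        for label, n in self.class_counts.items():
            counts = self.word_counts[label]
            total = sum(counts.values())
            # log P(label) + sum of log P(word | label); words are treated as
            # independent of each other, which is why word order is ignored
            score = math.log(n / n_docs)
            for word in doc:
                if word in self.vocab:  # skip words never seen in training
                    score += math.log((counts[word] + 1) / (total + len(self.vocab)))
            if score > best_score:
                best_label, best_score = label, score
        return best_label


train_docs = [
    ["election", "vote", "parliament"],
    ["minister", "vote", "law"],
    ["match", "goal", "league"],
    ["coach", "goal", "season"],
]
train_labels = ["politics", "politics", "sports", "sports"]
model = TinyMultinomialNB().fit(train_docs, train_labels)
print(model.predict(["late", "goal", "wins", "league"]))  # sports
```

Working in log space avoids multiplying many tiny probabilities together, which would underflow to zero on longer messages.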

Choosing Your Topic Modeling Strategy

Sometimes you don't have labels. You just have a mountain of Telegram messages and you want the AI to tell you what the topics are. This is called unsupervised learning, specifically topic modeling.

One of the oldest and most reliable tools here is Latent Dirichlet Allocation (LDA), a generative statistical model that explains a set of documents by analyzing patterns in their words. If you use Gensim, an open-source Python library specializing in topic modeling and document similarity, you can implement LDA quite quickly. However, LDA struggles with very short messages, which is exactly what Telegram feeds are made of.
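Gensim hides the mechanics, so here is a dependency-free sketch of what LDA estimates, using collapsed Gibbs sampling. This is a different inference method from the variational approach Gensim uses, and the hyperparameters (`alpha`, `beta`, `iterations`) are illustrative defaults, not recommendations.

```python
import random
from collections import Counter


def lda_gibbs(docs, num_topics, alpha=0.1, beta=0.01, iterations=200, seed=0):
    """Collapsed Gibbs sampling for LDA over tokenized documents.

    Returns (proportions, topic_words): per-document topic proportions and
    per-topic word counts.
    """
    rng = random.Random(seed)
    vocab_size = len({w for doc in docs for w in doc})
    doc_topic = [[0] * num_topics for _ in docs]         # n(d, k)
    topic_word = [Counter() for _ in range(num_topics)]  # n(k, w)
    topic_total = [0] * num_topics                       # n(k)
    assignments = []

    def update(d, w, k, delta):
        doc_topic[d][k] += delta
        topic_word[k][w] += delta
        topic_total[k] += delta

    # Start with a random topic for every token.
    for d, doc in enumerate(docs):
        assignments.append([rng.randrange(num_topics) for _ in doc])
        for w, k in zip(doc, assignments[d]):
            update(d, w, k, +1)

    topics = range(num_topics)
    for _ in range(iterations):
        for d, doc in enumerate(docs):
            for i, w in enumerate(doc):
                update(d, w, assignments[d][i], -1)  # remove current assignment
                # P(topic | all other assignments), up to a constant
                weights = [
                    (doc_topic[d][k] + alpha)
                    * (topic_word[k][w] + beta)
                    / (topic_total[k] + vocab_size * beta)
                    for k in topics
                ]
                k = rng.choices(topics, weights=weights)[0]
                assignments[d][i] = k
                update(d, w, k, +1)

    proportions = [
        [(c + alpha) / (len(doc) + num_topics * alpha) for c in counts]
        for doc, counts in zip(docs, doc_topic)
    ]
    return proportions, topic_word


docs = [
    ["oil", "prices", "opec", "oil"],
    ["opec", "oil", "barrel", "prices"],
    ["goal", "league", "match", "goal"],
    ["league", "coach", "match", "goal"],
]
props, topic_words = lda_gibbs(docs, num_topics=2)
for k, counts in enumerate(topic_words):
    print(k, [w for w, _ in counts.most_common(3)])
```

The per-document counts `n(d, k)` also show why short messages hurt: a three-word alert contributes only three tokens, so its topic proportions rest on very little evidence and are dominated by the prior `alpha`.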

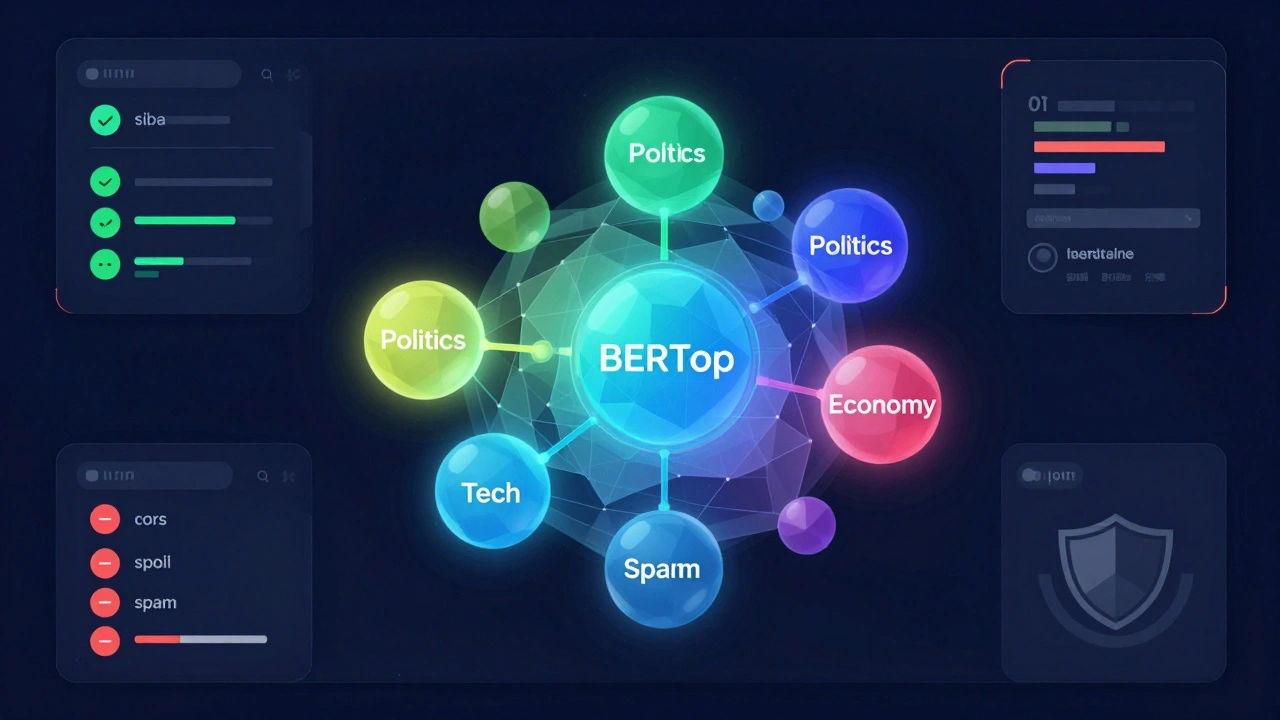

If you want a modern approach, go for BERTopic, a topic modeling technique that leverages transformer-based embeddings to create dense clusters of semantically similar documents. Unlike LDA, BERTopic understands context. It uses UMAP to reduce the dimensionality of the embeddings and HDBSCAN to cluster the messages into groups. This preserves the "meaning" of each sentence much better than just counting word frequencies.

| Method | Best For | Pros | Cons |
|---|---|---|---|
| Naive Bayes | Small, labeled datasets | Extremely fast, low RAM | Ignores word order |
| LDA (Gensim) | Discovering general themes | Mathematically sound | Bad with short text |
| BERTopic | Complex, short-form feeds | High semantic accuracy | Needs more GPU power |

Dealing with the "Short Text" Problem

Telegram is a unique beast because a "news item" could be a 500-word article or a three-word alert like "Oil prices spike!" This brevity creates a problem called data sparsity. When there aren't many words, the AI has fewer clues to work with.

To solve this, researchers often use Named Entity Recognition (NER), an NLP task that identifies and categorizes key entities in text, such as people, organizations, and locations. Using a tool like spaCy, an advanced Python library for industrial-strength Natural Language Processing, you can extract the "who" and "where" of a message. If a message mentions "Apple," "Cupertino," and "iPhone," the model can safely classify it as "Tech" even if the message is only ten words long.
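The step from entities to a category can be sketched as a simple vote. In spaCy, a pretrained pipeline exposes detected entities through `doc.ents` (each span has `.text` and `.label_`); the sketch below swaps that model for a hypothetical hand-written gazetteer, `ENTITY_TOPICS`, so it runs without any downloads.

```python
from collections import Counter

# Hypothetical entity -> category gazetteer; a real system would get the
# entities from an NER model (e.g. spaCy's doc.ents) and map them to categories.
ENTITY_TOPICS = {
    "apple": "Tech",
    "cupertino": "Tech",
    "iphone": "Tech",
    "opec": "Business",
    "kremlin": "Politics",
}


def classify_by_entities(tokens, default="Unknown"):
    """Let every recognized entity vote for its category."""
    votes = Counter(ENTITY_TOPICS[t] for t in tokens if t in ENTITY_TOPICS)
    if not votes:
        return default
    return votes.most_common(1)[0][0]


msg = "apple unveils new iphone in cupertino".split()
print(classify_by_entities(msg))  # Tech
```

Unlike a statistical NER model, a lookup table cannot tell "Apple the company" from "apple the fruit"; that sensitivity to context is what the model adds.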

If you're unsure how many topics you actually have, use a visualization tool called pyLDAvis. It draws an intertopic distance map of your LDA topics. If two topics overlap heavily on the map, they're probably the same thing, and you should reduce your total topic count. In real-world Telegram tests, user personas typically end up with anywhere from 3 to 38 distinct topics, depending on how niche their interests are.
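pyLDAvis's default map is based on Jensen-Shannon divergence between the topics' word distributions. The sketch below computes that divergence directly and flags near-duplicate topics; the `threshold` value is a hypothetical cut-off you would tune by inspecting your own topics.

```python
import math
from itertools import combinations


def js_divergence(p, q):
    """Jensen-Shannon divergence in bits (0 = identical, 1 = disjoint)."""
    words = set(p) | set(q)
    m = {w: 0.5 * (p.get(w, 0.0) + q.get(w, 0.0)) for w in words}

    def kl(a):
        # KL divergence of distribution a from the midpoint m
        return sum(a[w] * math.log2(a[w] / m[w]) for w in a if a[w] > 0)

    return 0.5 * kl(p) + 0.5 * kl(q)


def near_duplicate_topics(topics, threshold=0.3):
    """Return index pairs of topics whose word distributions barely differ."""
    return [
        (i, j)
        for i, j in combinations(range(len(topics)), 2)
        if js_divergence(topics[i], topics[j]) < threshold
    ]


topics = [
    {"oil": 0.5, "opec": 0.3, "prices": 0.2},
    {"oil": 0.45, "opec": 0.35, "barrel": 0.2},
    {"goal": 0.6, "league": 0.4},
]
duplicates = near_duplicate_topics(topics)
print(duplicates)  # [(0, 1)]
```

A flagged pair like `(0, 1)` is a hint to merge those topics or lower the topic count and retrain.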

Beyond Just Categories: Sentiment and Spam

Classification isn't just about the "what"; it's also about the "how." Once you've categorized a message as "Politics," you can layer on sentiment analysis. This tells you if the news is being reported as positive, negative, or neutral. Combining topic modeling with sentiment gives you a powerful dashboard: you can see not only that people are talking about the economy, but that the vibe is overwhelmingly negative.
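The dashboard idea boils down to grouping sentiment labels by topic. The sketch below uses a hypothetical mini-lexicon in place of a real sentiment model (for example, the VADER lexicon that ships with NLTK), so only the aggregation logic should be taken at face value.

```python
from collections import Counter, defaultdict

# Hypothetical mini-lexicon; real projects would use a trained model or an
# established lexicon such as VADER instead.
POSITIVE = {"growth", "surge", "record", "recovery"}
NEGATIVE = {"crash", "crisis", "layoffs", "inflation"}


def sentiment(tokens):
    score = sum(t in POSITIVE for t in tokens) - sum(t in NEGATIVE for t in tokens)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


def sentiment_by_topic(messages):
    """messages: iterable of (topic, tokens) pairs -> {topic: Counter of labels}."""
    table = defaultdict(Counter)
    for topic, tokens in messages:
        table[topic][sentiment(tokens)] += 1
    return dict(table)


feed = [
    ("Economy", ["inflation", "deepens", "crisis"]),
    ("Economy", ["layoffs", "spread"]),
    ("Economy", ["exports", "show", "growth"]),
]
summary = sentiment_by_topic(feed)
print(summary)  # {'Economy': Counter({'negative': 2, 'positive': 1})}
```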

Then there's the issue of noise. Telegram feeds are notorious for spam. You can train a binary classifier (Spam vs. Ham) as the very first step in your pipeline. If the model flags a message as spam, it gets tossed out before it ever reaches the topic classification stage. This keeps your data clean and prevents your "Business" category from being filled with fake crypto schemes.
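The ordering matters more than the models themselves: the spam check runs first, and only "ham" reaches topic classification. Here is a minimal sketch of that gate, where `toy_is_spam` and `toy_classify` are hypothetical keyword rules standing in for your trained classifiers.

```python
from typing import Callable, Iterable, List, Tuple


def route_messages(
    messages: Iterable[str],
    is_spam: Callable[[str], bool],
    classify: Callable[[str], str],
) -> Tuple[List[Tuple[str, str]], List[str]]:
    """Run the spam filter first; only 'ham' reaches the topic classifier."""
    categorized, discarded = [], []
    for text in messages:
        if is_spam(text):
            discarded.append(text)  # never reaches topic classification
        else:
            categorized.append((text, classify(text)))
    return categorized, discarded


# Hypothetical stand-ins for trained models, for illustration only.
def toy_is_spam(text):
    lowered = text.lower()
    return "airdrop" in lowered or "100x" in lowered


def toy_classify(text):
    return "Business" if "shares" in text.lower() else "Other"


kept, dropped = route_messages(
    ["Free AIRDROP, 100x guaranteed!", "Tesla shares fall 3%"],
    toy_is_spam,
    toy_classify,
)
print(kept)     # [('Tesla shares fall 3%', 'Business')]
print(dropped)  # ['Free AIRDROP, 100x guaranteed!']
```

Passing the models in as callables keeps the pipeline independent of which spam or topic classifier you end up training.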

For the most advanced users, multimodal analysis is the next frontier. This means using computer vision to analyze images or videos attached to the text. If a message has a photo of a skyscraper and the text mentions "real estate," the AI can combine both signals to make a more confident classification.
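One simple way to combine the two signals is late fusion: run a text classifier and an image classifier separately, then take a weighted average of their per-class probabilities. The probabilities below are made-up model outputs, and `text_weight` is a hypothetical setting you would tune on validation data; other designs (such as joint multimodal models) exist.

```python
def late_fusion(text_probs, image_probs, text_weight=0.6):
    """Weighted average of per-class probabilities from two models."""
    labels = set(text_probs) | set(image_probs)
    fused = {
        label: text_weight * text_probs.get(label, 0.0)
        + (1 - text_weight) * image_probs.get(label, 0.0)
        for label in labels
    }
    best = max(fused, key=fused.get)
    return best, fused


# Made-up model outputs for a "real estate" message with a skyscraper photo.
text_probs = {"Real Estate": 0.55, "Business": 0.45}
image_probs = {"Real Estate": 0.80, "Travel": 0.20}
label, scores = late_fusion(text_probs, image_probs)
print(label, round(scores["Real Estate"], 2))  # Real Estate 0.65
```

Here the text model alone is nearly undecided (0.55 vs. 0.45), and the image evidence tips the fused score clearly toward "Real Estate."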

Common Pitfalls to Avoid

The biggest mistake beginners make is skipping the cleaning phase. If you leave punctuation and stopwords (like "the," "is," and "at") in your data, your top "topic" will just be a list of the most common English words. Always use a stopword removal list.
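A quick word count shows the problem. The `STOPWORDS` set below is a small illustrative subset; NLTK (`nltk.corpus.stopwords`) and spaCy ship much fuller lists.

```python
from collections import Counter

# Small illustrative subset; NLTK and spaCy ship full stopword lists.
STOPWORDS = {"the", "is", "at", "a", "of", "in", "to", "and", "on"}

messages = [
    "the central bank is raising rates at the end of the month",
    "the bank of england is holding rates",
    "rates rise in the eurozone",
]
tokens = [t for m in messages for t in m.split()]
raw_top = Counter(tokens).most_common(2)
clean_top = Counter(t for t in tokens if t not in STOPWORDS).most_common(2)
print(raw_top)    # [('the', 5), ('rates', 3)]
print(clean_top)  # [('rates', 3), ('bank', 2)]
```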

Another trap is overfitting. This happens when your model is so tuned to your specific training data that it can't handle new, real-world messages. To avoid this, always split your data into a training set and a testing set. If your model gets 99% accuracy on training data but 60% on new data, you've overfitted.
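The split-and-compare check can be sketched in a few lines. The function names and the `max_gap` tolerance are hypothetical (scikit-learn's `train_test_split` is the usual off-the-shelf splitter), and the "model" here deliberately just memorizes its training data to show what overfitting looks like.

```python
import random


def split_train_test(items, test_fraction=0.2, seed=42):
    """Shuffle a copy of the data and hold out a test portion."""
    items = list(items)
    random.Random(seed).shuffle(items)
    n_test = max(1, int(len(items) * test_fraction))
    return items[n_test:], items[:n_test]


def accuracy(predict, examples):
    return sum(predict(x) == y for x, y in examples) / len(examples)


def overfitting_check(predict, train, test, max_gap=0.1):
    """Return (train_acc, test_acc, overfit?); max_gap is a hypothetical tolerance."""
    train_acc, test_acc = accuracy(predict, train), accuracy(predict, test)
    return train_acc, test_acc, (train_acc - test_acc) > max_gap


data = [(f"msg {i}", "politics" if i % 2 else "sports") for i in range(50)]
train, test = split_train_test(data)
memory = dict(train)  # a "model" that only memorizes the training set


def predict(text):
    return memory.get(text, "unknown")


print(overfitting_check(predict, train, test))  # (1.0, 0.0, True)
```

Perfect training accuracy combined with failure on unseen messages is the clearest signature of overfitting.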

Finally, don't ignore the human element. AI is a tool, not a replacement. Periodically review a sample of the classified messages to ensure the AI hasn't started grouping "Apple the fruit" with "Apple the company." A quick manual audit every few hundred messages keeps the system honest.

Which Python libraries are best for Telegram NLP?

For most projects, a combination of NLTK for basic text cleaning, spaCy for named entity recognition, and Gensim or BERTopic for the actual topic modeling provides the best balance of speed and accuracy.

Can I classify news in real-time?

Yes, by integrating your NLP pipeline with the Telegram Bot API. You can set up a listener that processes every incoming message through your pre-trained model and assigns a category instantly.
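A minimal long-polling listener might look like the sketch below. It calls the Bot API's `getUpdates` method with `offset` and `timeout`, and reads text from the `message` or `channel_post` field of each update; a bot typically has to be added to a channel as an administrator to receive its posts. The token and the `classify` function are placeholders for your own, and only `process_updates` is exercised offline here.

```python
import json
import urllib.parse
import urllib.request

API_URL = "https://api.telegram.org/bot{token}/getUpdates"


def extract_text(update):
    """Pull the text (or media caption) out of a Bot API Update object."""
    post = update.get("message") or update.get("channel_post") or {}
    return post.get("text") or post.get("caption")


def process_updates(updates, classify, offset=0):
    """Classify each update's text; return results and the next offset."""
    results = []
    for update in updates:
        offset = max(offset, update["update_id"] + 1)  # acknowledge this update
        text = extract_text(update)
        if text:
            results.append((text, classify(text)))
    return results, offset


def poll_forever(token, classify):
    """Long-poll getUpdates and classify messages as they arrive (needs network)."""
    offset = 0
    while True:
        query = urllib.parse.urlencode({"offset": offset, "timeout": 30})
        url = f"{API_URL.format(token=token)}?{query}"
        with urllib.request.urlopen(url, timeout=40) as resp:
            payload = json.load(resp)
        results, offset = process_updates(payload.get("result", []), classify, offset)
        for text, category in results:
            print(f"[{category}] {text}")


# Offline check with hand-written updates and a hypothetical keyword classifier.
def keyword_classify(text):
    return "Business" if "oil" in text.lower() else "Tech"


sample = [
    {"update_id": 7, "channel_post": {"text": "Oil prices spike!"}},
    {"update_id": 8, "message": {"caption": "New iPhone leak"}},
]
results, next_offset = process_updates(sample, keyword_classify)
print(results, next_offset)
```

Advancing `offset` past the last `update_id` tells Telegram those updates were handled, so they are not delivered again on the next poll.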

Is BERTopic better than LDA?

Generally, yes. BERTopic uses transformers to understand semantic meaning, making it far superior for short messages found in Telegram feeds, whereas LDA relies on word frequency which often fails with short texts.

How do I handle multiple languages in one feed?

You should first implement a language detection layer (using libraries like langdetect). Once the language is identified, route the text to a language-specific preprocessing pipeline and model.
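The routing layer itself is small. In the sketch below the detector is passed in as a function, so you could supply `langdetect`'s `detect` (which returns codes such as `"en"` or `"ru"`); `toy_detect` and the per-language pipelines are hypothetical stand-ins so the example runs without extra packages.

```python
from typing import Callable, Dict


def route_by_language(
    text: str,
    detect: Callable[[str], str],
    pipelines: Dict[str, Callable[[str], object]],
    fallback: str = "en",
):
    """Detect the language, then hand the text to that language's pipeline.

    Languages without a dedicated pipeline fall back to `fallback`.
    """
    lang = detect(text)
    pipeline = pipelines.get(lang, pipelines[fallback])
    return pipeline(text)


def toy_detect(text):
    # Hypothetical stand-in: any Cyrillic letter -> Russian, otherwise English.
    return "ru" if any("\u0400" <= ch <= "\u04ff" for ch in text) else "en"


pipelines = {
    "en": lambda t: ("en", t),  # placeholder for the English pipeline
    "ru": lambda t: ("ru", t),  # placeholder for the Russian pipeline
}
print(route_by_language("Нефть дорожает", toy_detect, pipelines))  # ('ru', 'Нефть дорожает')
```

Keep in mind that language detectors are unreliable on very short or emoji-only messages, so a fallback pipeline is worth having.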

What is the best way to visualize the resulting topics?

pyLDAvis is the industry standard for LDA models, as it shows intertopic distance maps. For BERTopic, the built-in visualize_topics method provides an excellent interactive map of how clusters relate to one another.

Next Steps for Implementation

If you're ready to build, start by exporting a few hundred messages from your favorite Telegram channels into a CSV file. Run a basic NLTK cleaning script to see how much noise you're dealing with. If you have a GPU, jump straight into BERTopic; if you're working on a standard laptop, start with a Naive Bayes classifier to get a feel for the data.
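Before reaching for NLTK, a stdlib-only first pass can measure how noisy your export is. This sketch assumes the CSV has a `text` column (adjust `text_column` to match your export), and its emoji pattern covers only the most common Unicode emoji blocks, so treat the numbers as rough.

```python
import csv
import io
import re

NOISE_PATTERNS = {
    "url": re.compile(r"https?://\S+"),
    "html_tag": re.compile(r"<[^>]+>"),
    "emoji": re.compile("[\U0001F300-\U0001FAFF\u2600-\u27BF]"),  # rough coverage
}


def noise_report(rows, text_column="text"):
    """Share of messages containing each kind of noise."""
    texts = [row[text_column] for row in rows]
    return {
        name: sum(bool(pattern.search(t)) for t in texts) / len(texts)
        for name, pattern in NOISE_PATTERNS.items()
    }


# In-memory stand-in for an exported file; use open("export.csv", newline="") in practice.
export = io.StringIO(
    "date,text\n"
    "2024-05-01,Oil prices spike! https://example.com/oil\n"
    "2024-05-01,<b>Breaking</b> 🚨 rates unchanged\n"
)
report = noise_report(csv.DictReader(export))
print(report)  # {'url': 0.5, 'html_tag': 0.5, 'emoji': 0.5}
```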

For those looking to scale, consider moving your pipeline to a cloud environment. Processing thousands of messages per second can require more memory and compute than a typical PC provides. Once your classifier is stable, you can start experimenting with sentiment analysis to add that extra layer of insight to your news feed.